Google having a hiccup in Colombia

Today Google is having a hiccup in Colombia. Users accessing www.google.com are getting the following result:

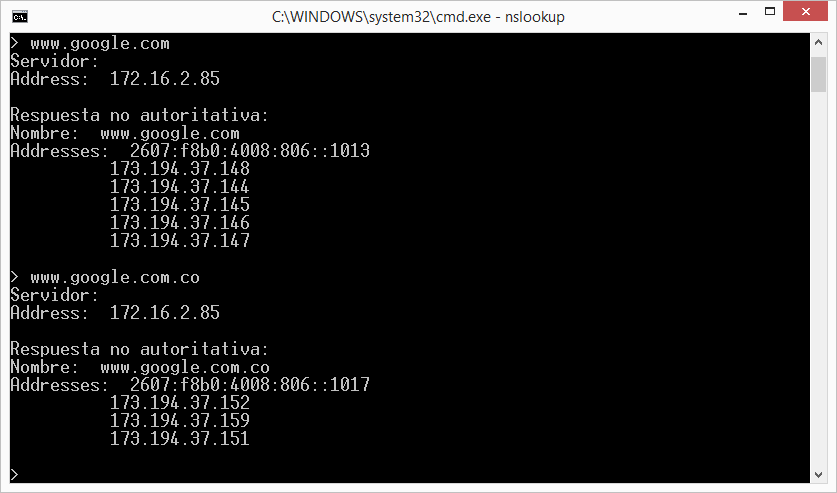

That looked weird. I was wondering if it was some kind of DNS spoofing attack, but it's not. www.google.com.co is working fine, but www.google.com is not. Both of them are in the same netblock:

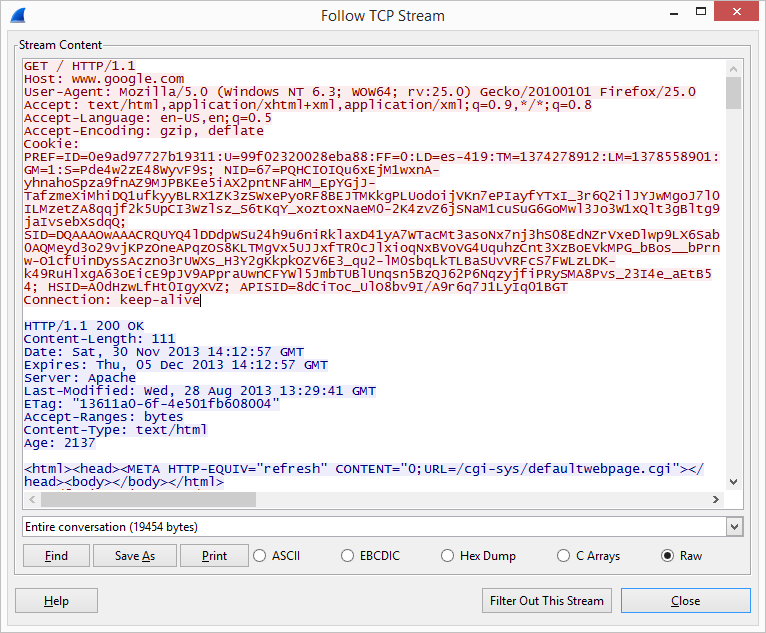
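The "same netblock" check can be reproduced by hand once you have the resolved addresses. What follows is a minimal sketch: the function name `same_netblock`, the /24 prefix length, and the sample addresses (RFC 5737 documentation ranges) are all assumptions for illustration, not the real Google ranges from the screenshot.

```python
import ipaddress

def same_netblock(addr_a, addr_b, prefix_len=24):
    """Return True if both IPv4 addresses fall inside the same prefix."""
    # strict=False lets us build the network from a host address.
    net_a = ipaddress.ip_network(f"{addr_a}/{prefix_len}", strict=False)
    return ipaddress.ip_address(addr_b) in net_a

# Placeholder addresses, not real lookups.
print(same_netblock("192.0.2.10", "192.0.2.200"))
print(same_netblock("192.0.2.10", "198.51.100.7"))
```

To feed it live data, the addresses could come from `socket.gethostbyname()` for each hostname; the prefix length to compare against depends on how the provider actually allocates its space.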

The TCP stream from the packet capture shows a redirection to a non-existent file:

Full packet capture of this problem can be downloaded here.

Are you noticing the same problem? Please contact us!

Manuel Humberto Santander Peláez

SANS Internet Storm Center - Handler

Twitter: @manuelsantander

Web:http://manuel.santander.name

e-mail: msantand at isc dot sans dot org

A review of Tubes, A Journey to the Center of the Internet

While not immediately or obviously related to information security, I was so profoundly affected by my recent read of Andrew Blum's (@ajblum) Tubes, A Journey to the Center of the Internet, I believe it warrants a review for the Internet Storm Center readership. Remember an Internet ice age ago (2006) when Senator Ted Stevens described the Internet as "a series of tubes?" To this day it's a well recognized Internet meme, but Blum's Tubes goes a long way towards establishing discernible credibility for the good Senator's declaration. In this extraordinarily well-written exploration of the Internet's physical infrastructure he acknowledges that "one thing it most certainly is, nearly everywhere, is, in fact, a series of tubes."

Even as an engineer and analyst who has spent many years helping defend one of the world's largest Internet presences I found Blum's book extremely refreshing and deeply insightful. I've been in some of the very datacenters and Internet exchanges he describes and yet sadly have taken for granted that, as this book forces you to recognize, the Internet is a physical, living thing and does indeed flow through tubes.

Blum manages to convey a true sense of the physicality of the Internet while asking lucid questions regarding its core nodes and links. Of a router he ponders that which is invisible to the naked eye. "What was the physical path in there? And what might that tell me about how everything else is connected? What was the reductio ad absurdum of the tubes?" Heady stuff to be sure, but he continues a few pages later indicating that on his journey to the center of the Internet his bare common turned out to be the router lab, and that what he saw "was not the essence of the Internet but its quintessence-not the tubes, but the light." That light, that quintessence, is all about the fiber, the glass tendrils, that make up our incredibly connected world. What Blum's book requires you to consider and always remember is how physical that connectivity really is.

Blum's exploration of the Internet is an actual walk through major network exchanges (PAIX, LINX) and providers (Equinix), to the cable landing station near Land's End in the UK where endless fiber connections terminate before their transoceanic journey via cables to points world wide. He spends time in datacenters near and dear to my heart in the Columbia River Valley while encapsulating the work of visionary people whose work I've long admired, such as Michael Manos.

Blum's digital safari even occasionally wanders into the darker corners of the Internet where much of my worry focuses.

First, he expresses nervousness regarding the "concentrated" nature of the exchanges and datacenters that serve as the core hubs of our Internet and wonders if it's responsible to explore and describe their physicality at such length. Yet, he discovers and concludes that there is an openness derived from the Internet's legendary robustness. "Well designed networks have redundancies built in; in the event of a failure at a single point, traffic would quickly route around it." He points out that one of the more significant threats to the Internet "is an errant construction backhoe." I can't tell you how many times we've lost connections due to fiber cuts while unrelated construction was underway that directly intersected our physical paths, or an anchor dragged the ocean floor in just the right (wrong) spot.

Second, Blum calls into question some of the "disingenuous" and "feigned obscurity" of the cloud "which asks us to believe that our data is an abstraction, not a physical reality." He voices his frustration further referring to that "feigned obscurity" as a malignant advantage of the cloud as it practically demands our ignorance with a "we'll take care of that for you" attitude. Blum points out that our data is always somewhere, often in two or more places, and that we should know where it is. He asserts that a basic tenet of today's Internet is that if "we're entrusting so much of who we are to large companies, they should entrust us with a sense of where they're keeping it all, and what it looks like."

Weigh this premise against revelations brought to light via Snowden's disclosures and you've got some deeper thinking to do as to the true nature of your data and expectations of privacy.

Tubes, A Journey to the Center of the Internet, is quite simply the best book I've read in a very long time. Blum's ability to converge technology and wit, data and philosophy, and dare I say, humanity with the Internet, transcends information security or network engineering. Blum is a gifted writer whose eloquent storytelling and turn of phrase bring many positive returns.

Tubes reminds us that the "Internet is made up of pulses of light" and while those pulses might seem miraculous, they're not magic. Blum asks that we remember that the Internet exists, that it has a physical reality and an essential infrastructure. In his effort to "wash away the technological alluvium of contemporary life in order to see - fresh in the sunlight - the physical essence of our digital world", Blum succeeds well beyond words that my simple review can convey.

Treat yourself, and a friend or loved one, to Tubes this holiday season and enjoy; you will experience the Internet in a wholly different light.


Microsoft Security Advisory (2914486): Vulnerability in Microsoft Windows Kernel 0 day exploit in wild

FireEye posted a story earlier today outlining a zero day affecting XP and Windows 2003:

http://www.fireeye.com/blog/technical/cyber-exploits/2013/11/ms-windows-local-privilege-escalation-zero-day-in-the-wild.html

Microsoft has followed it up with a matching post:

Microsoft Security Advisory (2914486): Vulnerability in Microsoft Windows Kernel Could Allow Elevation of Privilege:

https://technet.microsoft.com/en-us/security/advisory/2914486

Note that the temporary fix outlined breaks some Windows features, specifically some IPsec VPN functions.

The real story here isn't the zero day or the workaround fix, or even that Adobe is involved. The real story is that this zero day is just the tip of the iceberg. Malware authors today are sitting on their XP zero day vulnerabilities and attacks, because they know that after the last set of hotfixes for XP is released in April 2014 (which we're now officially calling "WinMageddon"), their exploits will work forever against hundreds of thousands (millions?) of XP workstations. ( http://windows.microsoft.com/en-us/windows/lifecycle )

If you are still running Windows XP, there is no project on your list that is more important than migrating to Windows 7 or 8. The "never do what you can put off until tomorrow" project management approach is on a ticking clock here: if you leave it until April, you'll be migrating during active hostilities.

===============

Rob VandenBrink

Metafore


ATM Traffic + TCPDump + Video = Good or Evil?

I was working with a client recently, working through the move of a Credit Union branch. In passing, he mentioned that they were looking at a new security camera setup, and the vendor had mentioned that it would need a SPAN or MIRROR port on the switch set up. At that point my antennae came online - SPAN or MIRROR ports set up a session where all packets from one switch port are "mirrored" to another switch port. Needless to say, this is not something you want to do for fun and giggles in a bank!

Anyway, I asked what the SPAN port was for, and the answer was that the camera server is set to capture the packets from the ATMs. Apparently this has full support from the banking service providers and law enforcement, as it allows them to tie a fraudulent/suspect ATM transaction directly to the associated video clip of the person keying it in. No tedious fast forward / reverse on the video, and hoping your timestamps all match up.

OK, but at that point it started to sound bad-bad-bad from a PCI perspective. The question in my mind at that point was - did the banking provider give up their encryption keys to the video company, or is the ATM transaction data not as encrypted as it should be?

So, time to break out wireshark and take a look? The answer to that question would be a resounding NO. It was time to ask for PERMISSION to capture a test transaction. If you've taken SANS SEC504 or SEC560 (or any of several other classes), you know that some days, the biggest difference between a successful security consultant and a potential convict is permission.

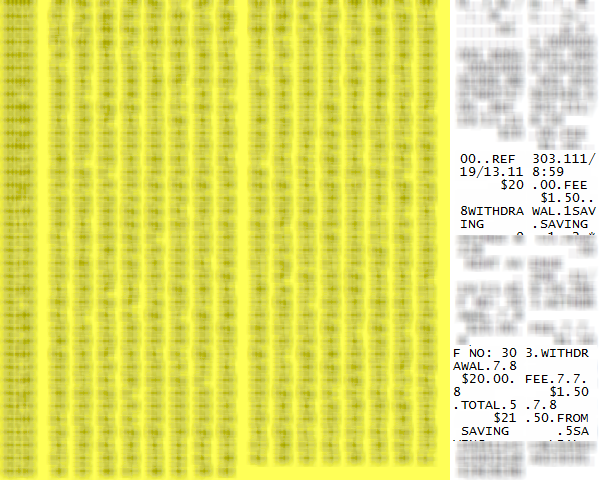

A week later, with permission in hand and my client with me, we did a couple of $20 withdrawals and caught them "on film". What we found was a third possibility, and if I had thought it through I should have anticipated this result. The transaction was all encrypted as we expected on tcp/3002, or at least obfuscated enough that the encoding wasn't obvious (I'll be digging a bit more into that, stay tuned). But when it came time to print the receipt, the exact character-by-character text of the receipt printout was in clear 8-bit ASCII text, all in one packet (including the required CRLFs, spaces and tabs). And when you opt to not print a transaction receipt, that "receipt packet" is still sent.

If you've ever looked closely at an ATM receipt, the date, time, ATM identifier and often the street address will be printed, along with the dollar amounts and specifics of the transaction. But the actual account identifiers are mostly represented by "*" characters, with just enough of the number exposed so that you can verify that things happened as they should. Most of the captured packet below is blurred out (sorry, but it's against my actual account), but you can see some of the text clearly, and it's exactly as printed on the paper receipt.

As a side note, if you ever are in a position to look at a capture of this type though, you might find TCPDUMP easier to work with than Wireshark - Wireshark wants to decode this as IPA (GSM over IP), and of course that means all the decodes are messed up. It's much easier in cases like this to just look at the hex representations of the packets.
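When the dissector gets in the way, pulling the readable text straight out of the raw payload is often enough. The sketch below works like the Unix `strings` tool; the function name `printable_runs` and the sample payload (including the receipt text) are made up for illustration and are not taken from the actual capture.

```python
import re

def printable_runs(payload: bytes, min_len: int = 4):
    """Return runs of printable ASCII (plus tab, CR, LF) of at least min_len bytes."""
    pattern = re.compile(rb"[\x20-\x7e\t\r\n]{%d,}" % min_len)
    return [m.group().decode("ascii") for m in pattern.finditer(payload)]

# Hypothetical payload: binary framing bytes around a clear-text receipt.
sample = (b"\x00\x17\x9a\x02"
          b"WITHDRAWAL   $20.00\r\nACCT ************1234\r\n"
          b"\xff\x00")
for run in printable_runs(sample):
    print(repr(run))
```

The same function can be pointed at payload bytes exported from a capture; anything that survives as a long readable run is, by definition, not encrypted on the wire.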

So the stuff we really want protected is still protected, but there's enough exposed in that receipt packet that our friends in law enforcement can tie the video to the transaction, with help from the folks on the banking side. A reasonable compromise I think, but take a look at your receipt next time you make an ATM withdrawal.

Are you OK with the data on that slip of paper being sent in the clear?

If you are a QSA, are you still OK with it?

If I've missed anything, or if you've got an opinion (in either direction) on this, please use our comment form to post!

===============

Rob VandenBrink

Metafore


More Bad Port 0 Traffic

Thanks to an alert reader for sending us a few odd packets with "port 0" traffic. In this case, we got full packet captures, and the packets just don't make sense.

The TTL of the packet changes with source IP address, making spoofing less likely. The TCP headers overall don't make much sense. There are packets with a TCP header length of 0, or packets with odd flag combinations. This could be an attempt to fingerprint, but even compared to nmap, this is very noisy. The packets arrive rather slowly, far from DDoS levels.

Here are a couple samples (I anonymised the target IP). Any hints as to what could cause this are welcome.

IP truncated-ip - 4 bytes missing! (tos 0x0, ttl 52, id 766, offset 0, flags [DF], proto TCP (6), length 88)

94.102.63.55.0 > 10.10.10.10.0: tcp 68 [bad hdr length 0 - too short, < 20]

0x0000: 4500 0058 02fe 4000 3406 91f1 5e66 3f37

0x0010: 0a0a 0a0a 0000 0000 55c3 7203 0000 0000

0x0020: 0c00 0050 418b 0000 6e82 ef01 0000 0000

0x0030: 25b0 ce4b 0000 0000 a002 3cb0 9a8b 0000

0x0040: 0204 0f2c 0402 080a 0005 272d 0005 272d

0x0050: 0103 0300

IP truncated-ip - 4 bytes missing! (tos 0x10, ttl 47, id 28629, offset 0, flags [DF], proto TCP (6), length 60)

46.137.48.107.0 > 10.10.10.10.0: Flags [P.UW] [bad hdr length 56 - too long, > 40]

0x0000: 4510 003c 6fd5 4000 2f06 68cf 2e89 306b

0x0010: 0a0a 0a0a 0000 0000 51a9 89b8 0000 0000

0x0020: e6b8 0050 b315 0000 ec67 0d66 0000 0000

0x0030: 0000 0000 0000 0000

IP truncated-ip - 4 bytes missing! (tos 0x80, ttl 51, id 45284, offset 0, flags [DF], proto TCP (6), length 60)

186.202.179.99.0 > 10.10.10.10.0: Flags [SUW], seq 1603085765, win 27016, urg 0, options [[bad opt]

0x0000: 4580 003c b0e4 4000 3306 1416 baca b363

0x0010: 0a0a 0a0a 0000 0000 5f8d 25c5 0000 0000

0x0020: aba2 6988 23fa 0000 f271 af2a 0000 0000

0x0030: 0000 0000 0000 0000
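To see where tcpdump's complaints come from, the first sample can be decoded by hand. This is a sketch only: the hex string is copied from the first dump above, and `decode_headers` is a hypothetical helper that unpacks the fixed IPv4 header and the first 16 bytes of what should be the TCP header.

```python
import socket
import struct

# First sample from the capture above.
PACKET_HEX = (
    "4500005802fe4000340691f15e663f37"
    "0a0a0a0a0000000055c3720300000000"
    "0c000050418b00006e82ef0100000000"
    "25b0ce4b00000000a0023cb09a8b0000"
    "02040f2c0402080a0005272d0005272d"
    "01030300"
)

def decode_headers(raw: bytes) -> dict:
    """Decode the IPv4 header and the leading fields of the TCP header."""
    (ver_ihl, tos, total_len, ident, flags_frag,
     ttl, proto, _cksum, src, dst) = struct.unpack("!BBHHHBBH4s4s", raw[:20])
    ihl = (ver_ihl & 0x0F) * 4  # IP header length in bytes
    sport, dport, seq, ack, off_flags, window = struct.unpack(
        "!HHIIHH", raw[ihl:ihl + 16])
    return {
        "src": socket.inet_ntoa(src),
        "dst": socket.inet_ntoa(dst),
        "ttl": ttl,
        "total_len": total_len,   # length claimed by the IP header
        "captured": len(raw),     # bytes actually present
        "sport": sport,
        "dport": dport,
        "tcp_header_len": (off_flags >> 12) * 4,  # data offset, in bytes
        "tcp_flags": off_flags & 0x01FF,
    }

info = decode_headers(bytes.fromhex(PACKET_HEX))
for key, value in info.items():
    print(f"{key:15} {value}")
```

The decoded values line up with the tcpdump summary: both ports are 0, the data offset nibble is 0 (hence "bad hdr length 0"), and the IP header claims 88 bytes while only 84 are present.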

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Planning for Failure

I have been witness to network and system security failure for nearly two decades. While the players change and the tools and methods continue to evolve, it's usually the same story over and over: eventually errors add up and combine to create a situation that someone finds and exploits. The root-cause analysis, or post mortem, or whatever you call it in your environment consists of a constellation-of-errors, or kill-chain, or what-have-you. It's up to you to develop an environment that both provides the services that your business requires and has enough complementary layers of defenses to make incidents either a rare occurrence or a non-event.

Working against you are not only a seemingly endless army of humans and automata, but also the following truths or as I call them "The Three Axioms of Computer Security":

- There will always be a new vulnerability.

- AV will always be out of date.

- Users will always click on a link.

How do you create a network that can survive under these conditions? Plan on it happening.

In your design, account for the inevitable failure of other tools and layers. Work from the outside in: Firewall, WAF, Webserver, Database server. Work from the bottom up: Hardware, OS, Security Tools, Application.

I'm imagining the typical DMZ layout for this hypothetical design: External Firewall, External services (DNS, Email, Web), Internal Firewall, Internal services (back office, file/print share, etc.). This is your typical "defense in depth" layout that incorporates the assume-failure philosophy a bit. It attempts to isolate the external servers, which the model assumes are more likely to be compromised, from the internal servers. This isn't the correct assumption for modern exploit scenarios, so we'll fix that as we go through this exercise.

Nowadays, the external firewall basically fails right off the bat in most exploit scenarios. It has to let in DNS, SMTP, and HTTP/HTTPS, so if it's not actively blocking source IPs in response to other triggers in your environment, its only value-add in protecting these services comes from acting as a separate, corroborating log source. It is still completely necessary, because without it you open your network up to direct attack on services that you might not be aware you're exposing. So firewalls remain a requirement, but keep in mind that they don't cover attacks coming in on your exposed applications. The firewall also creates a choke point on your network that you can exploit for monitoring and enforcement (it's a good spot for your IDS, which can inform the firewall to block known malicious traffic). This is all good strategy, but it focuses on the threat from the outside.

However, if you plan your firewall strategy with the assumption that other layers will eventually fail, you'll configure the firewall so that outbound traffic is also strongly limited to just the protocols that it needs. Does your webserver really need to send out email to the internet? Does it need to surf the internet? While you may not pay much attention to the various incoming requests that are dropped by the firewall, you need to pay critical attention to any outbound traffic that is being dropped.
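One way to give dropped outbound traffic the attention it deserves is to summarize it separately from the inbound noise. The sketch below assumes firewall events have already been parsed into dictionaries; the field names (`src`, `dst`, `dst_port`, `action`), the 10.0.0.0/8 internal range, and the sample events are all hypothetical and would need to match your own log format.

```python
import ipaddress
from collections import Counter

def dropped_outbound(events, internal_net="10.0.0.0/8"):
    """Count dropped connections originating inside internal_net, per (source, port)."""
    net = ipaddress.ip_network(internal_net)
    counts = Counter()
    for ev in events:
        if ev["action"] == "drop" and ipaddress.ip_address(ev["src"]) in net:
            counts[(ev["src"], ev["dst_port"])] += 1
    return counts

# Made-up events: a web server trying to send mail, plus unrelated traffic.
events = [
    {"src": "10.1.1.20", "dst": "198.51.100.9", "dst_port": 25, "action": "drop"},
    {"src": "10.1.1.20", "dst": "198.51.100.9", "dst_port": 25, "action": "drop"},
    {"src": "203.0.113.5", "dst": "10.1.1.20", "dst_port": 22, "action": "drop"},
    {"src": "10.1.1.20", "dst": "192.0.2.53", "dst_port": 53, "action": "allow"},
]
for (src, port), hits in dropped_outbound(events).most_common():
    print(src, port, hits)
```

A server that repeatedly hits an outbound deny rule is either misconfigured or doing something it shouldn't, and both are worth a look.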

The limitation of standard firewalls is that they don't perform deep packet inspection and are limited to the realm of IP addresses and ports for most of their decision making. Enter the Application Firewall, or Web Application Firewall. I'll admit that I have very little experience with this technology; however, I do know how to not use it in your environment. PCI requirement 6.6 states that you should secure your web applications either through Application Code Reviews or Application Firewalls. (https://www.pcisecuritystandards.org/pdfs/infosupp_6_6_applicationfirewalls_codereviews.pdf) It should be both, not either/or, because you have to assume that the WAF will fail or that code review will fail.

The next device is the application (DNS, email, web, etc.) server. If you're assuming that the firewall and WAF will fail, where should you place the server? It should be placed in the external DMZ, not inside your internal network "because it's got a WAF in front of it." We'll also take an orthogonal turn in our tour, starting from the bottom of the stack and working our way up. IT managers generally understand hardware failure better than security failures. Multiple power supplies and routes, storage redundancy, backups, DR plans etc. The "Availability" in the CIA triad.

Running on your metal is the OS. Failure here is also mostly of the "Availability" variety, but security/vulnerability patching starts to come into play at this layer. Everybody patches, but does everybody confirm that the patches deployed? This is where a good internal vulnerability/configuration scanning regimen becomes necessary.

As part of your standard build, you'll have a number of security and management applications that run on top of the OS: your HIDS, AV and inventory management agents. Plan on these agents failing to check in: what is your plan to detect when an AV agent fails to check in or update properly? Is your inventory management agent adequately patched and secured? While you're considering security failure, consider pre-deploying incident-response tools on your servers and workstations, which will speed response time when things eventually go wrong.
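Detecting a silent agent can be as simple as comparing last check-in times against a threshold. This is a minimal sketch under stated assumptions: the host names, timestamps, and the 24-hour default are invented, and in practice the `last_seen` data would come from your AV or inventory console.

```python
from datetime import datetime, timedelta

def stale_agents(last_seen, now, max_age=timedelta(hours=24)):
    """Return hosts whose agent has not checked in within max_age, sorted by name."""
    return sorted(host for host, seen in last_seen.items() if now - seen > max_age)

# Hypothetical check-in times.
now = datetime(2013, 11, 30, 12, 0)
last_seen = {
    "web01": datetime(2013, 11, 30, 11, 0),   # one hour ago
    "mail01": datetime(2013, 11, 29, 13, 0),  # 23 hours ago
    "db01": datetime(2013, 11, 27, 9, 0),     # three days ago
}
print(stale_agents(last_seen, now))
```

Running something like this on a schedule turns "the agent failed quietly" into an alert instead of a discovery made during an incident.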

Next is the actual application. How will you mitigate the amount of damage the application process can do when it is usurped by an attacker? Chroot jail is often suggested, but is there any value to jailing the account running BIND if the server's sole purpose is DNS? Consider instead questions like: does httpd really need write access to webroot? Controls like SELinux or Tripwire come into play here; they're painful, but can mean the difference between a near-miss and the need to disclose a data compromise.

Assuming that the application server will be compromised also raises the need to send logs off of the server. This can help reduce the load on the server since network traffic is cheaper than disk writes. Having logs that are collected and timestamped centrally is a boon to any future investigation. It also allows better monitoring, and you can more easily apply any indicators found during an investigation across your entire environment.

Now, switching directions again and heading to the next layer, the internal firewall. These days the internal firewall separates two hostile networks from one another. The rules have to enforce a policy that protects the servers from the internal systems, as well as the internal systems from the servers since either is just as likely to be compromised these days (some may argue that internal workstations, etc are more likely to fall.)

Somewhere you're going to have a database. It will likely contain stuff that someone will want to steal or modify. It is critical to limit the privileges on the accounts that interact with it. Does PHP really need read access to the password or credit card column? Or can it get by with just being able to write? It can be painful to work through all of the cases to get the requirements correct, but you'll be glad you did when you watch the "SELECT *" requests in your Apache access logs and you know they failed.

Workstations and other devices require a similar treatment. How much access do you need to do your job? Do you need data on the system, or can you interact with remote servers? Can you get away with application white-listing in your environment? I'm purposefully vague here because there are so many variables, however you have to ask how failures will impact your solution, and what you can do to limit the impact of those failures.

Despite all of these measures, eventually everything will fail. Don't feel glum, that's job security.

Learn from failure: instrument everything, and log everything. Disk is cheap. Netflow from your routers, logs from your firewalls, alerts from your IDS and AV, syslogs from your servers will give you a lot of clues when you have to figure out what went wrong. I strongly suggest full packet capture in front of your key servers. Use your WAF or load balancer to terminate the SSL session so you can inspect what's going into your web servers. Put monitors in front of your database servers too. Store as much as you can, use a circular queue and keep 2 weeks, 2 days, and have an easy way to freeze the files when you think you have an incident. It's a lot easier to create a system that can store a lot of files than it is to create a system that can recover packets from the past.
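The circular queue with a freeze option can be sketched as a pure selection function: decide which capture files have aged out, but never select one that has been frozen for an investigation. The file names, timestamps, and two-week retention below are illustrative assumptions, and the function only picks candidates; it deletes nothing itself.

```python
def files_to_delete(captures, now, keep_seconds, frozen=frozenset()):
    """captures maps file name -> creation time (epoch seconds).

    Returns the names older than keep_seconds that are not frozen, sorted.
    """
    return sorted(name for name, created in captures.items()
                  if now - created > keep_seconds and name not in frozen)

TWO_WEEKS = 14 * 24 * 3600

# Hypothetical capture files and timestamps.
captures = {"a.pcap": 100_000, "b.pcap": 500_000, "c.pcap": 1_500_000}
now = 2_000_000

print(files_to_delete(captures, now, TWO_WEEKS))
print(files_to_delete(captures, now, TWO_WEEKS, frozen={"b.pcap"}))
```

Keeping the freeze list separate from the rotation logic means an analyst can protect evidence with one small change, without touching the capture pipeline.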

I know we sound negative all the time, pointing out why things won't work. That shouldn't be taken as an argument to not do something (e.g. AV won't protect you from X, so don't bother); instead, it should be a reminder that you need to plan for what you do when it fails. Consider the external firewall: it doesn't block most of the attacks that occur these days, but you wouldn't want to not have one. Planning for failure is a valuable habit.


Apple not updating OS X Mountain Lion?

Larry Seltzer over at ZDNet has noticed that since the release of OS X Mavericks, Apple has stopped updating OS X Mountain Lion. Although Apple is not forthcoming with the reasons for this, it appears this is a departure from past behavior.

-- Rick Wanner MSISE - rwanner at isc dot sans dot edu - http://namedeplume.blogspot.com/ - Twitter:namedeplume (Protected)


Tales of Password Reuse

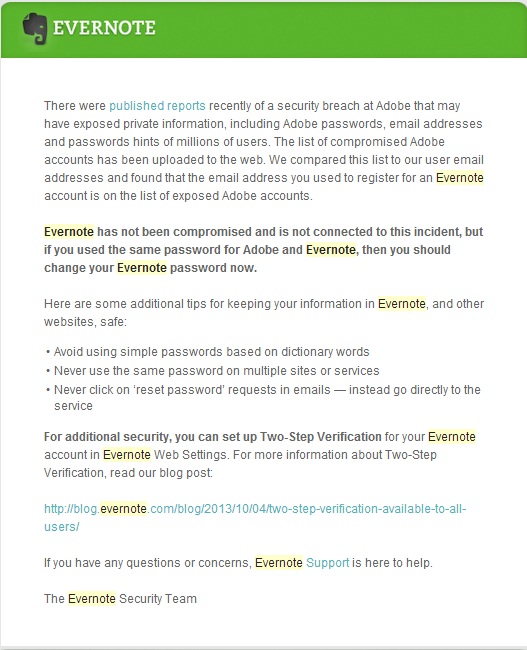

As a security practitioner I try really hard to drink the Kool-Aid, in other words practice what I preach. I have been a strong advocate, for well over a decade, of avoiding password reuse. There is one concession I personally made to password reuse. For years I used one "throwaway" password for services where I didn't care about the account. You know, those annoying sites that make you sign up just to access some mundane capability. In my case, my throwaway password is still a high quality password, but it is used on literally dozens of sites where there is no data of value, like Adobe. After the Adobe breach I changed my throwaway password on as many sites as I could remember using it on, and developed a better methodology for passwords on these sites (i.e. no more reuse).

Apparently I missed one. Yesterday I got an email from Evernote telling me that I had used the same password at Evernote that I had used at Adobe. The Evernote account probably got my throwaway password before I realized the value of the Evernote service. I now use Evernote nearly every day from my mobile devices, where I don't get prompted for the credentials, but never log into it over the web, so I didn't remember what the password was set to.

Needless to say I quickly changed my Evernote password and enabled Evernote's two-step authentication.

Shortly afterward an ISC reader forwarded an article from The Register about a brute force authentication attack against GitHub. While there aren't a lot of technical details in the article, this attack is interesting because it is a relatively slow attack from over 40,000 IP addresses, obviously designed to reduce the likelihood of any anti-brute-forcing controls kicking in.

"These addresses were used to slowly brute force weak passwords or passwords used on multiple sites. We are working on additional rate-limiting measures to address this". This suggests that it was not your typical brute force employing obvious userids and incredibly inane passwords, but a targeted attack against password reuse.

The article also goes on to lament: "It strikes us that GitHub's recent bout of probing may stem from crackers using the 38 million user details that were sucked out of Adobe recently to check for duplicate logins on other sites."
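A slow attack spread over tens of thousands of addresses slips under per-IP rate limits, so one possible countermeasure is to count failures per account across all sources instead. The sketch below is not GitHub's method, only an illustration; the function name, the threshold, and the sample log entries are assumptions.

```python
from collections import defaultdict

def distributed_failures(failed_logins, min_sources=3):
    """failed_logins is an iterable of (account, source_ip) pairs.

    Returns accounts that failed from at least min_sources distinct IPs,
    mapped to the number of distinct sources seen.
    """
    sources = defaultdict(set)
    for account, ip in failed_logins:
        sources[account].add(ip)
    return {acct: len(ips) for acct, ips in sources.items()
            if len(ips) >= min_sources}

# Made-up failures: "alice" is probed from three addresses,
# "bob" mistypes his password twice from one.
failed = [
    ("alice", "192.0.2.1"), ("alice", "192.0.2.2"), ("alice", "198.51.100.3"),
    ("bob", "192.0.2.1"), ("bob", "192.0.2.1"),
]
print(distributed_failures(failed))
```

Each source here stays below any sensible per-IP limit, yet the per-account view makes the pattern stand out.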

Guess I will be looking at all my passwords again, including the ones used by my mobile devices!
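For anyone doing the same review, a quick way to find the stragglers is to group accounts by password. A minimal sketch, assuming your passwords can be exported into a dictionary of site to password; the sample entries are invented, and hashing is used only so the grouping keys are not the passwords themselves.

```python
import hashlib
from collections import defaultdict

def reused_passwords(vault):
    """vault maps site -> password. Returns sorted groups of sites sharing a password."""
    by_digest = defaultdict(list)
    for site, password in vault.items():
        digest = hashlib.sha256(password.encode("utf-8")).hexdigest()
        by_digest[digest].append(site)
    return sorted(sorted(sites) for sites in by_digest.values() if len(sites) > 1)

# Invented example data.
vault = {
    "adobe": "Thr0waway!",
    "evernote": "Thr0waway!",
    "bank": "a-unique-passphrase",
}
print(reused_passwords(vault))
```

Any group it prints is a set of accounts that fall together if one of those sites is breached.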

-- Rick Wanner - rwanner at isc dot sans dot edu - http://namedeplume.blogspot.com/ - Twitter:namedeplume (Protected)


Port 0 DDOS

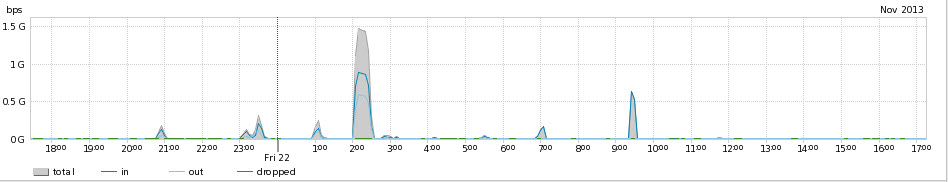

Following on the stories of amplification DDOS attacks using Chargen, and stories of "booters" via Brian Krebs, I am watching with interest the increase in port 0 amplification DDOS attacks.

Typically these are relatively short duration, 15 to 30 minute, attacks aimed at a residential IP address and my speculation is that these are targeted at "booting" participants in RPG games. On the networks I have access to these are usually in the 300 Mbps to 2.0 Gbps range. The volume would most certainly be very debilitating for the target, and sometimes their neighbors, but for the most part doesn't cause overall problems for the network. The sources are very diverse.

Unfortunately I do not have an ability to get packets of any of these attacks, but I am questioning whether this traffic is actually destined for port 0 or if it is actually fragmentation attacks that are being interpreted as source port 0 traffic.

Jim Macleod at lovemytool.com does an excellent job of describing what I am suspecting.
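The suspicion is easy to illustrate: only the first fragment of an IP datagram carries the TCP or UDP header, so a flow exporter looking at a later fragment has no port fields to read and may report 0. The sketch below checks the fragment offset in an IPv4 header; `is_non_first_fragment` and the hand-built headers are hypothetical, and how a given router actually reports such packets is an assumption to verify.

```python
import struct

def is_non_first_fragment(ip_header: bytes) -> bool:
    """True if the IPv4 fragment offset is non-zero (no L4 header follows)."""
    (flags_frag,) = struct.unpack("!H", ip_header[6:8])
    return (flags_frag & 0x1FFF) != 0  # low 13 bits hold the offset

def build_header(flags_frag: int) -> bytes:
    """Build a minimal 20-byte IPv4/UDP header with the given flags+offset field."""
    return struct.pack("!BBHHHBBH4s4s", 0x45, 0, 1500, 1, flags_frag,
                       64, 17, 0, bytes(4), bytes(4))

first = build_header(0x2000)  # More Fragments set, offset 0
later = build_header(0x00B9)  # offset 185 (in 8-byte units), last fragment
print(is_non_first_fragment(first), is_non_first_fragment(later))
```

If the "port 0" flows correlate with packets where this check is true, the traffic is more likely fragmented amplification responses than anything genuinely addressed to port 0.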

If anyone has packets available from one of these attacks, I would love to review them.

-- Rick Wanner MSISE - rwanner at isc dot sans dot edu - http://namedeplume.blogspot.com/ - Twitter:namedeplume (Protected)


Microsoft Azure offline

We are receiving reports of an Azure outage. This is affecting Microsoft DNS, Xbox and other services. Thanks to Nick and Steve for reporting the outage. More information is available here:

http://www.theregister.co.uk/2013/11/21/azure_blips_offline_again/


Are large scale Man in The Middle attacks underway?

Renesys is reporting two separate incidents where they observed traffic for 1500 IP blocks being diverted for extended periods of time. They observed the traffic redirection for more than 2 months over the last year. Does it seem unusual for internet traffic between Ashburn Virginia (63.218.44.78) and Washington DC (63.234.113.110) to go through Russia to Belarus? That is exactly what they observed. Once traffic flows through your routers there are countless opportunities to capture and modify the traffic with classic MiTM attacks. In my humble opinion we should put very little stock in the safety of SSL traffic as it flows through them. Attacks such as CRIME, padding oracle attacks, BEAST and others have shown SSL to be untrustworthy in circumstances such as this.

Advertising false BGP routes to affect the flow of traffic isn't new. You may remember when Pakistan "accidentally" took down YouTube for a small portion of the internet when they attempted to blackhole the website within their country. (Maybe they knew the "twerking" fad was coming.) But this is an excellent article that documents two cases where it has happened for extended periods of time.
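One reason a false announcement can pull traffic so effectively is that routers forward on the longest matching prefix, so a more specific route wins over the legitimate, broader one. The sketch below shows only that selection rule; the prefixes are documentation ranges, the labels are invented, and it makes no claim about the exact mechanism used in the incidents Renesys describes.

```python
import ipaddress

def best_route(routes, destination):
    """routes maps prefix -> label. Returns (longest matching prefix, label) or None."""
    dest = ipaddress.ip_address(destination)
    matches = [(ipaddress.ip_network(prefix), label)
               for prefix, label in routes.items()
               if dest in ipaddress.ip_network(prefix)]
    if not matches:
        return None
    net, label = max(matches, key=lambda item: item[0].prefixlen)
    return str(net), label

# Hypothetical routing table: the /25 is the bogus, more specific announcement.
routes = {
    "203.0.113.0/24": "legitimate origin",
    "203.0.113.0/25": "hijacker",
}
print(best_route(routes, "203.0.113.10"))
print(best_route(routes, "203.0.113.200"))
```

Addresses covered by the more specific prefix follow the bogus route while the rest of the block is unaffected, which is part of why such diversions can go unnoticed.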

http://www.renesys.com/2013/11/mitm-internet-hijacking/

Shameless self promotion:

Build a custom penetration testing backdoor that evades antivirus! Write your own SQL Injection, Password attack tools and more. Want to code your own tools in Python? Check out SEC573 Python for Penetration Testers. I am teaching it in Reston VA March 17th! Click HERE for more information.

Follow me on twitter? @MarkBaggett


"In the end it is all PEEKS and POKES."

At SANS Hackfest Penetration Testing summit I had the pleasure of reminiscing with Jedi Master Ed Skoudis about assembly language on our old Commodore 64s. Then Ed made one of his typical profound statements. He said, "In the end, it is all peeks and pokes." On the Commodore 64 the PEEK command was used to read from memory. The POKE command was used to write a value to memory. Ultimately that is all we need to be able to control any process on any computer.
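For readers who never owned a Commodore 64, the two commands boil down to indexed reads and writes on a flat array of bytes. This toy model uses a Python `bytearray` as the 64 KB address space; it touches no real memory. Address 53280 is the border color register on a real C64, but here it is just an index.

```python
memory = bytearray(65536)  # toy 64 KB address space, all zeros

def poke(address, value):
    """Write one byte to the toy memory."""
    if not 0 <= value <= 255:
        raise ValueError("value must fit in one byte")
    memory[address] = value

def peek(address):
    """Read one byte from the toy memory."""
    return memory[address]

poke(53280, 7)
print(peek(53280))
```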

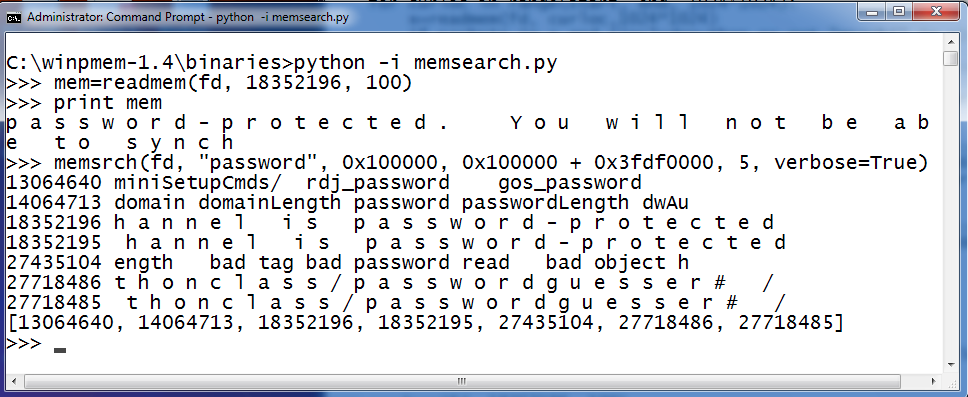

Doing a PEEK in live memory is easy with winpmem. I've already shown you how you can use Python and winpmem to read live memory. If you missed the article click here to take a peek. (Pun intended) Today I'm going to show you how to poke. Not a Facebook poke; a Commodore 64 poke. Which is, of course, much much cooler. You see, winpmem can also write to anywhere in memory that you choose.

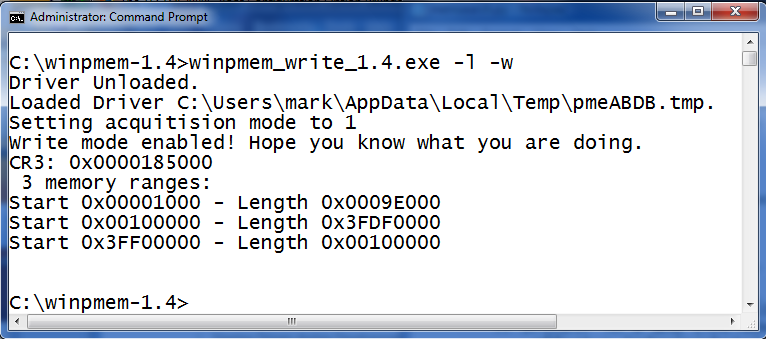

Winpmem has two different device drivers. One is used for read only access to memory. The read only device driver is installed by default when you use the "-L" option. This is the device driver of choice for capturing forensics images. The other driver is used for read and write access to memory. To install the write driver you run "winpmem_write_1.4.exe" and specify the "-L" and the "-W" option.

Winpmem will indicate that write mode is enabled and gives you a friendly warning by saying, "Hope you know what your doing." Well, ignorance has never stopped me. But, it is wise to note that you should save what you are doing before experimenting with this. You are using a device driver (that is running in Kernel memory) and you can write to anywhere you want to in memory. That includes KERNEL memory space. You can very easily render your machine unusable, blue screen your box or worse.

In yesterday's diary I showed you how you could read memory by calling two Python functions. Writing to memory also only requires two functions. First you call win32file.SetFilePointer() to set the address you want to update. Then you call win32file.WriteFile() to write data. To make the process even simpler I'll add a writemem() function to the memsearch.py script I wrote yesterday. I add the following lines to yesterday's script: (Email me if you want a copy of the script or grab a copy from yesterday's diary)

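    def writemem(fd, location, data):
        # Move the device handle's file pointer to the target address
        # (third argument 0 = seek from the beginning).
        win32file.SetFilePointer(fd, location, 0)
        # Write the raw bytes at that position.
        win32file.WriteFile(fd, data)
        return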

Then I start the script and a Python interactive shell by running "python -i memsearch.py". Then I can use the memsrch() function to search for interesting data to update. In this case I am searching for the string "Command Prompt - python" in hopes of updating the title bar for my command prompt. It finds the string at several addresses in memory. Then I can call writemem() and update the string. When you provide a string to write to memory keep in mind that strings in memory may be in ASCII, UTF-16LE or UTF-16BE format. In this example I wrote "P0wned Shell" in UTF-16LE format to the memory address 40345926. But it didn't update my command prompt title bar. Darn. Windows has several memory locations that contain that string. So I use readmem() to check the memory address to see if it changed. I can see that it did! The memory address contains the updated string.
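The encoding matters because the same text produces very different bytes, and a search or write in the wrong encoding simply won't line up with what is in memory. A small sketch using only the standard `str.encode()` method; the helper name `encodings` is made up for this example.

```python
def encodings(text):
    """Return the byte representation of text in the three encodings discussed."""
    return {codec: text.encode(codec)
            for codec in ("ascii", "utf-16-le", "utf-16-be")}

enc = encodings("P0wned Shell")
for codec, data in enc.items():
    # Show the total length and the first six bytes in hex.
    print(f"{codec:10} {len(data):2d} bytes  {data[:6].hex(' ')}")
```

Note that the UTF-16 forms are twice as long as the ASCII form. A write only replaces as many bytes as you supply, so the rest of a longer original string stays in place, and a replacement longer than the original would overwrite whatever follows it.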


One of the other addresses that I didn't change must contain our command prompt's title bar. memsrch() returns a list of all the addresses that contain the search string, so I could do something like this to change every instance of that string between two given addresses:

    for addr in memsrch(fd, "Command Prompt - python", 0x100000, 0x3fef0000, 1000):
        writemem(fd, addr, "p0wned shell".encode("utf-16le"))

But as I said, be careful. I've spent as much time rebooting my machine as I have actually writing code. A better approach is to import Volatility into your script so you can parse memory intelligently and update memory data structures rather than just guessing.

In their excellent paper titled Anti-Forensics resilient memory acquisition, Johannes Stuttgen and Michael Cohen discuss how making small changes to magic values in memory data structures can defeat tools like Volatility. If you have a chance, give the paper a read. It is here.

If that sounds interesting to you and you want to know more about Python programming check out SEC573 Python for Penetration Testers. I am teaching it in Reston VA March 17th! Click HERE for more information.

Follow me on twitter? @MarkBaggett


Searching live memory on a running machine with winpmem

Winpmem may appear to be a simple memory acquisition tool, but it is really much more. In yesterday's diary I gave a brief introduction to the tool and showed how you can use it to create a raw memory image. If you didn't see that article, check it out for the background needed for today's installment. You can read it here.

One of my favorite parts of Winpmem is that it has the ability to analyze live memory on a running computer. Rather than dumping the memory and analyzing it in two separate steps, you can search memory on a running system. Of course this will affect other forensic artifacts, so you only want to use this on backup copies of your evidence. But this is also useful outside of forensics. There are all kinds of useful ways to use this tool. Searching memory is useful to security researchers. You can search memory for strings that are in common pieces of malware. Do software vendors tell you that they encrypt sensitive data in memory? You can use this tool to see if that is really true. So how do you do it?

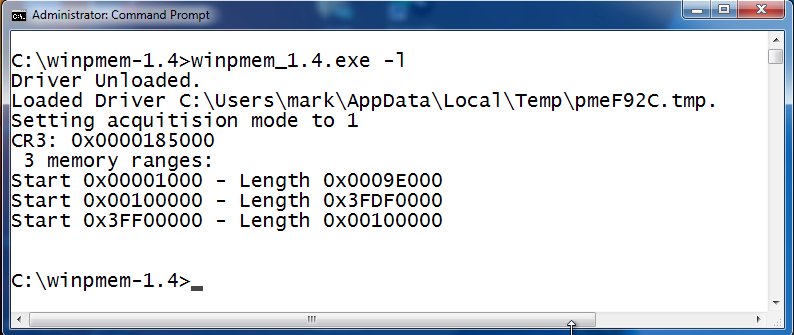

Winpmem allows you to install the memory access device driver and then use it in your own Python scripts. To install the device driver run winpmem with the -L option like so:

Once the driver is installed it will create a \\.\pmem device object that you can open with Python. You will notice that it also reports 3 memory ranges. These are the ranges of physical memory addresses where the operating system stores data. In this case the first memory range starts at 0x1000 and has a length of 0x9e000, so it ends at memory address 0x9f000. The second range starts at 0x100000 and has a length of 0x3fdf0000. This leaves a conspicuous gap between the ranges. These gaps are used by the processor and other chipsets on your computer and aren't directly addressable by the Windows memory manager. Memory acquisition tools will only dump those ranges of memory used by the OS. This could lead to some interesting research where malware hides in those memory blind spots. Winpmem also has the ability to read those reserved areas of memory, but doing so may lock up your machine.
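The range arithmetic is easy to check by hand, but a short sketch makes the gap explicit. This is plain Python 3 using only the two (start, length) pairs quoted above; the third range is not given in the text, so it is left out, and the helper names are made up for this example.

```python
# (start, length) pairs for the first two ranges quoted in the text.
ranges = [(0x1000, 0x9e000), (0x100000, 0x3fdf0000)]

def range_end(start, length):
    """Return the first address past a range (start + length)."""
    return start + length

def gaps(ranges):
    """Return (gap_start, gap_end) pairs between consecutive ranges."""
    ordered = sorted(ranges)
    found = []
    for (start, length), (next_start, _) in zip(ordered, ordered[1:]):
        end = range_end(start, length)
        if next_start > end:
            found.append((end, next_start))
    return found

for start, length in ranges:
    print(hex(start), "-", hex(range_end(start, length)))
print([(hex(a), hex(b)) for a, b in gaps(ranges)])
```

The second range ends at 0x3fef0000, and the gap between the two ranges runs from 0x9f000 to 0x100000.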

Reading memory requires a little bit of Python code, but winpmem does the hard part for you. You can read memory with only 4 lines of Python code. All we have to do is import winpmem and create a handle to the device with win32file.CreateFile() (the "fd" variable in the program below). Then use win32file.SetFilePointer() to point to the address you want to read. Finally, call win32file.ReadFile() to read the bytes at that memory location. That's it! So I threw together a quick script to allow me to search memory. I called this script "memsearch.py" when I saved it to my computer.

    # memsearch.py (Python 2 syntax: note the print statements)
    from winpmem import *

    def readmem(fd, start, size):
        # Seek to a physical address and return `size` bytes from it.
        win32file.SetFilePointer(fd, start, 0)
        x, data = win32file.ReadFile(fd, size)
        return data

    def memsrch(fd, srchstr, start, end, numtofind=1, margins=20, verbose=False, includepython=False):
        srchres = []
        # Walk the range in 1 MB chunks; only the first hit per chunk and encoding is recorded.
        for curloc in range(start, end, 1024*1024):
            x = readmem(fd, curloc, 1024*1024)
            # ASCII match (chunks containing this script's own call are skipped unless includepython is set)
            if srchstr in x and (includepython or not "msrch(" in x):
                offset = x.index(srchstr)
                if verbose: print curloc+offset, str(x[offset-margins:offset+len(srchstr)+margins])
                srchres.append(curloc+x.index(srchstr))
            # UTF-16LE match
            if srchstr.encode("utf-16le") in x and (includepython or not "msrch(".encode("utf-16le") in x):
                offset = x.index(srchstr.encode("utf-16le"))
                if verbose: print curloc+offset, str(x[offset-margins:offset+(len(srchstr)*2)+margins])
                srchres.append(curloc+x.index(srchstr.encode("utf-16le")))
            # UTF-16BE match
            if srchstr.encode("utf-16be") in x and (includepython or not "msrch(".encode("utf-16be") in x):
                offset = x.index(srchstr.encode("utf-16be"))
                if verbose: print curloc+offset, str(x[offset-margins:offset+(len(srchstr)*2)+margins])
                srchres.append(curloc+x.index(srchstr.encode("utf-16be")))
            if len(srchres) >= numtofind:
                break
        return srchres

    # Open the device created by the winpmem driver for reading and writing.
    fd = win32file.CreateFile(r"\\.\pmem",
                              win32file.GENERIC_READ | win32file.GENERIC_WRITE,
                              win32file.FILE_SHARE_READ | win32file.FILE_SHARE_WRITE,
                              None, win32file.OPEN_EXISTING,
                              win32file.FILE_ATTRIBUTE_NORMAL, None)

After writing the program I run python with the "-i" option so that I am dropped into an interactive shell with the new modules and variables already defined. I'll get an interactive Python prompt that I can use to call the memsrch() and readmem() functions. Because it is in a shell I can run multiple searches with different options until I find what I am looking for. Here is a short example calling each function once. In this case I read 100 bytes of memory starting at address 18352196. Then I search for 5 occurrences of the word "password" beginning at address 0x100000.

readmem() is used to read data from memory. The readmem() function takes 3 parameters. The first is fd. This is the file handle that points to the \\.\pmem device created by the winpmem device driver. The 2nd parameter is the address to start reading from and the 3rd parameter is how much data to read.

memsrch() can be used to search memory for the string you specify. The memsrch() function takes several parameters. The first is again fd. The 2nd parameter is the search term to find in memory. The 3rd parameter is the address at which to begin searching and the 4th parameter is the ending address. The rest of the parameters are optional. The 5th parameter (numtofind) is the number of matches to find in memory. The 6th parameter (margins) is the number of bytes of context shown on either side of a match. The 7th parameter (verbose) turns on verbose searching; if verbose is True the matching strings are printed to the screen. The last argument is "includepython". If includepython is set to True, the Python script is allowed to find itself as it searches through memory for matches.
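If you want to experiment with the search logic without loading the driver, the same idea can be sketched against an ordinary in-memory buffer. This is a Python 3 sketch, not the diary's script: io.BytesIO stands in for the \\.\pmem device, and find_all() and search_buffer() are hypothetical helper names. Unlike memsrch(), it records every match in a chunk rather than only the first.

```python
import io

CHUNK = 1024 * 1024  # same 1 MB step that memsrch() uses

def find_all(haystack, needle):
    """Return every offset of needle in haystack, not just the first."""
    offsets = []
    pos = haystack.find(needle)
    while pos != -1:
        offsets.append(pos)
        pos = haystack.find(needle, pos + 1)
    return offsets

def search_buffer(stream, text, start, end):
    """Search stream[start:end] for text as ASCII, UTF-16LE and UTF-16BE."""
    needles = [text.encode(enc) for enc in ("ascii", "utf-16le", "utf-16be")]
    hits = set()
    for base in range(start, end, CHUNK):
        stream.seek(base)
        chunk = stream.read(min(CHUNK, end - base))
        for needle in needles:
            hits.update(base + off for off in find_all(chunk, needle))
    return sorted(hits)

# Stand-in for physical memory: a small buffer with two planted strings.
fake_memory = io.BytesIO(
    b"\xff" * 100 + b"password" + b"\xff" * 50 + "password".encode("utf-16le")
)
print(search_buffer(fake_memory, "password", 0, 1024))
```

Like the original, this sketch will miss a string that straddles a chunk boundary; overlapping the reads by the length of the longest needle would be one way to address that.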

Keep in mind that you are searching physical memory. This is much different than virtual OS memory. Things may move around and you may find things in places where you do not expect them. To get a better understanding of how memory is being used by the OS, read this excellent paper that accompanies the tool.

http://dfrws.org/2013/proceedings/DFRWS2013-13.pdf

Still not convinced of winpmem's awesomeness? There is more to it. I'll look at more tomorrow.

Do you want to learn how to do all sorts of cool stuff with Python? Check out SEC573 Python for Penetration Testers! I am teaching it in Reston VA March 17th! Click HERE for more information.

Follow me on twitter? @MarkBaggett


vBulletin.com Compromise - Possible 0-day

Earlier today, vBulletin.com was compromised. The group conducting the attack claims to have a 0-day available that enabled the attacker to execute shell commands on the server. The attacker posted screen shots as proof and offered the exploit for sale for $7,000.

If you run vBulletin:

- carefully watch your logs.

- ensure that you apply all hardening steps possible (anybody got a good pointer to a hardening guide?)

- keep backups of your database and other configuration information

- if you can: log all port 80 traffic to your bulletin board.

If you had an account on vBulletin.com, make sure you are not reusing the password. The attackers claimed to have breached macrumors.com as well. According to macrumors, that exploit was due to a shared password. There is a chance that the 0-day exploit is fake and shared passwords are the root cause.

Any other ideas?

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Winpmem - Mild mannered memory acquisition tool??

There should be little argument that with today's threats you should always acquire a memory image when dealing with any type of malware. Modern desktops can have 16 gigabytes of RAM or more filled with evidence that is usually crucial to understanding what was happening on that machine. Failure to acquire that memory will make analyzing the other forensic artifacts difficult or in some cases impossible. Chad Tilbury (@chadtilbury) recently told me about a new memory acquisition tool that I want to share with the ISC readers. It is called winpmem and was written by Michael Cohen. It is free and it is available for download here. Here is a look at it.

After downloading and expanding the zip file you will see the following components:


You can see there are two executables. They are named winpmem_1.4.exe and winpmem_write_1.4.exe. I'll come back to winpmem_write_1.4.exe later. There is also a "binaries" directory that includes a couple of device drivers and a Python script. That sounds like fun! I'll come back to that one later as well. For now, let's talk about winpmem_1.4.exe. If you run it without any parameters you will get a help screen. It looks like this:


If you want to use winpmem to acquire a raw memory image, all you have to do is provide it with a filename. A copy of all the bytes in memory will be saved to that file. For example:

c:\> winpmem_1.4.exe memory.dmp

This will create a raw memory image named "memory.dmp" suitable for analysis with Volatility, Mandiant's Redline and others. The tool can also create a crash dump that is suitable for analysis with Microsoft WinDBG. To do so you just add the "-d" option to your command line like this:

c:\> winpmem_1.4.exe -d crashdump.dmp

Now, some of you may be thinking, "So what! I can already dump memory with dumpit.exe, Win32dd.exe, win64dd.exe and others." Well, you are right. But if you have malware that is looking for those tools, now you have another option. While winpmem might look like a mild mannered memory acquisition tool, it actually has super powers. The BEST part of winpmem (IMHO) is in those components that I conveniently glossed over. I'll take a look at winpmem_write_1.4.exe and, better yet, that Python script in my next journal entry.

Interested in Python? Check out SANS SEC573, Python for Penetration Testers! I am teaching it in Reston VA March 17th!

Click HERE for more information.

Follow me on twitter? @MarkBaggett


Updated dumpdns.pl

I exchanged some e-mail today with a reader, Curtis, and as a result have fixed a typo and added some error checking to handle a problem that he was seeing. I didn't see it myself; I suspect it has to do with different installed versions of some of the Perl packages, so I'll continue to look into the problem and will probably release another update in the next few days. Version 1.5.1 can be found here: http://handlers.sans.edu/jclausing/ipv6/dumpdns.pl

---------------

Jim Clausing, GIAC GSE #26

jclausing --at-- isc [dot] sans (dot) edu


Am I Sending Traffic to a "Sinkhole"?

It has become common practice to set up "sinkholes" to capture traffic sent by infected hosts to command and control servers. These sinkholes are usually established after a malicious domain name has been discovered and registrars have agreed to redirect the respective NS records to a specific name server configured by the entity operating the sinkhole. More recently, for example, Microsoft obtained court orders to take over various domain names associated with popular malware.

Once a sinkhole is established, its operator can collect the IP addresses of hosts connecting to it. In many cases, a host is only considered "infected" if it transmits a request that indicates it is infected with a specific malware type. A simple DNS lookup or a bare connection to the server operating on the sinkhole should not suffice; counting such a host as infected would be a false positive.

The data collected by sinkholes is typically used for research purposes, and to notify infected users. How well this notification works depends largely on the collaboration between the sinkhole operator and your ISP.

On the other hand, you may want to proactively watch for traffic directed at sinkholes. However, there is no authoritative list of sinkholes. Sinkhole operators try not to advertise the list in order to prevent botnet operators from coding their bots to avoid sinkholes, as well as to avoid revenge DoS attacks against the networks hosting sinkholes. Some ISPs will also operate their own Sinkholes and not direct traffic to "global" sinkholes to ease and accelerate customer notification.
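If you do keep your own list of sinkhole networks, checking logged destination addresses against it takes only a few lines. The sketch below is Python 3 and entirely illustrative: the networks are RFC 5737 documentation ranges used as placeholders, not real sinkholes, and the function names are made up for this example.

```python
import ipaddress

# Placeholder networks from the RFC 5737 documentation ranges.
# These are NOT real sinkholes; substitute a list you maintain yourself.
SINKHOLE_NETS = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("198.51.100.0/24"),
]

def is_sinkhole(address, nets=SINKHOLE_NETS):
    """Return True if address falls inside any listed network."""
    ip = ipaddress.ip_address(address)
    return any(ip in net for net in nets)

def flag_sinkhole_traffic(destinations, nets=SINKHOLE_NETS):
    """Return the destination addresses that match the list, in order."""
    return [dst for dst in destinations if is_sinkhole(dst, nets)]

seen = ["10.5.1.12", "192.0.2.55", "203.0.113.9"]
print(flag_sinkhole_traffic(seen))
```

In practice the destinations would come from firewall or DNS logs, and a match only tells you that a host talked to a listed address, not that it is infected.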

And of course, you can always set up your own sinkhole, which is probably more effective than watching for traffic to existing sinkholes. See Guy's paper for details: http://www.sans.org/reading-room/whitepapers/dns/dns-sinkhole-33523

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Sagan as a Log Normalizer

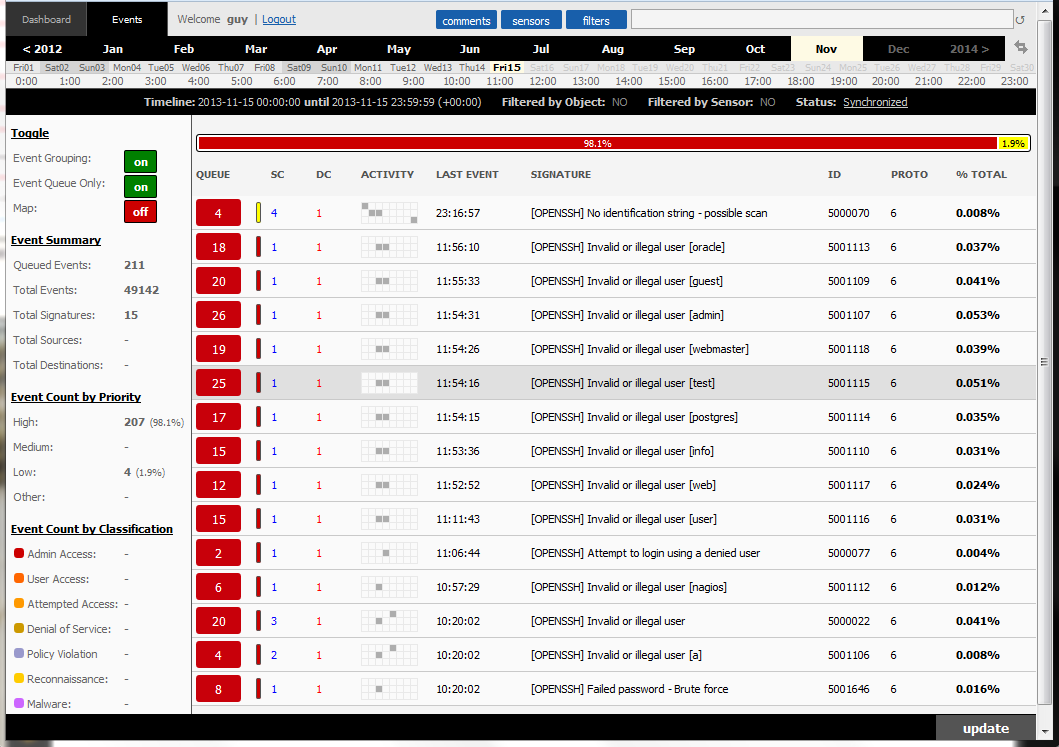

"Sagan is an open source (GNU/GPLv2) high performance, real-time log analysis & correlation engine that run under *nix operating systems (Linux/FreeBSD/ OpenBSD/etc)."[1]

Sagan is a log analysis engine whose rules have the same basic structure as Snort rules. Alerts can be written in the Unified2 file format and stored in a Snort IDS/IPS database using Barnyard2. This means the alerts can be read using Sguil, BASE or SQueRT, to name a few. It is easy to set up: you just need to forward the data via syslog to a system that has Sagan installed and connected via Barnyard2 to a database (e.g. Sguil). Just follow the Howto documentation to build and install it.

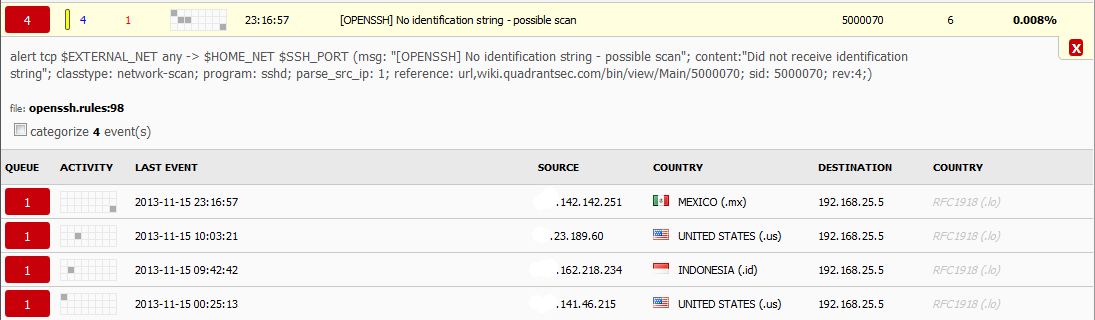

Here is an example of an external gateway monitoring various SSH account breaking attempts being logged by Sagan rules and the alerts sent to a Sguil database.

Expanding the first event "[OPENSSH] No identification string - possible scan", you can now view (in SQueRT) the rule that triggered these events and a summary of the events by time, source and target.

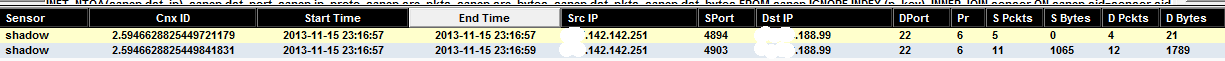

In order to further examine this activity, I need to log in to Sguil and search the sancp data for the IP of the first event (xxx.142.142.251) as a source address, to find out whether any other related activity has been recorded from this source. The sancp log shows that 2 separate connections were made to SSH, access to which is restricted to a number of addresses.

Using Sagan is another way of leveraging a Snort IDS database infrastructure to collect, correlate and monitor suspicious events via syslog. For additional information on Sagan, check the Sagan Wiki.

[1] http://sagan.quadrantsec.com/

[2] https://wiki.quadrantsec.com/twiki/bin/view/Main/SaganMain

[3] https://wiki.quadrantsec.com/twiki/bin/view/Main/SaganRuleReference

[4] https://wiki.quadrantsec.com/twiki/bin/view/Main/SaganInstall

[5] http://sagan.quadrantsec.com/rules/

[6] https://github.com/beave/sagan

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


The Security Impact of HTTP Caching Headers

Earlier this week, an update for MediaWiki fixed a bug in how it used caching headers [2]. The headers allowed authenticated content to be cached, which may lead to sessions being shared between users who use the same proxy server. I think this is a good reason to talk a bit about caching in web applications and why it is important for security.

First of all: if your application is HTTPS only, this may not apply to you. The browser does not typically cache HTTPS content, and proxies will not inspect it. However, HTTPS-inspecting proxies are available and common in some corporate environments, so this *may* apply to them, even though I hope they do not cache HTTPS content.

It is the goal of properly configured caching headers to avoid having personalized information stored in proxies. The server needs to include appropriate headers to indicate if the response may be cached.

Caching Related Response Headers

Cache-Control

This is probably the most important header when it comes to security. There are a number of options associated with this header. Most importantly, the page can be marked as "private" or "public". A proxy will not cache a page if it is marked as "private". Other options are sometimes used inappropriately. For example, the "no-cache" option just implies that the proxy should verify each time the page is requested whether the page is still valid, but it may still store the page. A better option to add is "no-store", which will prevent the request and response from being stored by the cache. The "no-transform" option may be important for mobile users. Some mobile providers will compress or alter content, in particular images, to save bandwidth when re-transmitting content over cellular networks. This could break digital signatures in some cases. "no-transform" will prevent that (but again, this doesn't matter for SSL; it only matters if you rely on transmitted digital signatures, for example to verify an image). The "max-age" option can be used to indicate how long a response can be cached. Setting it to "0" will prevent caching.

A "safe" Cache-Control header would be:

Cache-Control: private, no-cache, no-store, max-age=0

Expires

Modern browsers tend to rely less on the Expires header. However, it is best to stay consistent. An expiration time in the past, or just the value "0", will work to prevent caching.

ETag

The ETag will not prevent caching, but will indicate whether content changed. The ETag can be understood as a serial number that provides a more granular identification of stale content. In some cases the ETag is derived from information like file inode numbers that some administrators don't like to share. A nice way to come up with an ETag would be to just send a random number, or not to send it at all. I am not aware of a way to randomize the ETag.

Pragma

This is an older header, and has been replaced by the "Cache-Control" header. "Pragma: no-cache" is equivalent to "Cache-Control: no-cache".

Vary

The "Vary" header tells a cache which request header fields, in addition to the URL, determine the response. A cache indexes stored responses based on the content of the request, which consists not just of the URL requested but also of other headers, for example the User-Agent field. "Vary: User-Agent" tells the proxy that the content depends on the user agent, so a stored response may only be reused for requests that carry the same User-Agent value; if you deliver the same content independent of the user agent, leave User-Agent out of the Vary header. For our discussion, this doesn't really matter because we never want the request or response to be cached, so it is best to have no Vary header.

In summary, a safe set of HTTP response headers may look like:

Cache-Control: private, no-cache, no-store, max-age=0, no-transform

Pragma: no-cache

Expires: 0

The "Cache-Control" header is probably overdone in this example, but should cover various implementations.

A nice tool to test this is ratproxy, which will identify inconsistent cache headers [3]. For example, ratproxy will alert you if a "Set-Cookie" header is sent with a cacheable response.
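As a rough illustration of that kind of check, the sketch below holds the header set from the summary in a dictionary and flags a response that sets a cookie without "no-store" or "private". It is Python 3, it is not how ratproxy itself works, and the function names are invented for this example. It also assumes header names are spelled exactly as shown, whereas real HTTP header names are case-insensitive.

```python
# The "safe" header set from the summary above.
SAFE_HEADERS = {
    "Cache-Control": "private, no-cache, no-store, max-age=0, no-transform",
    "Pragma": "no-cache",
    "Expires": "0",
}

def cache_directives(headers):
    """Return the lower-cased Cache-Control directive names as a set."""
    value = headers.get("Cache-Control", "")
    return {part.split("=")[0].strip().lower()
            for part in value.split(",") if part.strip()}

def cookie_may_be_cached(headers):
    """True if Set-Cookie is present without no-store or private."""
    if "Set-Cookie" not in headers:
        return False
    directives = cache_directives(headers)
    return not ({"no-store", "private"} & directives)

risky = {"Set-Cookie": "sid=1", "Cache-Control": "public, max-age=600"}
safe = dict(SAFE_HEADERS, **{"Set-Cookie": "sid=1"})
print(cookie_may_be_cached(risky), cookie_may_be_cached(safe))
```

A check like this could run in a test suite against the responses of authenticated pages, which is where a caching mistake hurts most.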

Anything I missed? Any other suggestions for proper cache control?

References:

[1] http://www.ietf.org/rfc/rfc2616.txt

[2] https://bugzilla.wikimedia.org/show_bug.cgi?id=53032

[3] https://code.google.com/p/ratproxy/

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Google Drive Phishing

In the past we have seen malware being delivered via Google Docs. You would receive an email stating that a document had been shared and when you clicked the link bad things would start to happen. In recent weeks the same approach has increasingly been used to Phish. You would receive an email along these lines:

Hello,

We sent you an attachment about your booking using Google Drive

I have sent the attachment for you using Google Drive So Click the Google Drive link below to view the attachment..

<button>Google Drive</button>

Once the link is clicked you are sent through to a web site where you are presented with the following screen:

Clicking on any of these will ask you for a userid and password for that service. The link in the email should be easily recognised by people as obviously not being a Google link, but many still do not check this. If you are doing an awareness campaign or reminder, maybe include some info on recognising phishing links.
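For an awareness session, it can help to show what "checking the link" means mechanically: look at the host name, not at the text of the link. The following Python 3 sketch is only an illustration; the allow-list and function names are assumptions, and the example URLs use reserved example domains rather than anything from the actual phish.

```python
from urllib.parse import urlsplit

# Assumed allow-list for illustration; adjust to the services you expect.
TRUSTED_DOMAINS = ("google.com",)

def link_host(url):
    """Return the lower-cased host name of a URL, or '' if none."""
    return (urlsplit(url).hostname or "").lower()

def is_trusted_link(url, trusted=TRUSTED_DOMAINS):
    """True if the host is a trusted domain or a subdomain of one."""
    host = link_host(url)
    return any(host == d or host.endswith("." + d) for d in trusted)

for url in ("https://drive.google.com/file/d/abc",
            "http://google.com.example.net/drive/",
            "http://drive.google.com@example.net/"):
    print(url, is_trusted_link(url))
```

The second and third URLs are the classic tricks: a trusted name used as a subdomain of another domain, and a trusted name placed in the user-info part before the "@".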

Cheers

Mark


Setting up Honeypots

Most if not all of the handlers run honeypots, sinkholes, spam traps, etc. in various locations around the planet. As many of you are aware, they are a nice tool to see what is going on on the Internet at a specific time. Setting up a new server the other day, it was interesting to see how fast it was touched by evilness. Initially it wasn't even intended as a honeypot, but it soon turned into one when "interesting" traffic started turning up. Now of course mixing business (the server's original intended use) and pleasure (honeypot) isn't a good thing, so honeypot it is.

It was quite disheartening to see how fast evilness turned up:

- SSH brute force attacks port 22 < 2minutes

- SSH brute force attacks port 2222 < 4 hours

- Telnet - 8 Minutes

- Coldfusion checks ~ 30 minutes

- SQLi Check ~ 15 minutes

- Open Proxy check 3128 - 81 minutes

- Open Proxy Check 80 - 35 minutes

- Open proxy check 8080 - 48 minutes

Which got me thinking about a few things, and hence this post. There are two things I'm interested in. Firstly, when running honeypots, what do you use? There are some great resources and different tools, so what works for you? This one I just set up using the 404 project components from this site. I used Kippo for port 2222, and for the rest I used the actual product configured to bounce pretty much every request. It doesn't tell me exactly what they are doing, but it gives me a first indication, plus I ran out of time :-(

The second thing I'd like to know is, when you set up the honeypot for the first time, how long did it take to get a hit? On our site we have a survival time. It would be interesting to know what the survival time for SSH, FTP, telnet, proxies etc. is. So the next time you set up a honeypot, or if you still have the logs going back that far, take a look and share. For me, SSH with a default password took less than 2 minutes. What are your stats?

Cheers

Mark

(PS if you are going to set one up, make sure you fully understand what you are about to do. You are placing a deliberately vulnerable device on the internet. Depending on your location you may be held liable for stuff that happens (IANAL). If it gets compromised, make sure it is somewhere where it can't hurt you or others.)


Packet Challenge for the Hivemind: What's happening with this Ethernet header?

Earlier this week, a user submitted one of those "odd packets" we all like. The packet was acquired with tcpdump, without the "-x" or "-X" option, but still, tcpdump decided to dump the entire packet in hexadecimal. I have seen tcpdump do things like this before, and usually attributed it to "packet overload". If I have tcpdump write the same traffic to disk (using the -w option) and later read it back with -r, I don't see this questionable traffic.

But I never bothered to really look into it. So today, returning from the dentist and under the influence of Novocaine after crown prep, I decided there was no better thing to do than to play a bit with packets.

Here is the setup:

I am running tcpdump on my firewall. I have it listen on all interfaces. The exact command line:

sudo tcpdump -i any -nn -xx not ip and not ip6 and not arp

Now if I got this filter right, I should see no IPv4, no IPv6 and no ARP. At first, I got packets like this:

21:39:55.404619 Out 00:e0:4c:68:e0:7d ethertype Unknown (0x0003), length 344:
    0x0000: 0004 0001 0006 00e0 4c68 e07d 0000 0003
    0x0010: 4510 0148 0000 0000 8011 2e93 0a05 00fe
    0x0020: ffff ffff 0043 0044 0134 cb27 0201 0600
    0x0030: 1223 3456 0000 8000 0000 0000 0a05 004a
    0x0040: 0a05 00fe 0000 0000 000e f316 a4a6 0000
    0x0050: 0000 0000 0000 0000 0000 0000 0000 0000
    0x0060: 0000 0000 0000 0000 0000 0000 0000 0000
    (removed remainder: all "0").

Interestingly, the packet has an "off" ethernet header that looks like it got an additional two bytes, followed by what looks like a normal IPv4 header.

On a second attempt, using the same filter, I even got some packets that got interpreted as IPv4, even though my filter should exclude them:

21:44:01.919690 IP 10.128.0.11.56559 > 10.5.1.12.80: Flags [.], ack 421172865, win 403, length 0
    0x0000: 0000 0001 0006 8ab0 1e25 1fcb 0000 0800
    0x0010: 4500 0028 b78a 4000 4006 6daa 0a80 000b
    0x0020: 0a05 010c dcef 0050 69b5 295b 191a 9681
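One possible reading of the first 16 bytes, offered as a hypothesis rather than a confirmed answer: when capturing on the Linux "any" pseudo-interface, libpcap uses "cooked" (SLL) framing, a 16-byte pseudo-header, instead of a 14-byte Ethernet header. The Python 3 sketch below decodes the first line of each dump under that assumption; parse_sll() is a made-up helper name, not part of tcpdump or libpcap.

```python
import struct

# Linux cooked-capture (SLL) header: 16 bytes, network byte order.
#   packet type (2) | ARPHRD type (2) | address length (2)
#   | link-layer address (8, padded) | protocol (2)
SLL_FORMAT = "!HHH8sH"
PACKET_TYPES = {0: "to us", 1: "broadcast", 2: "multicast",
                3: "to someone else", 4: "sent by us"}

def parse_sll(hex_words):
    """Decode the first 16 bytes of a hex dump as an SLL header."""
    raw = bytes.fromhex(hex_words.replace(" ", ""))
    pkt_type, arphrd, addr_len, addr, proto = struct.unpack(SLL_FORMAT, raw[:16])
    return {
        "direction": PACKET_TYPES.get(pkt_type, "unknown"),
        "arphrd": arphrd,
        "address": ":".join("%02x" % b for b in addr[:addr_len]),
        "protocol": "0x%04x" % proto,
    }

first = parse_sll("0004 0001 0006 00e0 4c68 e07d 0000 0003")
second = parse_sll("0000 0001 0006 8ab0 1e25 1fcb 0000 0800")
print(first)
print(second)
```

Read this way, the first packet is an outgoing frame from 00:e0:4c:68:e0:7d with protocol value 0x0003, and the second is an incoming frame with protocol 0x0800 (IPv4). Why the filter let them through is still the open question.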

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Adobe, Google and other Patch Tuesday patches

Adobe

Adobe published two advisories today:

(Correction: APSB13-25 was released last month, and I have removed it from this diary. Instead, APSB13-27 was added below)

APSB13-26: Security Updates for Flash Player

This update affects the Windows, OS X and Linux versions of Adobe Flash Player 11.9 (11.2 for Linux), as well as Adobe AIR 3.9. The Flash Player vulnerability is assigned a priority of "1" on Windows and OS X, which indicates an exploit has been sighted in the wild, and Adobe recommends patching "as soon as possible" (72 hrs).

Vulnerabilities that are covered by this patch: CVE-2013-5329, CVE-2013-5330.

APSB13-27: Hotfix for Coldfusion

This hotfix affects Coldfusion 9 as well as 10. Adobe assigned it a priority of 1 for Coldfusion 10 and 2 for Coldfusion 9.x. The hotfix patches two vulnerabilities:

1 - A reflective XSS vulnerability in Coldfusion 9/10 (CVE-2013-5326)

2 - An authentication bypass problem in Coldfusion 10 (CVE-2013-5328)

The second vulnerability which allows unauthorized remote read access is probably the reason this hotfix is rated "1" for Coldfusion 10.

Google released a new version of Chrome today: Chrome 31. The update includes 25 security fixes. Not exactly a security fix, but still interesting: Chrome 31 improves the SSL ciphers by adding support for the AES-GCM ciphers.

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


November 2013 Microsoft Patch Tuesday

Overview of the November 2013 Microsoft patches and their status.

| # | Affected | Contra Indications - KB | Known Exploits | Microsoft rating(**) | ISC rating(*): clients | ISC rating(*): servers |
|---|---|---|---|---|---|---|
| MS13-088 | Cumulative Security Update for Internet Explorer (Replaces MS13-080). Internet Explorer: CVE-2013-3891 CVE-2013-3908 CVE-2013-3909 CVE-2013-3910 CVE-2013-3911 CVE-2013-3912 CVE-2013-3914 CVE-2013-3915 CVE-2013-3916 CVE-2013-3917 | KB 2888505 | No. | Severity: Critical, Exploitability: 1,2,3 | Critical | Important |
| MS13-089 | Remote Code Execution Vulnerability in Windows Graphics Device Interface (Replaces MS08-071). GDI+: CVE-2013-3940 | KB 2876331 | No. | Severity: Critical, Exploitability: 1 | Critical | Important |
| MS13-090 | Remote Code Execution Vulnerability in InformationCardSigninHelp ActiveX Class (Replaces MS11-090). ActiveX (icardie.dll): CVE-2013-3918 | KB 2900986 | Yes. | Severity: Critical, Exploitability: 1 | PATCH NOW! | Important |
| MS13-091 | Remote Code Execution Vulnerability in Microsoft Office (Replaces MS09-073). Microsoft Office (Word): CVE-2013-0082 CVE-2013-1324 CVE-2013-1325 | KB 2885093 | No. | Severity: Important, Exploitability: 1,3 | Critical | Important |
| MS13-092 | Elevation of Privileges Vulnerability in HyperV. HyperV Guests (DoS for Host): CVE-2013-3898 | KB 2893986 | No. | Severity: Important, Exploitability: 1 | Important | Important |
| MS13-093 | Information Disclosure Vulnerability in Ancillary Function Driver (Replaces MS12-009). Ancillary Function Driver: CVE-2013-3887 | KB 2875783 | No. | Severity: Important, Exploitability: 3 | Important | Important |
| MS13-094 | Information Disclosure Vulnerability in Outlook (Replaces MS13-068). Outlook: CVE-2013-3905 | KB 2894514 | No. | Severity: Important, Exploitability: 3 | Important | Less Important |
| MS13-095 | Denial of Service Vulnerability in Digital Signatures (Replaces Advisory 2661254). Digital Signatures: CVE-2013-3869 | KB 2868626 | No. | Severity: Important, Exploitability: 3 | N/A | Important |

We appreciate updates

US based customers can call Microsoft for free patch related support on 1-866-PCSAFETY

(*): ISC rating. We use 4 levels:

- PATCH NOW: Typically used where we see immediate danger of exploitation. Typical environments will want to deploy these patches ASAP. Workarounds are typically not accepted by users or are not possible. This rating is often used when typical deployments make it vulnerable and exploits are being used or easy to obtain or make.

- Critical: Anything that needs little to become "interesting" for the dark side. Best approach is to test and deploy ASAP. Workarounds can give more time to test.

- Important: Things where more testing and other measures can help.

- Less Urgent: Typically we expect the impact if left unpatched to be not that big a deal in the short term. Do not forget them however.

- The difference between the client and server rating is based on how you use the affected machine. We take into account the typical client and server deployment in the usage of the machine and the common measures people typically have in place already. The measures we presume are simple best practices for servers, such as not using Outlook, MSIE, Word etc. to do traditional office or leisure work.

- The rating is not a risk analysis as such. It is a rating of importance of the vulnerability and the perceived or even predicted threat for affected systems. The rating does not account for the number of affected systems there are. It is for an affected system in a typical worst-case role.

- Only the organization itself is in a position to do a full risk analysis involving the presence (or lack of) affected systems, the actually implemented measures, the impact on their operation and the value of the assets involved.

- All patches released by a vendor are important enough to have a close look if you use the affected systems. There is little incentive for vendors to publicize patches that do not have some form of risk to them.

(**): The exploitability rating we show is the worst of them all due to the too large number of ratings Microsoft assigns to some of the patches.

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


OpenSSH Vulnerability

OpenSSH announced that OpenSSH 6.2 and 6.3 are vulnerable to an authenticated code execution flaw. The vulnerability affects the AES-GCM cipher. As a quick fix, you can disable the cipher (see the URL below for details). Or you can upgrade to OpenSSH 6.4.

A user may bypass restrictions imposed on the user's account by exploiting the flaw, but the user needs valid credentials to take advantage of it.

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


What Happened to the SANS Ads?

You may have noticed that the "ad" frame we use in the top right corner has been empty for the last couple days. Oddly, we didn't get a lot of complaints about that ;-)

The reason is pretty simple: The SANS ads are included via an iframe. However, iframes, as Smit B. Shah pointed out in an e-mail to the SANS webmaster, can also be used in clickjacking attacks. So we decided to implement a simple anti-clickjacking defense by adding the "X-Frame-Options: SAMEORIGIN" header to all sans.org pages. Of course, "isc.sans.edu" is not "sameorigin" and the ads no longer show up if your browser supports the header.

Yes, there are JavaScript tricks to prevent clickjacking, but they are far from reliable. If you still see the ads: You probably should use a newer browser. Of course, we will exempt some pages (like the ads ;-) ) from the header in the future, but for now we figured that adding the header is more important than showing ads.
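For readers who want to try the same defense, here is a minimal sketch of a WSGI middleware that adds the header to every response except a list of exempted paths. It is not how sans.org implements it; the class name `FrameOptionsMiddleware`, the `/ads/` prefix and the `demo_app` stand-in are all assumptions made for the example.

```python
# Minimal WSGI middleware sketch: add "X-Frame-Options: SAMEORIGIN" to every
# response, except for paths that are meant to be framed by other sites.

class FrameOptionsMiddleware:
    def __init__(self, app, exempt_prefixes=()):
        self.app = app
        self.exempt_prefixes = tuple(exempt_prefixes)

    def __call__(self, environ, start_response):
        path = environ.get("PATH_INFO", "")
        # str.startswith accepts a tuple; an empty tuple never matches.
        exempt = path.startswith(self.exempt_prefixes)

        def patched_start_response(status, headers, exc_info=None):
            if not exempt:
                # Replace any existing value rather than sending the header twice.
                headers = [(k, v) for k, v in headers
                           if k.lower() != "x-frame-options"]
                headers.append(("X-Frame-Options", "SAMEORIGIN"))
            return start_response(status, headers, exc_info)

        return self.app(environ, patched_start_response)


def demo_app(environ, start_response):
    # Stand-in application used only for this example.
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"hello"]


# Hypothetical setup: everything under /ads/ may still be framed elsewhere.
app = FrameOptionsMiddleware(demo_app, exempt_prefixes=("/ads/",))
```

Exempting by path prefix keeps the default safe: a new page gets the header unless someone explicitly opts it out.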

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


Microsoft and Facebook announce bug bounty

Microsoft and Facebook, under the auspices of HackerOne, have announced a bug bounty program for the key applications that power the Internet. The bounty covers a wide range of applications, from the sandboxes in popular browsers, to the programming languages that power the LAMP stack (PHP, Perl, Ruby and Rails), to the web servers that serve up the content (nginx, Apache and others). Bounties for a successful vulnerability report range from a few hundred dollars to a couple of thousand.

I have, in the past, been on the fence over the value of bug bounties. But the recent spate of zero-day attacks has made me rethink this stance. It is clear that we need to find a way to reduce the number of vulnerabilities in software as early in the software development cycle as possible. I come from a software development background and am painfully aware of how difficult it is to avoid making mistakes in coding, and testing can never exercise all possible ways an application can be abused. I once believed that code coverage tools and dynamic and static analysis tools would close that gap somewhat, but the tools that do exist have not met expectations.

Until the unlikely day comes that we can ensure applications are deployed without vulnerabilities, perhaps the best we can achieve are bug bounties to help stay a bit ahead of the bad guys.

-- Rick Wanner MSISE - rwanner at isc dot sans dot edu - http://namedeplume.blogspot.com/ - Twitter:namedeplume (Protected)


IE Zero-Day Vulnerability Exploiting msvcrt.dll

FireEye Labs has discovered an "exploit that leverages a new information leakage vulnerability and an IE out-of-bounds memory access vulnerability to achieve code execution." [1] Based on their analysis, it affects IE 7, 8, 9 and 10.

According to Microsoft, the vulnerability can be mitigated by EMET.[2][3] Additional information is available in the FireEye Labs post [1].

[1] http://www.fireeye.com/blog/technical/2013/11/new-ie-zero-day-found-in-watering-hole-attack.html

[2] https://isc.sans.edu/forums/diary/EMET+40+is+now+available+for+download/16019

[3] http://www.microsoft.com/en-us/download/details.aspx?id=39273

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


Microsoft Patch Tuesday Preview

Looks like next Tuesday will be another "average" patch Tuesday. We are to expect 8 total patches, 3 of which are rated "critical" with the remaining 5 "important".

There will be no patch for the TIFF vulnerability. But then again, it was just announced this week. Also, if you are running Windows 7 or later, you should be safe for now from this exploit.

The three critical patches include the typical "cumulative Internet Explorer" patch and two Windows patches. It doesn't look like any of the patches addresses specific server issues, but some of the Windows patches will affect servers as well as clients.

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


Rapid7 Discloses IPMI Vulnerabilities

Rapid7 today disclosed a number of vulnerabilities in Supermicro's IPMI implementation [1]. The vulnerabilities include static encryption keys as well as hard-coded, non-updatable passwords. Sadly, these are typical embedded system issues, and not just common in IPMI implementations. In addition, several buffer overflow vulnerabilities are disclosed in CGI programs, some of which are accessible without authentication. For those that require authentication, the hard-coded password will provide easy access.

According to the blog post, Metasploit modules to test for these vulnerabilities are coming.

There is little one can do to protect an IPMI interface if the interface is needed to remotely administer the system, in particular given the fixed backdoor passwords. The best you can do is limit access to the IPMI interface via a firewall, and maybe change the default ports if this is an option. Once the interface is exposed, an attacker will have the same access to the system as a user with physical system access. Remember that turning off a system may leave IPMI enabled unless you disconnect power or network connectivity (see "Hacking Servers that are turned off").

[1] https://community.rapid7.com/community/metasploit/blog/2013/11/05/supermicro-ipmi-firmware-vulnerabilities

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


TIFF images in MS-Office documents used in targeted attacks

Today, Microsoft published a research note and a security advisory covering a remote code execution vulnerability (CVE-2013-3906) that can be triggered with a malformed TIFF image. According to the write-up, the vulnerability is being actively exploited in a "very limited" number of targeted attacks that involve a Word (MS-Office) document which in turn contains the malformed TIFF image.

There is no patch yet, but the two Microsoft articles contain some information on mitigation options.


Is your vacuum cleaner sending spam?

Last week, a story in a Saint Petersburg (the icy one, not the beach) newspaper caught quite some attention, and was picked up by The Register [1]. The story claimed that appliances like tea kettles, vacuum cleaners and iron(y|ing) irons shipped from China and sold in Russia were discovered to contain rogue, WiFi enabled chip sets. As soon as power was applied, the vacuum cleaner began trolling for open WiFi access points, and if it found one, it would hook up to a spam relay and start ... probably a sales pitch spam campaign for cheap vacuum cleaners from China?

A couple of years back, we at SANS ISC were investigating a significant Christmas-time scam that involved electronic picture frames that came pre-loaded with lovely malware. Could it be that this year, we are facing an even more sinister threat? Could it be that all those festive domestic efficiency gifts that adoring husbands love to pile on their rightly unappreciative wives could, in fact, be part of an evil Chinese ploy to subvert our hearth and home with trojaned appliances?!

As The Register already reported, yes, from a technical point of view, this could work. From a cost point of view, it could also work. WiFi chipsets and associated logic are, if produced in significant quantity, down to about $3 apiece. There is also the blog post by HaxIt [2], showing how a WiFi-enabled Transcend SD card (think "small") can be pwned, rooted, and turned into a little WiFi enabled Linux PC. The cheapest cards of this type are currently at around $20, which is likely not yet cost effective for spamming and such, but is getting close.

The real "killer" application would be such a WiFi enabled nano PC that works without external power. The specimens quoted in the Register article were all hooked up to a power source within the appliance. But .. what if these toys can draw their power from Thin Air, like RFID tags do? This is called "energy scavenging" or "energy harvesting", and is a serious research topic with lots of very useful and benign applications. But imagine it would work to power "over the air" an SD-card with WiFi and Linux. You could then stick that SD card onto the used chewing gum that is disgustingly yet conveniently already present under your chair in your local Starbucks .. and that's all you'll need to have a relay, bot, whatever you want. It won't be fast, but hey, with the right P2P design, a couple of these cards would probably beat TOR in terms of anonymity and isolation any day.

I did a couple of back-of-the-envelope calculations, and I don't quite think that "energy harvesting" by drawing on the power radiated by the WiFi access point alone will work for WiFi enabled chipsets just yet. WiFi transmissions are quite power hungry, and the path loss over thin air is significant at the frequencies where WiFi operates. [Fellow amateur radio geeks might remember the 20*log10(4*pi*distance/lambda) equation :)]. I would love to be proven wrong though - if you have a WiFi design that draws its sole power from RF, please comment below. Photovoltaic and mechanical energy sources don't count, I know that these can be done, but they don't quite offer themselves to the chewing-gum-mounted-spambot-in-Starbucks scenario just yet.
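For those who want to redo the envelope math, here is a small sketch of the free-space path loss formula quoted above. The 2.437 GHz channel frequency and the 20 dBm (100 mW) transmit power are assumptions picked for illustration, and the model ignores antenna gains, walls and harvesting efficiency, so treat the numbers as an optimistic upper bound.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def free_space_path_loss_db(distance_m, frequency_hz):
    """Free-space path loss in dB: 20*log10(4*pi*distance/lambda)."""
    wavelength_m = SPEED_OF_LIGHT / frequency_hz
    return 20.0 * math.log10(4.0 * math.pi * distance_m / wavelength_m)


def received_power_dbm(tx_power_dbm, distance_m, frequency_hz):
    """Power at an isotropic receiver, ignoring antenna gains and obstacles."""
    return tx_power_dbm - free_space_path_loss_db(distance_m, frequency_hz)


def dbm_to_microwatts(power_dbm):
    """Convert dBm to microwatts (0 dBm is 1 mW, i.e. 1000 uW)."""
    return 10.0 ** (power_dbm / 10.0) * 1000.0


# Assumed scenario: 2.4 GHz band, access point transmitting at 20 dBm.
FREQ_HZ = 2.437e9
TX_DBM = 20.0

for d in (1, 5, 10):
    rx = received_power_dbm(TX_DBM, d, FREQ_HZ)
    print(f"{d:>2} m: loss {free_space_path_loss_db(d, FREQ_HZ):5.1f} dB, "
          f"received {rx:6.1f} dBm ({dbm_to_microwatts(rx):.3f} uW)")
```

Under these assumptions the loss is already roughly 40 dB at one metre, which leaves on the order of 10 microwatts out of the original 100 milliwatts, and every doubling of the distance costs another 6 dB.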

So .. while the fully autonomous SD-card based bot without external power source is maybe not feasible yet .. a WiFi enabled bot inside your vacuum cleaner, drawing power off the mains, is definitely feasible, and quite cost effective. Not that you needed yet another reason to not run an open WiFi, I hope. And your partner will probably appreciate a more meaningful Christmas gift than a vacuum cleaner anyway. Try a cast iron dutch oven or a kitchen fire extinguisher this year. They are still analog, and unlikely to be bugged or backdoored for now :-D.

[1] http://www.theregister.co.uk/2013/10/29/dont_brew_that_cuppa_your_kettle_could_be_a_spambot/

[2] http://haxit.blogspot.ch/2013/08/hacking-transcend-wifi-sd-cards.html


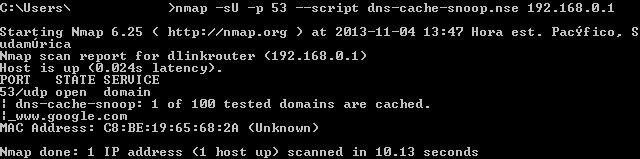

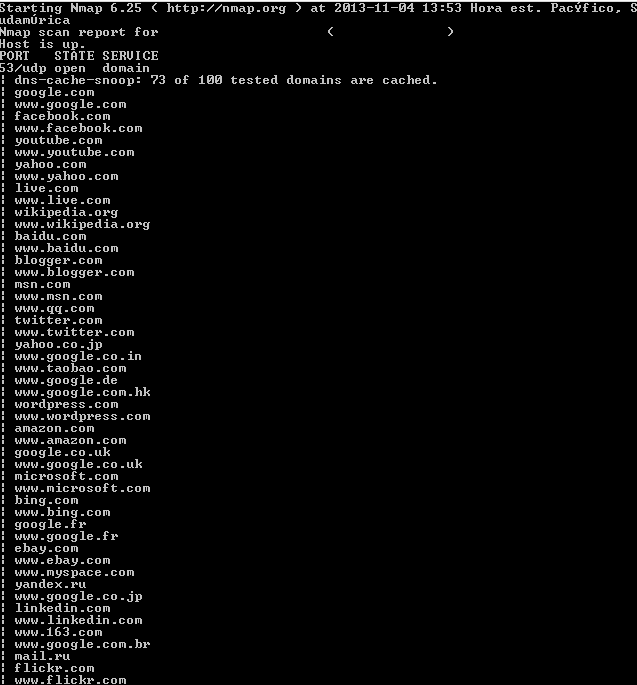

When attackers use your DNS to check for the sites you are visiting

Nowadays, attackers are definitely interested in checking what sites you are visiting. Depending on that information, they can set up attacks like the following:

- Phishing websites and e-mail scams targeted at specific people so that they give up their private information.

- Network spoofing with tools like dsniff, where attackers can tell computers that the sites they want to visit are located somewhere else, enabling the attackers to interact with victims while posing as the original site.

One of the most widely used techniques is DNS snooping, where the attacker asks the domain's DNS server which queries its internal users have performed and thereby learns which sites have been visited.

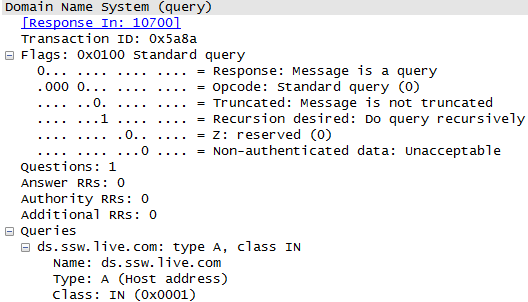

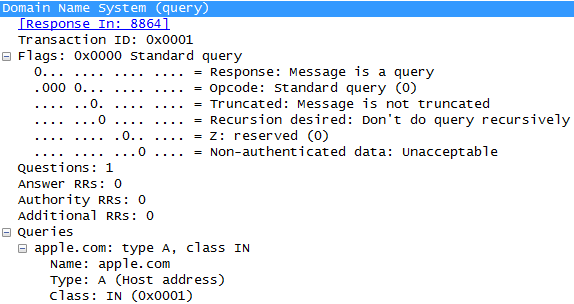

A normal DNS query has the following information:

What do those fields mean? Let's check each of them:

- Transaction ID: It's used to match the request and reply packets so the computer is able to identify which answer belongs to which question.

- Response: If set to 0, it is a query packet. If set to 1, it is a response packet.

- Opcode: We can find the following opcodes:

| Opcode | Description |
|---|---|
| 0 | Standard Query |
| 1 | Inverse Query |
| 2 | Server Status Request |
| 3 | Unassigned |
| 4 | Notify |
| 5 | Update |
| 6-15 | Unassigned |

- Truncated: This flag indicates whether only the first 512 bytes of the response are returned. Since this is a question, it is set to 0.

- Recursion Desired (RD): Instructs the DNS server whether or not to perform recursion to look for the answer to the query.

- Z: Used in old DNS implementations. If set to 1, only answers from the primary DNS of the destination zone are accepted (see RFC 5395).

- Non authenticated Data: Used in response packets. If set to 1, it indicates that all data included in the answer and authority sections of the response has been authenticated by the server according to the policies of that server. Since this is a query packet, it is set to 0.

- Questions: Indicates the number of query structures present in the packet.

- Answer Resource Record (RR): Indicates the number of entries in the answer resource record list that were returned.

- Authority RR: Indicates the number of entries in the authority resource record list that were returned.

- Additional RR: Indicates the number of entries in the additional resource record list that were returned.

- Queries: List of queries included in the packet.
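To make the field list more tangible, here is a minimal sketch that packs a DNS query by hand and decodes the main header fields again, using only Python's `struct` module. The function names `build_query` and `parse_header`, the transaction ID and the name `www.example.com` are assumptions made for the example; the bit positions follow the header layout in RFC 1035.

```python
import struct


def build_query(name, transaction_id=0x1234, recursion_desired=True,
                qtype=1, qclass=1):
    """Build a standard query (opcode 0) with one question; type A, class IN by default."""
    # In a plain query the only flag that may be set is RD (bit 8).
    flags = 0x0100 if recursion_desired else 0x0000
    # Header: ID, flags, then the Questions, Answer, Authority, Additional counts.
    header = struct.pack("!HHHHHH", transaction_id, flags, 1, 0, 0, 0)
    # Each label is length-prefixed; the name ends with a zero byte.
    # (No validation of the 63-byte label limit in this sketch.)
    labels = name.rstrip(".").encode("ascii").split(b".")
    qname = b"".join(bytes([len(label)]) + label for label in labels) + b"\x00"
    return header + qname + struct.pack("!HH", qtype, qclass)


def parse_header(packet):
    """Decode the fixed 12-byte DNS header into a dictionary."""
    tid, flags, qd, an, ns, ar = struct.unpack("!HHHHHH", packet[:12])
    return {
        "transaction_id": tid,
        "response": (flags >> 15) & 0x1,          # 0 = query, 1 = response
        "opcode": (flags >> 11) & 0xF,
        "truncated": (flags >> 9) & 0x1,
        "recursion_desired": (flags >> 8) & 0x1,
        "z": (flags >> 6) & 0x1,
        "rcode": flags & 0xF,
        "questions": qd,
        "answer_rrs": an,
        "authority_rrs": ns,
        "additional_rrs": ar,
    }


# Example: a non-recursive query, so the server is asked not to go
# looking for the answer on the client's behalf.
packet = build_query("www.example.com", transaction_id=0xBEEF,
                     recursion_desired=False)
print(parse_header(packet))
```

The sketch only builds and decodes bytes; sending the packet over UDP port 53 and parsing the answer sections are left out on purpose.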

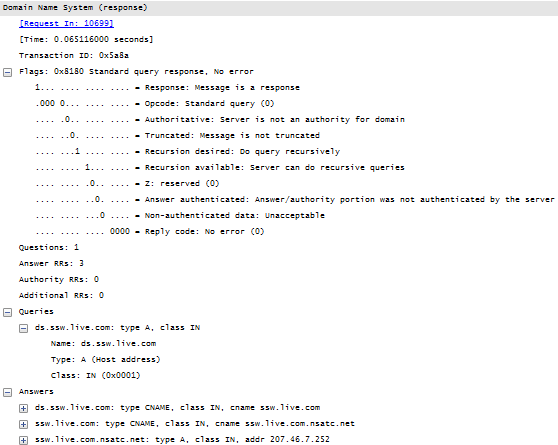

The answer to the last query is shown in the following figure:

The response code table for the DNS protocol is the following:

| Rcode | Description |
|---|---|
| 0 | No error. The request completed successfully. |
| 1 | Format error. The name server was unable to interpret the query. |
| 2 | Server failure. The name server was unable to process this query due to a problem with the name server. |
| 3 | Name Error. Meaningful only for responses from an authoritative name server, this code signifies that the domain name referenced in the query does not exist. |
| 4 | Not Implemented. The name server does not support the requested kind of query. |